Incremental Crawling with Scrapy-Redis: Explanation and Worked Example

![Incremental crawling with Scrapy-Redis: explanation and worked example [Python basics]](/wp-content/uploads/new2022/20220602jjjkkk2/2985211544_1.jpg)

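1. Create a Scrapy project

scrapy startproject myproject

2. Create a new spider file in the project

scrapy genspider mydomain mydomain.com  # mydomain is the spider name; mydomain.com is the domain to crawl

3. Run the project

scrapy crawl <spider>
scrapy runspider <spider_file.py>  # second way to run a spider: directly from its file

4. Check the spiders

scrapy check  # runs the spiders' contract checks (it is not a syntax checker)

5. Other commands

scrapy crawl <spider> --nolog  # run a spider without printing the log
scrapy list  # list the spiders available in the project
scrapy fetch <url>  # download the page with the Scrapy downloader and print it to the terminal, much like fetching it with requests or urllib
scrapy view <url>  # download the page and open it in a browser, showing the page as Scrapy sees it
scrapy shell [url]  # open the interactive Scrapy shell (similar to IPython), useful for testing
scrapy parse <url> [options]  # fetch the URL, parse it with the spider that handles it, and print the result
scrapy settings [options]  # print Scrapy setting values
scrapy bench  # run a quick benchmark of crawl speed on this machine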

1. The example crawls book information from Dangdang (dangdang.com). The project's directory layout is as follows:

E:.
│  dangdang_content.sql
│  scrapy.cfg
│
├─.idea
│  │  misc.xml
│  │  modules.xml
│  │  ScrapyRedisPro.iml
│  │  workspace.xml
│  │
│  └─libraries
│          R_User_Library.xml
│
└─ScrapyRedisPro
    │  items.py
    │  middlewares.py
    │  pipelines.py
    │  settings.py
    │  test.py
    │  __init__.py
    │
    ├─.idea
    │  │  misc.xml
    │  │  modules.xml
    │  │  ScrapyRedisPro.iml
    │  │  workspace.xml
    │  │
    │  └─libraries
    │          R_User_Library.xml
    │
    ├─spiders
    │  │  test.py
    │  │  __init__.py
    │  │
    │  └─__pycache__
    │          test.cpython-36.pyc
    │          __init__.cpython-36.pyc
    │
    └─__pycache__
            items.cpython-36.pyc
            middlewares.cpython-36.pyc
            pipelines.cpython-36.pyc
            settings.cpython-36.pyc
            __init__.cpython-36.pyc

2. MySQL table structure

SET NAMES utf8mb4;
SET FOREIGN_KEY_CHECKS = 0;

-- ----------------------------
-- Table structure for dangdang_content
-- ----------------------------
DROP TABLE IF EXISTS `dangdang_content`;
CREATE TABLE `dangdang_content` (
  `id` int NOT NULL AUTO_INCREMENT,
  `b_cate` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci NULL DEFAULT NULL,
  `m_cate` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci NULL DEFAULT NULL,
  `s_href` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci NULL DEFAULT NULL,
  `s_cate` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci NULL DEFAULT NULL,
  `book_img` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci NULL DEFAULT NULL,
  `book_name` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci NULL DEFAULT NULL,
  `book_desc` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci NULL DEFAULT NULL,
  `book_price` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci NULL DEFAULT NULL,
  `book_author` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci NULL DEFAULT NULL,
  `book_publish_date` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci NULL DEFAULT NULL,
  `book_press` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci NULL DEFAULT NULL,
  `insert_data` timestamp(0) NULL DEFAULT CURRENT_TIMESTAMP(0) ON UPDATE CURRENT_TIMESTAMP(0),
  PRIMARY KEY (`id`) USING BTREE
) ENGINE = InnoDB CHARACTER SET = utf8mb4 COLLATE = utf8mb4_general_ci ROW_FORMAT = Dynamic;

SET FOREIGN_KEY_CHECKS = 1;

1. The spider file test.py

# -*- coding: utf-8 -*-
from copy import deepcopy

import scrapy
from scrapy_redis.spiders import RedisCrawlSpider

from ScrapyRedisPro.items import ScrapyredisproItem


class TestSpider(RedisCrawlSpider):
    name = "test"
    # allowed_domains = ["www.baidu.com"]
    # start_urls = ["http://www.baidu.com/"]

    # Name of the Redis list that the scheduler reads start URLs from
    redis_key = "dangdang"

    def parse(self, response):
        """
        Walk the category menu on the start page:
        1. collect the big, middle and small category of every entry;
        2. request each small-category URL and hand the response to parse_book_list.
        """
        # Big-category groups
        div_list = response.xpath("//div[@class='con flq_body']/div")
        for div in div_list:
            item = {}
            item["b_cate"] = div.xpath("./dl/dt//text()").extract()
            item["b_cate"] = [i.strip() for i in item["b_cate"] if len(i.strip()) > 0]
            # Middle-category groups
            dl_list = div.xpath("./div//dl[@class='inner_dl']")
            for dl in dl_list:
                item["m_cate"] = dl.xpath("./dt//text()").extract()
                item["m_cate"] = [i.strip() for i in item["m_cate"] if len(i.strip()) > 0][0]
                # Small-category groups
                a_list = dl.xpath("./dd/a")
                for a in a_list:
                    item["s_href"] = a.xpath("./@href").extract_first()
                    item["s_cate"] = a.xpath("./text()").extract_first()
                    if item["s_href"] is not None:
                        # print(item)
                        # Pass a copy: the loops keep overwriting the same dict
                        yield scrapy.Request(
                            item["s_href"],
                            callback=self.parse_book_list,
                            meta={"item": deepcopy(item)}
                        )

    def parse_book_list(self, response):
        """
        Extract every book on a listing page into the item and yield it,
        then follow the next-page link if there is one.
        """
        item = response.meta["item"]
        li_list = response.xpath("//ul[@class='bigimg']/li")
        for li in li_list:
            item["book_img"] = li.xpath("./a[@class='pic']/img/@src").extract_first()
            # Lazy-loaded covers keep the real URL in data-original
            if item["book_img"] == "images/model/guan/url_none.jpg":
                item["book_img"] = li.xpath("./a[@class='pic']/img/@data-original").extract_first()
            item["book_name"] = li.xpath("./p[@class='name']/a/@title").extract_first()
            item["book_desc"] = li.xpath("./p[@class='detail']/text()").extract_first()
            item["book_price"] = li.xpath(".//span[@class='search_now_price']/text()").extract_first()
            item["book_author"] = li.xpath("./p[@class='search_book_author']/span[1]/a/text()").extract()
            item["book_publish_date"] = li.xpath("./p[@class='search_book_author']/span[2]/text()").extract_first()
            item["book_press"] = li.xpath("./p[@class='search_book_author']/span[3]/a/text()").extract_first()
            # print(item)
            # Yield a copy: the same dict is overwritten on the next iteration,
            # possibly before the pipeline has written it to the database
            yield deepcopy(item)
        # Next page
        next_url = response.xpath("//li[@class='next']/a/@href").extract_first()
        if next_url is not None:
            # Resolve a relative link against the current page URL
            next_url = response.urljoin(next_url)
            yield scrapy.Request(
                next_url,
                callback=self.parse_book_list,
                meta={"item": item}
            )
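The spider attaches `deepcopy(item)` to each request because the nested loops keep writing into one dict, while the callbacks that read it run later. The following stand-alone sketch reproduces the effect in plain Python. The `collect` helper and the sample category names are hypothetical stand-ins for illustration, not Scrapy API.

```python
from copy import deepcopy


def collect(categories, copy_item):
    """Simulate queuing one 'request' per sub-category, each carrying the item."""
    queued = []
    item = {}
    for big, smalls in categories.items():
        item["b_cate"] = big
        for small in smalls:
            item["s_cate"] = small
            # Without a copy, every queued entry refers to the same dict object.
            queued.append(deepcopy(item) if copy_item else item)
    return queued


# Hypothetical sample data
categories = {"Fiction": ["Novels", "Poetry"], "Science": ["Physics"]}

shared = collect(categories, copy_item=False)
copied = collect(categories, copy_item=True)

print([i["s_cate"] for i in shared])  # every entry shows the last value written
print([i["s_cate"] for i in copied])  # each entry keeps its own value
```

The same reasoning applies to items yielded inside a loop when a pipeline processes them after the loop has moved on.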

2. middlewares.py (middleware file)

# -*- coding: utf-8 -*-
# Define here the models for your spider middleware
#
# See documentation in:
# https://doc.scrapy.org/en/latest/topics/spider-middleware.html
import random
import time

from scrapy import signals
from scrapy.downloadermiddlewares.useragent import UserAgentMiddleware
from scrapy.http import HtmlResponse


class ScrapyredisproSpiderMiddleware(object):
    # Not all methods need to be defined. If a method is not defined,
    # scrapy acts as if the spider middleware does not modify the
    # passed objects.

    @classmethod
    def from_crawler(cls, crawler):
        # This method is used by Scrapy to create your spiders.
        s = cls()
        crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
        return s

    def process_spider_input(self, response, spider):
        # Called for each response that goes through the spider
        # middleware and into the spider.
        # Should return None or raise an exception.
        return None

    def process_spider_output(self, response, result, spider):
        # Called with the results returned from the Spider, after
        # it has processed the response.
        # Must return an iterable of Request, dict or Item objects.
        for i in result:
            yield i

    def process_spider_exception(self, response, exception, spider):
        # Called when a spider or process_spider_input() method
        # (from other spider middleware) raises an exception.
        # Should return either None or an iterable of Response, dict
        # or Item objects.
        pass

    def process_start_requests(self, start_requests, spider):
        # Called with the start requests of the spider, and works
        # similarly to the process_spider_output() method, except
        # that it doesn’t have a response associated.
        # Must return only requests (not items).
        for r in start_requests:
            yield r

    def spider_opened(self, spider):
        spider.logger.info("Spider opened: %s" % spider.name)

class ScrapyredisproDownloaderMiddleware(object):
    # Not all methods need to be defined. If a method is not defined,
    # scrapy acts as if the downloader middleware does not modify the
    # passed objects.

    @classmethod
    def from_crawler(cls, crawler):
        # This method is used by Scrapy to create your spiders.
        s = cls()
        crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
        return s

    def process_request(self, request, spider):
        # Called for each request that goes through the downloader
        # middleware.
        # Must either:
        # - return None: continue processing this request
        # - or return a Response object
        # - or return a Request object
        # - or raise IgnoreRequest: process_exception() methods of
        #   installed downloader middleware will be called
        return None

    # Intercepts the response that the downloader passes to the spider.
    # request: the request that produced the response
    # response: the intercepted response
    # spider: the running instance of the spider class
    def process_response(self, request, response, spider):
        # Replace the page data for dynamically loaded pages.
        # The Dangdang spider never requests these news.163.com URLs, so this
        # branch is not taken in this project. It appears to assume that the
        # spider exposes a Selenium WebDriver as spider.bro.
        if request.url in ["http://news.163.com/domestic/", "http://news.163.com/world/", "http://news.163.com/air/",
                           "http://war.163.com/"]:
            spider.bro.get(url=request.url)
            js = "window.scrollTo(0,document.body.scrollHeight)"
            spider.bro.execute_script(js)
            time.sleep(3)  # give the browser time to load the data
            # The page source now includes the dynamically loaded news data
            page_text = spider.bro.page_source
            # Return a new response built from the rendered page
            return HtmlResponse(url=spider.bro.current_url, body=page_text, encoding="utf-8", request=request)
        else:
            return response

    def process_exception(self, request, exception, spider):
        # Called when a download handler or a process_request()
        # (from other downloader middleware) raises an exception.
        # Must either:
        # - return None: continue processing this exception
        # - return a Response object: stops process_exception() chain
        # - return a Request object: stops process_exception() chain
        pass

    def spider_opened(self, spider):
        spider.logger.info("Spider opened: %s" % spider.name)

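# User-agent pool (wrapped in its own downloader middleware class).
# It subclasses the imported UserAgentMiddleware class.
class RandomUserAgent(UserAgentMiddleware):
    def process_request(self, request, spider):
        # Pick a random user agent from the list
        ua = random.choice(user_agent_list)
        # Write it into the intercepted request. Assign directly:
        # setdefault() would leave an already-set User-Agent header untouched.
        request.headers["User-Agent"] = ua


# Swap the proxy IP for every intercepted request.
# Note: this class is not enabled in DOWNLOADER_MIDDLEWARES in settings.py.
class Proxy(object):
    def process_request(self, request, spider):
        # Check whether the request URL uses http or https
        # request.url looks like: http://www.xxx.com
        h = request.url.split(":")[0]  # scheme of the request
        if h == "https":
            ip = random.choice(PROXY_https)
            request.meta["proxy"] = "https://" + ip
        else:
            ip = random.choice(PROXY_http)
            request.meta["proxy"] = "http://" + ip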

PROXY_http = [
    "153.180.102.104:80",
    "195.208.131.189:56055",
]

PROXY_https = [
    "120.83.49.90:9000",
    "95.189.112.214:35508",
]

user_agent_list = [
    "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 "
    "(KHTML, like Gecko) Chrome/22.0.1207.1 Safari/537.1",
    "Mozilla/5.0 (X11; CrOS i686 2268.111.0) AppleWebKit/536.11 "
    "(KHTML, like Gecko) Chrome/20.0.1132.57 Safari/536.11",
    "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.6 "
    "(KHTML, like Gecko) Chrome/20.0.1092.0 Safari/536.6",
    "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.6 "
    "(KHTML, like Gecko) Chrome/20.0.1090.0 Safari/536.6",
    "Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.1 "
    "(KHTML, like Gecko) Chrome/19.77.34.5 Safari/537.1",
    "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/536.5 "
    "(KHTML, like Gecko) Chrome/19.0.1084.9 Safari/536.5",
    "Mozilla/5.0 (Windows NT 6.0) AppleWebKit/536.5 "
    "(KHTML, like Gecko) Chrome/19.0.1084.36 Safari/536.5",
    "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 "
    "(KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
    "Mozilla/5.0 (Windows NT 5.1) AppleWebKit/536.3 "
    "(KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_8_0) AppleWebKit/536.3 "
    "(KHTML, like Gecko) Chrome/19.0.1063.0 Safari/536.3",
    "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 "
    "(KHTML, like Gecko) Chrome/19.0.1062.0 Safari/536.3",
    "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 "
    "(KHTML, like Gecko) Chrome/19.0.1062.0 Safari/536.3",
    "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 "
    "(KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
    "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.3 "
    "(KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
    "Mozilla/5.0 (Windows NT 6.1) AppleWebKit/536.3 "
    "(KHTML, like Gecko) Chrome/19.0.1061.1 Safari/536.3",
    "Mozilla/5.0 (Windows NT 6.2) AppleWebKit/536.3 "
    "(KHTML, like Gecko) Chrome/19.0.1061.0 Safari/536.3",
    "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/535.24 "
    "(KHTML, like Gecko) Chrome/19.0.1055.1 Safari/535.24",
    "Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/535.24 "
    "(KHTML, like Gecko) Chrome/19.0.1055.1 Safari/535.24"
]
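The two rotation middlewares above come down to two small decisions: which pool a proxy is drawn from, and whether a header that is already present gets replaced. Below is a minimal stand-alone sketch of both, using plain dicts rather than Scrapy's request objects. The pools, the agent strings, and the helper names `pick_proxy` and `set_user_agent` are hypothetical; the addresses come from the range reserved for documentation and are not working proxies.

```python
import random
from urllib.parse import urlsplit

# Placeholder pools (documentation-only addresses), not working proxies
HTTP_PROXIES = ["192.0.2.10:8080", "192.0.2.11:8080"]
HTTPS_PROXIES = ["192.0.2.20:8443"]
USER_AGENTS = ["ExampleAgent/1.0", "ExampleAgent/2.0"]


def pick_proxy(url, rng=random):
    """Choose a proxy from the pool that matches the scheme of the request URL."""
    scheme = "https" if urlsplit(url).scheme == "https" else "http"
    pool = HTTPS_PROXIES if scheme == "https" else HTTP_PROXIES
    return f"{scheme}://{rng.choice(pool)}"


def set_user_agent(headers, ua, overwrite):
    """Write ua into headers, either replacing or keeping an existing value."""
    if overwrite:
        headers["User-Agent"] = ua
    else:
        # setdefault() only writes when the key is missing
        headers.setdefault("User-Agent", ua)
    return headers


rng = random.Random(0)
already_set = {"User-Agent": "DefaultAgent/0.1"}

kept = set_user_agent(dict(already_set), rng.choice(USER_AGENTS), overwrite=False)
replaced = set_user_agent(dict(already_set), rng.choice(USER_AGENTS), overwrite=True)

print(kept["User-Agent"])      # the pre-existing header survives setdefault()
print(replaced["User-Agent"])  # one of USER_AGENTS
print(pick_proxy("https://example.com/books", rng))
```

If an earlier middleware has already set a `User-Agent`, only the overwriting variant makes the rotation visible to the server.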

3. items.py

# -*- coding: utf-8 -*-
# Define here the models for your scraped items
#
# See documentation in:
# https://doc.scrapy.org/en/latest/topics/items.html
import scrapy


class ScrapyredisproItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    b_cate = scrapy.Field()
    m_cate = scrapy.Field()
    s_href = scrapy.Field()
    s_cate = scrapy.Field()
    book_img = scrapy.Field()
    book_name = scrapy.Field()
    book_desc = scrapy.Field()
    book_price = scrapy.Field()
    book_author = scrapy.Field()
    book_publish_date = scrapy.Field()
    book_press = scrapy.Field()

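4. pipelines.py (pipeline file)

Note: items can be written to the database in two ways, asynchronously or synchronously. Both versions are shown below; the synchronous one is commented out.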
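The difference between the two modes can be sketched with the standard library alone. This is only an analogy under stated assumptions: `slow_insert` is a hypothetical stand-in for a blocking database write, and a `ThreadPoolExecutor` plays the role that Twisted's thread-backed connection pool plays in the asynchronous pipeline.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def slow_insert(row):
    """Hypothetical stand-in for a blocking database write."""
    time.sleep(0.05)
    return row


rows = list(range(8))

# Synchronous: each write blocks the caller until it has finished.
start = time.perf_counter()
sync_results = [slow_insert(r) for r in rows]
sync_elapsed = time.perf_counter() - start

# Asynchronous: writes are handed to worker threads, so several run at once
# and the caller is free to continue until it asks for the results.
start = time.perf_counter()
with ThreadPoolExecutor(max_workers=4) as pool:
    futures = [pool.submit(slow_insert, r) for r in rows]
    async_results = [f.result() for f in futures]
async_elapsed = time.perf_counter() - start

print(f"sync  {sync_elapsed:.2f}s")
print(f"async {async_elapsed:.2f}s")
```

In a crawler the gain is that slow database writes no longer stall the processing of further responses.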

# -*- coding: utf-8 -*-
# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html
import pymysql.cursors
from twisted.enterprise import adbapi


class ScrapyredisproPipeline(object):
    """
    Write items to MySQL asynchronously.
    """

    # Constructor: stores the connection pool built by from_settings
    def __init__(self, dbpool):
        self.dbpool = dbpool

    # Class method with a fixed name. Scrapy calls it to build the pipeline,
    # so it runs before __init__ and supplies the pool that __init__ stores.
    @classmethod
    def from_settings(cls, settings):
        # Collect the database connection parameters from settings into a dict
        dbparms = dict(
            host=settings["MYSQL_HOST"],
            port=settings["MYSQL_PORT"],
            db=settings["MYSQL_DBNAME"],
            user=settings["MYSQL_USER"],
            password=settings["MYSQL_PASSWORD"],
            # Matches the character set of the dangdang_content table
            charset="utf8mb4",
            # Cursor type
            cursorclass=pymysql.cursors.DictCursor,
            # Whether to use Unicode strings
            use_unicode=True
        )
        # Create the pool through Twisted. "pymysql" is the name of the database module:
        # adbapi.ConnectionPool imports it by name, so it is not passed as an object.
        dbpool = adbapi.ConnectionPool("pymysql", **dbparms)
        print("Connection pool created")
        return cls(dbpool)

    def process_item(self, item, spider):
        # Insert the item asynchronously through Twisted
        query = self.dbpool.runInteraction(self.do_insert, item)
        # item and spider are forwarded to handle_error; if they are not passed here,
        # handle_error must not declare them either
        query.addErrback(self.handle_error, item, spider)
        # Return the item so that later pipelines (such as RedisPipeline) receive it
        return item

    def do_insert(self, cursor, item):
        # Run the insert statement; no explicit commit is needed, Twisted commits automatically
        insert_sql = """
            insert into dangdang_content(b_cate, m_cate, s_href, s_cate, book_img, book_name, book_desc, book_price, book_author, book_publish_date, book_press) VALUES(%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s)
        """
        # The parameters must follow the column order of the statement above
        cursor.execute(insert_sql, (item["b_cate"][0], item["m_cate"], item["s_href"], item["s_cate"], item["book_img"], item["book_name"], item["book_desc"], item["book_price"], item["book_author"][0], item["book_publish_date"], item["book_press"]))
        print("------------------------------Row inserted")

    def handle_error(self, failure, item, spider):
        # Report a failed asynchronous insert
        if failure:
            print(failure)

# class MysqlTwistedPipeline(object):
#     """
#     Write items to MySQL synchronously.
#     """
#
#     # Constructor: stores the connection and cursor built by from_settings
#     def __init__(self, conn, cursor):
#         self.conn = conn
#         self.cursor = cursor
#
#     # Class method with a fixed name. Scrapy calls it to build the pipeline,
#     # so it runs before __init__ and supplies the objects that __init__ stores.
#     @classmethod
#     def from_settings(cls, settings):
#         # Collect the database connection parameters from settings into a dict
#         dbparms = dict(
#             host=settings["MYSQL_HOST"],
#             port=settings["MYSQL_PORT"],
#             db=settings["MYSQL_DBNAME"],
#             user=settings["MYSQL_USER"],
#             password=settings["MYSQL_PASSWORD"],
#             charset="utf8mb4",
#             # Cursor type
#             cursorclass=pymysql.cursors.DictCursor,
#             # Whether to use Unicode strings
#             use_unicode=True
#         )
#         conn = pymysql.connect(**dbparms)
#         cursor = conn.cursor()
#         return cls(conn, cursor)
#
#     def process_item(self, item, spider):
#         insert_sql = "insert into dangdang_content(b_cate, m_cate, s_href, s_cate, book_img, book_name, book_desc, book_price, book_author, book_publish_date, book_press) VALUES(%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s)"
#         # Pass the values as query parameters, in column order;
#         # str.format() does not fill %s placeholders
#         self.cursor.execute(insert_sql, (item["b_cate"][0], item["m_cate"], item["s_href"], item["s_cate"], item["book_img"], item["book_name"], item["book_desc"], item["book_price"], item["book_author"][0], item["book_publish_date"], item["book_press"]))
#         self.conn.commit()
#         print("------------------------------Row inserted")
#         return item
#
#     def handle_error(self, failure, item, spider):
#         # Carried over from the asynchronous version; unused here
#         if failure:
#             print(failure)
#
#     def close_spider(self, spider):
#         """
#         Clean up
#         :param spider:
#         :return:
#         """
#         self.cursor.close()
#         self.conn.close()
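In the insert statements above, the column list and the parameter tuple are written out separately, so the two can drift out of order without raising any error. One way to rule that out is to derive both from a single list of field names. The sketch below is a minimal illustration that uses the standard library's `sqlite3` with an in-memory database as a stand-in for MySQL (an assumption made so the example runs anywhere). The helpers `first_or_none`, `build_insert`, and `row_from_item`, and the sample item, are hypothetical.

```python
import sqlite3

# Single source of truth for both the column list and the parameter order
COLUMNS = [
    "b_cate", "m_cate", "s_href", "s_cate", "book_img", "book_name",
    "book_desc", "book_price", "book_author", "book_publish_date", "book_press",
]


def first_or_none(value):
    """Collapse a list of extracted strings to its first element; tolerate empty lists."""
    if isinstance(value, list):
        return value[0] if value else None
    return value


def build_insert(table, columns):
    """Build a parameterized INSERT (sqlite3 uses ?, pymysql would use %s)."""
    placeholders = ", ".join("?" for _ in columns)
    return f"INSERT INTO {table} ({', '.join(columns)}) VALUES ({placeholders})"


def row_from_item(item, columns):
    """Order the item's values exactly as the columns are listed."""
    return tuple(first_or_none(item.get(c)) for c in columns)


conn = sqlite3.connect(":memory:")
conn.execute(
    f"CREATE TABLE dangdang_content ({', '.join(c + ' TEXT' for c in COLUMNS)})"
)

# Hypothetical sample item shaped like the spider's output
item = {
    "b_cate": ["Books"], "m_cate": "Fiction", "s_cate": "Novels",
    "s_href": "http://example.com/cat", "book_img": "http://example.com/cover.jpg",
    "book_name": "Sample Title", "book_desc": None, "book_price": "10.00",
    "book_author": ["Author A", "Author B"], "book_publish_date": "2020-01-01",
    "book_press": "Sample Press",
}

conn.execute(build_insert("dangdang_content", COLUMNS), row_from_item(item, COLUMNS))
stored = conn.execute(
    "SELECT s_href, book_img, book_author FROM dangdang_content"
).fetchone()
print(stored)
```

The same helper also avoids an `IndexError` when a book has no author link, because an empty list becomes `None`.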

5. settings.py (configuration file)

# -*- coding: utf-8 -*-

# Scrapy settings for ScrapyRedisPro project

#

# For simplicity, this file contains only settings considered important or

# commonly used. You can find more settings consulting the documentation:

#

# https://doc.scrapy.org/en/latest/topics/settings.html

# https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

# https://doc.scrapy.org/en/latest/topics/spider-middleware.html

BOT_NAME = "ScrapyRedisPro"

SPIDER_MODULES = ["ScrapyRedisPro.spiders"]

NEWSPIDER_MODULE = "ScrapyRedisPro.spiders"

# Crawl responsibly by identifying yourself (and your website) on the user-agent

#USER_AGENT = "ScrapyRedisPro (+http://www.yourdomain.com)"

USER_AGENT = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/86.0.4240.198 Safari/537.36"  # present the crawler as a regular browser

# Obey robots.txt rules

ROBOTSTXT_OBEY = False  # do not obey robots.txt rules

# Only show log messages at this level or above

LOG_LEVEL = "ERROR"

# Configure maximum concurrent requests performed by Scrapy (default: 16)

#CONCURRENT_REQUESTS = 32

# Configure a delay for requests for the same website (default: 0)

# See https://doc.scrapy.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

#DOWNLOAD_DELAY = 3

# The download delay setting will honor only one of:

#CONCURRENT_REQUESTS_PER_DOMAIN = 16

#CONCURRENT_REQUESTS_PER_IP = 16

# Disable cookies (enabled by default)

#COOKIES_ENABLED = False

# Disable Telnet Console (enabled by default)

#TELNETCONSOLE_ENABLED = False

# Override the default request headers:

#DEFAULT_REQUEST_HEADERS = {

# "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",

# "Accept-Language": "en",

#}

# Enable or disable spider middlewares

# See https://doc.scrapy.org/en/latest/topics/spider-middleware.html

#SPIDER_MIDDLEWARES = {

# "ScrapyRedisPro.middlewares.ScrapyredisproSpiderMiddleware": 543,

#}

# Enable or disable downloader middlewares

# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

DOWNLOADER_MIDDLEWARES = {
    "ScrapyRedisPro.middlewares.ScrapyredisproDownloaderMiddleware": 543,
    "ScrapyRedisPro.middlewares.RandomUserAgent": 542,
}

# Enable or disable extensions

# See https://doc.scrapy.org/en/latest/topics/extensions.html

#EXTENSIONS = {

# "scrapy.extensions.telnet.TelnetConsole": None,

#}

# Configure item pipelines

# See https://doc.scrapy.org/en/latest/topics/item-pipeline.html

ITEM_PIPELINES = {
    "ScrapyRedisPro.pipelines.ScrapyredisproPipeline": 300,
    "scrapy_redis.pipelines.RedisPipeline": 400,
    # "ScrapyRedisPro.pipelines.MysqlTwistedPipeline": 301,
}

# Enable and configure the AutoThrottle extension (disabled by default)

# See https://doc.scrapy.org/en/latest/topics/autothrottle.html

#AUTOTHROTTLE_ENABLED = True

# The initial download delay

#AUTOTHROTTLE_START_DELAY = 5

# The maximum download delay to be set in case of high latencies

#AUTOTHROTTLE_MAX_DELAY = 60

# The average number of requests Scrapy should be sending in parallel to

# each remote server

#AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:

#AUTOTHROTTLE_DEBUG = False

# Enable and configure HTTP caching (disabled by default)

# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

#HTTPCACHE_ENABLED = True

#HTTPCACHE_EXPIRATION_SECS = 0

#HTTPCACHE_DIR = "httpcache"

#HTTPCACHE_IGNORE_HTTP_CODES = []

#HTTPCACHE_STORAGE = "scrapy.extensions.httpcache.FilesystemCacheStorage"

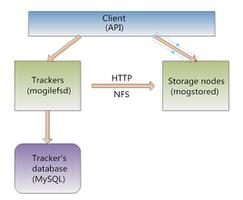

""" scrapy-redis配置 """

# Enables scheduling storing requests queue in redis.

# 使用scrapy-redis组件自己的调度器

SCHEDULER = "scrapy_redis.scheduler.Scheduler"

# 增加了一个去重容器类的配置, 作用使用Redis的set集合来存储请求的指纹数据, 从而实现请求去重的持久化

# Ensure all spiders share same duplicates filter through redis.

DUPEFILTER_CLASS = "scrapy_redis.dupefilter.RFPDupeFilter"

# 配置调度器是否要持久化, 也就是当爬虫结束了, 要不要清空Redis中请求队列和去重指纹的set。如果是True, 就表示要持久化存储, 就不清空数据, 否则清空数据

SCHEDULER_PERSIST = True # 为false Redis关闭了 Redis数据也会被清空

# redis的配置

REDIS_HOST = "127.0.0.1"

REDIS_PORT = 6379

REDIS_ENCODING ="utf8"

REDIS_PARAMS = {"password":"xhw123"}

MYSQL_HOST = "127.0.0.1"

MYSQL_PORT = 3306

MYSQL_DBNAME = "spidertest"

MYSQL_USER = "root"

MYSQL_PASSWORD = "xhw123"

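What makes the crawl incremental is the persistent set of request fingerprints: a request whose fingerprint is already in the set is skipped on later runs. Below is a minimal sketch of the idea, with an in-memory Python `set` standing in for the Redis set. The `fingerprint` function is a deliberate simplification that hashes only the method and URL, and the `SeenFilter` class and example URLs are hypothetical.

```python
import hashlib


def fingerprint(method, url):
    """Simplified request fingerprint: SHA-1 over the method and the URL."""
    return hashlib.sha1(f"{method.upper()} {url}".encode("utf-8")).hexdigest()


class SeenFilter:
    """In-memory stand-in for the Redis set that stores fingerprints."""

    def __init__(self):
        self._seen = set()

    def request_seen(self, method, url):
        """Return True if the request was seen before; otherwise record it."""
        fp = fingerprint(method, url)
        if fp in self._seen:
            return True
        self._seen.add(fp)
        return False


dupefilter = SeenFilter()

# First run: nothing has been seen yet, so every URL is crawled.
run1 = ["http://example.com/page/1", "http://example.com/page/2"]
first_run = [u for u in run1 if not dupefilter.request_seen("GET", u)]

# Second run with the same (persisted) filter: only the new URL is crawled.
run2 = ["http://example.com/page/1", "http://example.com/page/2", "http://example.com/page/3"]
second_run = [u for u in run2 if not dupefilter.request_seen("GET", u)]

print(first_run)
print(second_run)
```

With `SCHEDULER_PERSIST = True` the set survives between runs, which is what the second half of the sketch simulates by reusing the same filter object.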

Note: after starting the project, run the following command in the Redis client to push the start URL onto the list named by redis_key:

127.0.0.1:6379> lpush dangdang http://book.dangdang.com/

Source: utcz.com/z/537667.html
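The same seeding step can be done from Python instead of the Redis command line. The sketch below keeps the logic in a small hypothetical helper, `seed_start_urls`, that works with any client object offering an `lpush(key, *values)` method. A tiny `FakeRedis` class stands in for a real server so the example runs without one; with the redis-py package installed, a `redis.Redis(...)` client could be passed instead (see the comment at the end).

```python
def seed_start_urls(client, key, urls):
    """Push start URLs onto the Redis list that the spider reads from."""
    return client.lpush(key, *urls)


class FakeRedis:
    """Minimal in-memory stand-in implementing only lpush, for illustration."""

    def __init__(self):
        self.lists = {}

    def lpush(self, key, *values):
        items = self.lists.setdefault(key, [])
        for value in values:
            items.insert(0, value)  # LPUSH adds each value at the head of the list
        return len(items)           # like Redis, report the new list length


client = FakeRedis()
count = seed_start_urls(client, "dangdang", ["http://book.dangdang.com/"])
print(count)
print(client.lists["dangdang"])

# With a real server and the redis-py package, assuming the settings shown above:
#   import redis
#   client = redis.Redis(host="127.0.0.1", port=6379, password="xhw123")
#   seed_start_urls(client, "dangdang", ["http://book.dangdang.com/"])
```

The key must match the spider's `redis_key`, otherwise the spider keeps waiting for a start URL.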