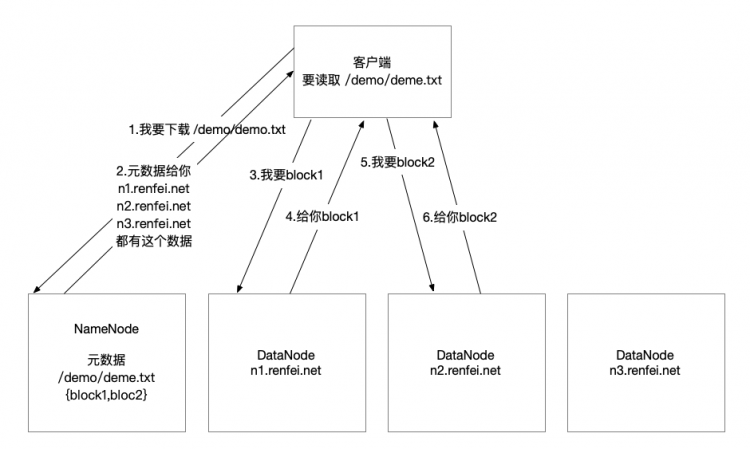

Hadoop 3 Self-Study Notes (3): Operating HDFS with Java

1. core-site.xml

```xml
<configuration>
    <property>
        <name>fs.defaultFS</name>
        <value>hdfs://192.168.3.61:9820</value>
    </property>
    <property>
        <name>hadoop.tmp.dir</name>
        <value>/opt/hadoopdata</value>
    </property>
</configuration>
```
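The Java client later in these notes connects to an explicit URI, and that URI has to match `fs.defaultFS`. Hadoop's own `Configuration` class normally loads `core-site.xml` from the classpath; the JDK-only sketch below is not Hadoop's parser. It simply reads the same `<property>` name/value pairs with `javax.xml` so you can check which host and port a client would target. The class name `CoreSiteReader` is hypothetical.

```java
import java.io.StringReader;
import java.net.URI;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;
import org.xml.sax.InputSource;

// Hypothetical helper: reads <name>/<value> pairs from a core-site.xml-style document.
// It only illustrates the file layout; it is not how Hadoop's Configuration parses it.
final class CoreSiteReader {

    static Map<String, String> readProperties(String xml) {
        try {
            Document doc = DocumentBuilderFactory.newInstance()
                    .newDocumentBuilder()
                    .parse(new InputSource(new StringReader(xml)));
            Map<String, String> props = new LinkedHashMap<>();
            NodeList nodes = doc.getElementsByTagName("property");
            for (int i = 0; i < nodes.getLength(); i++) {
                Element property = (Element) nodes.item(i);
                String name = property.getElementsByTagName("name").item(0).getTextContent().trim();
                String value = property.getElementsByTagName("value").item(0).getTextContent().trim();
                props.put(name, value);
            }
            return props;
        } catch (Exception e) {
            throw new IllegalArgumentException("Not a valid configuration document", e);
        }
    }

    public static void main(String[] args) {
        String xml = "<configuration>"
                + "<property><name>fs.defaultFS</name><value>hdfs://192.168.3.61:9820</value></property>"
                + "<property><name>hadoop.tmp.dir</name><value>/opt/hadoopdata</value></property>"
                + "</configuration>";
        URI defaultFs = URI.create(readProperties(xml).get("fs.defaultFS"));
        // Host and port the Java client should use in FileSystem.get(new URI(...), ...)
        System.out.println(defaultFs.getHost() + ":" + defaultFs.getPort());
    }
}
```

Running `main` prints `192.168.3.61:9820`, the same address used in the test code's `init()` method.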

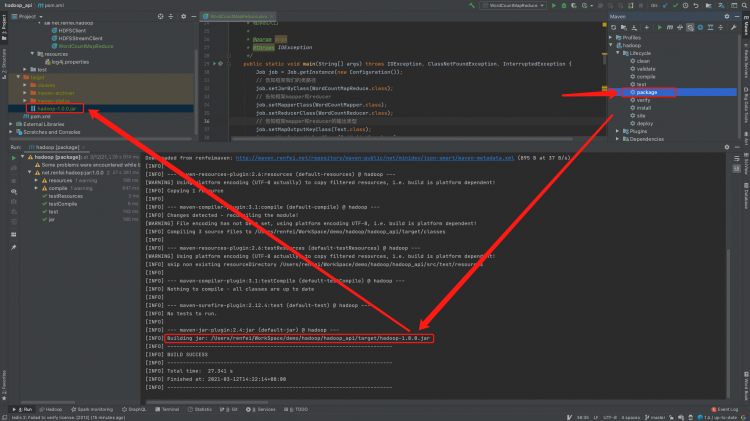

2. pom.xml

```xml
<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>com.qmkj</groupId>
    <artifactId>hdfsclienttest</artifactId>
    <version>0.1</version>

    <name>hdfsclienttest</name>
    <!-- FIXME change it to the project's website -->
    <url>http://www.example.com</url>

    <properties>
        <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
        <!-- Hadoop 3.x requires Java 8 or later -->
        <maven.compiler.source>1.8</maven.compiler.source>
        <maven.compiler.target>1.8</maven.compiler.target>
    </properties>

    <dependencies>
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>4.11</version>
            <scope>test</scope>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-hdfs-client -->
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-hdfs-client</artifactId>
            <version>3.2.1</version>
            <scope>provided</scope>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-common -->
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-common</artifactId>
            <version>3.2.1</version>
        </dependency>
        <!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-hdfs -->
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-hdfs</artifactId>
            <version>3.2.1</version>
        </dependency>
    </dependencies>

    <build>
        <pluginManagement><!-- lock down plugins versions to avoid using Maven defaults (may be moved to parent pom) -->
            <plugins>
                <!-- clean lifecycle, see https://maven.apache.org/ref/current/maven-core/lifecycles.html#clean_Lifecycle -->
                <plugin>
                    <artifactId>maven-clean-plugin</artifactId>
                    <version>3.1.0</version>
                </plugin>
                <!-- default lifecycle, jar packaging: see https://maven.apache.org/ref/current/maven-core/default-bindings.html#Plugin_bindings_for_jar_packaging -->
                <plugin>
                    <artifactId>maven-resources-plugin</artifactId>
                    <version>3.0.2</version>
                </plugin>
                <plugin>
                    <artifactId>maven-compiler-plugin</artifactId>
                    <version>3.8.0</version>
                </plugin>
                <plugin>
                    <artifactId>maven-surefire-plugin</artifactId>
                    <version>2.22.1</version>
                </plugin>
                <plugin>
                    <artifactId>maven-jar-plugin</artifactId>
                    <version>3.0.2</version>
                </plugin>
                <plugin>
                    <artifactId>maven-install-plugin</artifactId>
                    <version>2.5.2</version>
                </plugin>
                <plugin>
                    <artifactId>maven-deploy-plugin</artifactId>
                    <version>2.8.2</version>
                </plugin>
                <!-- site lifecycle, see https://maven.apache.org/ref/current/maven-core/lifecycles.html#site_Lifecycle -->
                <plugin>
                    <artifactId>maven-site-plugin</artifactId>
                    <version>3.7.1</version>
                </plugin>
                <plugin>
                    <artifactId>maven-project-info-reports-plugin</artifactId>
                    <version>3.0.0</version>
                </plugin>
            </plugins>
        </pluginManagement>
    </build>
</project>
```
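Hadoop 3.x requires Java 8 or later, so the JDK that compiles and runs these tests, and the `maven.compiler.source`/`target` settings, should be 1.8 or newer. A hypothetical JDK-only guard like the one below could be called from a test setup method to fail fast on an older JVM. It reads the standard `java.specification.version` system property, which is `1.8` on Java 8 and `11`, `17`, and so on for later releases. The class name `JavaVersionCheck` is an assumption for illustration.

```java
// Hypothetical guard: Hadoop 3.x requires Java 8 or newer.
final class JavaVersionCheck {

    // Converts the java.specification.version format to a major version: "1.8" -> 8, "11" -> 11.
    static int majorVersion(String spec) {
        String[] parts = spec.split("\\.");
        int first = Integer.parseInt(parts[0]);
        return (first == 1 && parts.length > 1) ? Integer.parseInt(parts[1]) : first;
    }

    // Throws if the running JVM is older than the given major version.
    static void requireAtLeast(int minimum) {
        int actual = majorVersion(System.getProperty("java.specification.version"));
        if (actual < minimum) {
            throw new IllegalStateException(
                    "Java " + minimum + "+ required, running on Java " + actual);
        }
    }

    public static void main(String[] args) {
        requireAtLeast(8);
        System.out.println("Java version OK for Hadoop 3.x");
    }
}
```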

3. Test code

```java
package com.qmkj;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.*;
import org.junit.After;
import org.junit.Before;
import org.junit.Test;

import java.io.FileNotFoundException;
import java.io.IOException;
import java.net.URI;

/**
 * Unit test for simple App.
 */
public class AppTest {

    FileSystem fs = null;

    @Before
    public void init() throws Exception {
        Configuration conf = new Configuration();
        // Note: this URI must match the fs.defaultFS address configured in core-site.xml
        fs = FileSystem.get(new URI("hdfs://192.168.3.61:9820"), conf, "root");
    }

    @After
    public void tearDown() throws IOException {
        // Close the file system after every test, so no test has to do it itself
        if (fs != null) {
            fs.close();
        }
    }

    /**
     * Upload a local file to HDFS
     */
    @Test
    public void testAdd() throws Exception {
        // "D:\KK_Movies\..." is not a valid Java string literal (\K is an illegal escape);
        // forward slashes work for Windows paths in Hadoop's Path
        fs.copyFromLocalFile(new Path("D:/KK_Movies/kk 2020-02-20 20-45-55.mp4"), new Path("/zhanglei"));
    }

    /**
     * Copy a file from HDFS to the local file system
     *
     * @throws IOException
     * @throws IllegalArgumentException
     */
    @Test
    public void testDownloadFileToLocal() throws IllegalArgumentException, IOException {
        // fs.copyToLocalFile(new Path("/mysql-connector-java-5.1.28.jar"), new Path("d:/"));
        // delSrc = false keeps the HDFS copy; useRawLocalFileSystem = true skips writing a local .crc file
        fs.copyToLocalFile(false, new Path("test.txt"), new Path("e:/"), true);
    }

    /**
     * Directory operations
     *
     * @throws IllegalArgumentException
     * @throws IOException
     */
    @Test
    public void testMkdirAndDeleteAndRename() throws IllegalArgumentException, IOException {
        // Create a directory (missing parent directories are created too)
        fs.mkdirs(new Path("/zhanglei/b1/c1"));
        // Delete a directory; for a non-empty directory the second argument must be true,
        // which deletes all of its subdirectories recursively
        fs.delete(new Path("/b1"), true);
        // Rename a file or directory
        fs.rename(new Path("/zhanglei"), new Path("/qmkj"));
    }

    /**
     * List directory contents, showing files only
     *
     * @throws IOException
     * @throws IllegalArgumentException
     * @throws FileNotFoundException
     */
    @Test
    public void testListFiles() throws FileNotFoundException, IllegalArgumentException, IOException {
        // true = recurse into subdirectories; only files are returned, never directories
        RemoteIterator<LocatedFileStatus> listFiles = fs.listFiles(new Path("/"), true);
        while (listFiles.hasNext()) {
            LocatedFileStatus fileStatus = listFiles.next();
            System.out.println(fileStatus.getPath().getName());
            System.out.println(fileStatus.getBlockSize());
            System.out.println(fileStatus.getPermission());
            System.out.println(fileStatus.getLen());
            // Each block reports its length, offset and the hosts holding a replica
            BlockLocation[] blockLocations = fileStatus.getBlockLocations();
            for (BlockLocation bl : blockLocations) {
                System.out.println("block-length:" + bl.getLength() + "--" + "block-offset:" + bl.getOffset());
                String[] hosts = bl.getHosts();
                for (String host : hosts) {
                    System.out.println(host);
                }
            }
            System.out.println("-------------- separator --------------");
        }
    }

    /**
     * List both files and directories
     *
     * @throws IOException
     * @throws IllegalArgumentException
     * @throws FileNotFoundException
     */
    @Test
    public void testListAll() throws FileNotFoundException, IllegalArgumentException, IOException {
        // Right-click the method name and choose Run to try it out.
        // listStatus is not recursive: it returns only the direct children of "/"
        FileStatus[] listStatus = fs.listStatus(new Path("/"));
        for (FileStatus fstatus : listStatus) {
            String flag = fstatus.isFile() ? "f-- " : "d-- ";
            System.out.println(flag + fstatus.getPath().getName());
            System.out.println(fstatus.getPermission());
        }
    }
}
```
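In `testListFiles`, the values printed by `getLen()`, `getBlockSize()` and each `BlockLocation` are related in a simple way: a file is cut into fixed-size blocks and only the last block may be shorter. The JDK-only sketch below computes the offset/length pairs you would expect for a file of a given length, assuming the whole file was written with a single block size (the HDFS default for `dfs.blocksize` is 128 MB). The class `BlockLayout` is hypothetical; it does not contact a cluster and does not model which hosts hold the replicas.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical model of how a file of a given length is split into HDFS blocks.
final class BlockLayout {

    // Returns {offset, length} pairs; every block is blockSize bytes except possibly the last.
    static List<long[]> blocks(long fileLength, long blockSize) {
        if (blockSize <= 0) {
            throw new IllegalArgumentException("blockSize must be positive");
        }
        List<long[]> result = new ArrayList<>();
        for (long offset = 0; offset < fileLength; offset += blockSize) {
            result.add(new long[] {offset, Math.min(blockSize, fileLength - offset)});
        }
        return result;
    }

    public static void main(String[] args) {
        long mb = 1024L * 1024L;
        // A 300 MB file with 128 MB blocks -> blocks of 128 MB, 128 MB and 44 MB
        for (long[] b : blocks(300 * mb, 128 * mb)) {
            System.out.println("block-length:" + b[1] + "--" + "block-offset:" + b[0]);
        }
    }
}
```

The output uses the same `block-length:...--block-offset:...` format as the test, which makes it easy to compare with what the cluster actually reports.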

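Note on testDownloadFileToLocal: this test only works if Hadoop is also installed on the local machine (on Windows this usually means HADOOP_HOME pointing to a directory whose bin folder contains winutils.exe).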
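`testDownloadFileToLocal` passes the relative path `test.txt`. HDFS resolves relative paths against the client's working directory, which by default is the user's home directory `/user/<name>`; with the user `root` from `init()`, the file that is read is `/user/root/test.txt`. The JDK-only sketch below reproduces that resolution with `java.net.URI`; it is a hypothetical illustration, not Hadoop's own `Path` code, and the class name `HdfsPathResolver` is an assumption.

```java
import java.net.URI;

// Hypothetical illustration of resolving an HDFS path against the default home directory.
final class HdfsPathResolver {

    static URI resolve(String defaultFs, String user, String path) {
        // Home directory convention /user/<user>/ (trailing slash so resolve() appends to it)
        URI home = URI.create(defaultFs + "/user/" + user + "/");
        return home.resolve(path);
    }

    public static void main(String[] args) {
        // Relative path: lands under the home directory
        System.out.println(resolve("hdfs://192.168.3.61:9820", "root", "test.txt"));
        // Absolute path: the home directory is ignored
        System.out.println(resolve("hdfs://192.168.3.61:9820", "root", "/zhanglei"));
    }
}
```

If the download fails with a "file not found" error, checking whether `test.txt` really sits under `/user/root` is a quick first step.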


Source: utcz.com/z/532363.html