Automated deployment with git + jenkins + harbor + k8s (kubernetes)

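System environment:

CentOS 7

git: gitee.com (any Git server will do)

jenkins: LTS release, installed directly on a server rather than inside the k8s cluster

harbor: offline installer, used to store Docker images

The Kubernetes cluster was built with kubeadm for convenience. It has three nodes and runs kube-proxy in IPVS mode. The servers are used as follows: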

| HOSTNAME | IP address | Role |
| --- | --- | --- |
| master.test.cn | 192.168.184.31 | k8s-master |
| node1.test.cn | 192.168.184.32 | k8s-node1 |
| node2.test.cn | 192.168.184.33 | k8s-node2 |
| soft.test.cn | 192.168.184.34 | harbor, jenkins |

1. Kubernetes setup

This section follows: https://www.cnblogs.com/lovesKey/p/10888006.html

1.1 System configuration

First add all four hosts to /etc/hosts on every machine:

[root@master ~]# cat /etc/hosts
192.168.184.31 master.test.cn
192.168.184.32 node1.test.cn fanli.test.cn
192.168.184.33 node2.test.cn
192.168.184.34 soft.test.cn jenkins.test.cn harbor.test.cn
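Adding the entries by hand on four machines is easy to get wrong, so here is a minimal sketch of an idempotent helper. The function name `add_host_entry` is an assumption for illustration and is not part of the original setup; it only appends a line when the IP is not yet present in the file.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not from the original article):
# append "IP name [name...]" to a hosts file unless that IP is already listed.
add_host_entry() {
    local hosts_file="$1" ip="$2"
    shift 2
    # -F: treat the IP as a fixed string; -w: whole-word match,
    # so 192.168.184.3 does not match 192.168.184.31.
    if ! grep -qwF -- "$ip" "$hosts_file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$*" >> "$hosts_file"
    fi
}
```

It could be called as `add_host_entry /etc/hosts 192.168.184.32 node1.test.cn fanli.test.cn`; running it twice leaves a single entry.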

Disable swap:

Temporarily:

swapoff -a

Permanently (delete or comment out the swap line, then reboot):

vim /etc/fstab
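If you prefer not to edit the file interactively, the same change can be sketched with sed. The helper name `disable_swap_in_fstab` is hypothetical, and the pattern assumes the usual fstab layout in which the word swap appears as a whitespace-separated field.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not from the original article):
# comment out every active swap entry in an fstab-style file.
disable_swap_in_fstab() {
    local fstab="${1:-/etc/fstab}"
    # Only touch lines that are not already comments and that contain
    # "swap" as its own field; the untouched original is kept as <file>.bak.
    sed -i.bak -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$fstab"
}
```

Running it a second time changes nothing, because commented lines no longer match.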

Stop and disable the firewall on every host:

systemctl stop firewalld
systemctl disable firewalld

Disable SELinux:

sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

Reboot for the SELinux change to take effect.

Pass bridged IPv4 traffic to the iptables chains, then apply the settings:

cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
sysctl --system

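1.2 Prerequisites for IPVS mode in kube-proxy

IPVS is already part of the mainline kernel, so enabling it for kube-proxy only requires loading the following kernel modules:

ip_vs

ip_vs_rr

ip_vs_wrr

ip_vs_sh

nf_conntrack_ipv4

Run the following script on all Kubernetes nodes (master, node1 and node2):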

cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack_ipv4
EOF
chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4

The output shows that the modules are loaded:

nf_conntrack_ipv4      15053  5
nf_defrag_ipv4         12729  1 nf_conntrack_ipv4
ip_vs_sh               12688  0
ip_vs_wrr              12697  0
ip_vs_rr               12600  16
ip_vs                 145497  22 ip_vs_rr,ip_vs_sh,ip_vs_wrr
nf_conntrack          139264  9 ip_vs,nf_nat,nf_nat_ipv4,nf_nat_ipv6,xt_conntrack,nf_nat_masquerade_ipv4,nf_conntrack_netlink,nf_conntrack_ipv4,nf_conntrack_ipv6
libcrc32c              12644  4 xfs,ip_vs,nf_nat,nf_conntrack

The script creates /etc/sysconfig/modules/ipvs.modules so that the required modules are loaded again after a node reboots. Use lsmod | grep -e ip_vs -e nf_conntrack_ipv4 to check that they are loaded.
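Reading the lsmod output by eye works for three nodes, but the check can also be scripted. The sketch below is an illustration only: `missing_ipvs_modules` is a hypothetical name, and the module list is the one given above for the CentOS 7 kernel (on kernels 4.19 and later nf_conntrack_ipv4 was merged into nf_conntrack, so the list would need adjusting).

```shell
#!/usr/bin/env bash
# Hypothetical helper (not from the original article):
# read `lsmod` output on stdin and print each required module that is missing.
missing_ipvs_modules() {
    local loaded mod
    loaded="$(awk '{print $1}')"   # first column of lsmod is the module name
    for mod in ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4; do
        # -x: the whole line must equal the module name
        printf '%s\n' "$loaded" | grep -qx -- "$mod" || echo "$mod"
    done
}
```

On a node it would be used as `lsmod | missing_ipvs_modules`; empty output means every module in the list is loaded.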

Install the ipset package on all nodes. To make the IPVS rules easier to inspect, also install ipvsadm (optional):

yum install ipset ipvsadm

1.3 Installing Docker (all nodes)

Configure a Docker mirror inside China (Aliyun):

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

Install the latest docker-ce:

yum -y install docker-ce
systemctl enable docker && systemctl start docker
docker --version

Configure the Kubernetes repository (Aliyun):

cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

Import the GPG keys manually, or skip the check by setting gpgcheck=0 in the repo file:

rpmkeys --import https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
rpmkeys --import https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

Install kubeadm, kubelet and kubectl:

yum install -y kubelet kubeadm kubectl
systemctl enable kubelet
systemctl start kubelet

1.4 Deploying Kubernetes

Initialize the master:

kubeadm init --apiserver-advertise-address=192.168.184.31 --image-repository lank8s.cn --kubernetes-version v1.18.6 --pod-network-cidr=10.244.0.0/16

Pay attention to the output:

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.184.31:6443 --token a9vg9z.dlboqvfuwwzauufq \
    --discovery-token-ca-cert-hash sha256:c2ade88a856f15de80240ff4994661a6daa668113cea0c4a4073f701f05192cb

On the master node, run the commands below to set up the current user's configuration, which kubectl needs:

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

Install the pod network add-on:

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

On each worker node, run the join command printed by kubeadm init to add it to the cluster:

kubeadm join 192.168.184.31:6443 --token a9vg9z.dlboqvfuwwzauufq --discovery-token-ca-cert-hash sha256:c2ade88a856f15de80240ff4994661a6daa668113cea0c4a4073f701f05192cb

Use kubectl get po -A -o wide to confirm that every Pod is in the Running state.

[root@master ~]# kubectl get po -A -o wide
NAMESPACE     NAME                                     READY   STATUS    RESTARTS   AGE   IP               NODE             NOMINATED NODE   READINESS GATES
kube-system   coredns-5c579bbb7b-flzkd                 1/1     Running   5          2d    10.244.1.14      node1.test.cn    <none>           <none>
kube-system   coredns-5c579bbb7b-qz5m8                 1/1     Running   4          2d    10.244.2.9       node2.test.cn    <none>           <none>
kube-system   etcd-master.test.cn                      1/1     Running   5          2d    192.168.184.31   master.test.cn   <none>           <none>
kube-system   kube-apiserver-master.test.cn            1/1     Running   5          2d    192.168.184.31   master.test.cn   <none>           <none>
kube-system   kube-controller-manager-master.test.cn   1/1     Running   5          2d    192.168.184.31   master.test.cn   <none>           <none>
kube-system   kube-flannel-ds-amd64-bhmps              1/1     Running   6          2d    192.168.184.33   node2.test.cn    <none>           <none>
kube-system   kube-flannel-ds-amd64-mbpvb              1/1     Running   6          2d    192.168.184.32   node1.test.cn    <none>           <none>
kube-system   kube-flannel-ds-amd64-xnw2l              1/1     Running   6          2d    192.168.184.31   master.test.cn   <none>           <none>
kube-system   kube-proxy-8nkgs                         1/1     Running   6          2d    192.168.184.32   node1.test.cn    <none>           <none>
kube-system   kube-proxy-jxtfk                         1/1     Running   4          2d    192.168.184.31   master.test.cn   <none>           <none>
kube-system   kube-proxy-ls7xg                         1/1     Running   4          2d    192.168.184.33   node2.test.cn    <none>           <none>
kube-system   kube-scheduler-master.test.cn            1/1     Running   4          2d    192.168.184.31   master.test.cn   <none>           <none>

Enabling IPVS in kube-proxy

# Edit config.conf in the kube-system/kube-proxy ConfigMap: change mode: "" to mode: "ipvs", then save and exit
[root@k8smaster centos]# kubectl edit cm kube-proxy -n kube-system
configmap/kube-proxy edited
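The interactive edit can also be expressed as a text filter, which is easier to repeat. This is a sketch under assumptions: `set_ipvs_mode` is a hypothetical name, and it assumes the ConfigMap still contains the default empty `mode: ""` line.

```shell
#!/usr/bin/env bash
# Hypothetical filter (not from the original article):
# rewrite an empty kube-proxy `mode: ""` to `mode: "ipvs"` in text read from stdin.
set_ipvs_mode() {
    # Only lines consisting of "mode:" followed by an empty string are changed;
    # the original indentation is preserved through the capture group.
    sed -E 's/^([[:space:]]*mode:)[[:space:]]*""[[:space:]]*$/\1 "ipvs"/'
}
```

One way to apply it would be `kubectl get cm kube-proxy -n kube-system -o yaml | set_ipvs_mode | kubectl apply -f -`. The kube-proxy pods still have to be recreated afterwards for the change to take effect.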

### Delete the existing kube-proxy pods
[root@master centos]# kubectl get pod -n kube-system | grep kube-proxy | awk '{system("kubectl delete pod "$1" -n kube-system")}'
pod "kube-proxy-2m5jh" deleted
pod "kube-proxy-nfzfl" deleted
pod "kube-proxy-shxdt" deleted

# Check that the new kube-proxy pods are running
[root@master centos]# kubectl get pod -n kube-system | grep kube-proxy
kube-proxy-54qnw   1/1   Running   0   24s
kube-proxy-bzssq   1/1   Running   0   14s
kube-proxy-cvlcm   1/1   Running   0   37s

# Check the logs: the line `Using ipvs Proxier.` means IPVS mode is active in kube-proxy
[root@master centos]# kubectl logs kube-proxy-54qnw -n kube-system
I0518 20:24:09.319160       1 server_others.go:176] Using ipvs Proxier.
W0518 20:24:09.319751       1 proxier.go:386] IPVS scheduler not specified, use rr by default
I0518 20:24:09.320035       1 server.go:562] Version: v1.14.2
I0518 20:24:09.334372       1 conntrack.go:52] Setting nf_conntrack_max to 131072
I0518 20:24:09.334853       1 config.go:102] Starting endpoints config controller
I0518 20:24:09.334916       1 controller_utils.go:1027] Waiting for caches to sync for endpoints config controller
I0518 20:24:09.334945       1 config.go:202] Starting service config controller
I0518 20:24:09.334976       1 controller_utils.go:1027] Waiting for caches to sync for service config controller
I0518 20:24:09.435153       1 controller_utils.go:1034] Caches are synced for service config controller
I0518 20:24:09.435271       1 controller_utils.go:1034] Caches are synced for endpoints config controller

Check that the nodes are Ready:

[root@master ~]# kubectl get node
NAME             STATUS   ROLES    AGE   VERSION
master.test.cn   Ready    master   2d    v1.18.6
node1.test.cn    Ready    <none>   2d    v1.18.6
node2.test.cn    Ready    <none>   2d    v1.18.6

The kubeadm-based K8S cluster with IPVS is now installed.

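2. Installing harbor

2.1 Preparation

harbor is started through docker-compose, so first install docker-compose on the soft.test.cn node and make it executable:

curl -L https://github.com/docker/compose/releases/download/1.26.1/docker-compose-`uname -s`-`uname -m` > /usr/local/bin/docker-compose
chmod +x /usr/local/bin/docker-compose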

2.2 Downloading Harbor

Harbor releases: https://github.com/goharbor/harbor/releases

Then follow the official installation guide: https://github.com/goharbor/harbor/blob/master/docs/install-config/_index.md

The settings below use my own domain. If you follow along, replace harbor.test.cn with your own domain.

Download the offline installer:

wget https://github.com/goharbor/harbor/releases/download/v1.10.4/harbor-offline-installer-v1.10.4.tgz

Unpack it:

[root@soft ~]# tar zxvf harbor-offline-installer-v1.10.4.tgz

2.3 Setting up HTTPS

Generate the CA certificate private key:

openssl genrsa -out ca.key 4096

Generate the CA certificate:

openssl req -x509 -new -nodes -sha512 -days 3650 -subj "/C=CN/ST=Beijing/L=Beijing/O=example/OU=Personal/CN=harbor.test.cn" -key ca.key -out ca.crt

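2.4 Generating the server certificate

A certificate usually consists of a .crt file and a .key file, for example yourdomain.com.crt and yourdomain.com.key.

Generate the private key:

openssl genrsa -out harbor.test.cn.key 4096

Generate the certificate signing request (CSR)

Adjust the values in the -subj option to reflect your organization. If you connect to the Harbor host by FQDN, you must specify it as the common name (CN) attribute and use it in the key and CSR file names.

openssl req -sha512 -new -subj "/C=CN/ST=Beijing/L=Beijing/O=example/OU=Personal/CN=harbor.test.cn" -key harbor.test.cn.key -out harbor.test.cn.csr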

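Generate the x509 v3 extension file

Whether you connect to the Harbor host by FQDN or by IP address, you must create this file so that the certificate generated for the Harbor host satisfies the Subject Alternative Name (SAN) and x509 v3 extension requirements. Replace the DNS entries to reflect your domain.

cat > v3.ext <<-EOF
authorityKeyIdentifier=keyid,issuer
basicConstraints=CA:FALSE
keyUsage = digitalSignature, nonRepudiation, keyEncipherment, dataEncipherment
extendedKeyUsage = serverAuth
subjectAltName = @alt_names

[alt_names]
DNS.1=test.cn
DNS.2=test
DNS.3=harbor.test.cn
EOF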

Use this file to generate the certificate for the Harbor host

Replace yourdomain.com in the CSR and CRT file names with the Harbor host name.

openssl x509 -req -sha512 -days 3650 -extfile v3.ext -CA ca.crt -CAkey ca.key -CAcreateserial -in harbor.test.cn.csr -out harbor.test.cn.crt

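2.5 Providing the certificates to harbor and docker

After generating ca.crt, harbor.test.cn.crt and harbor.test.cn.key, you must hand them to harbor and docker and reconfigure harbor to use them.

Copy the server certificate and key into the certificate folder on the Harbor host:

cp harbor.test.cn.crt /data/cert/
cp harbor.test.cn.key /data/cert/

Convert harbor.test.cn.crt to harbor.test.cn.cert for Docker to use.

The Docker daemon interprets .crt files as CA certificates and .cert files as client certificates.

openssl x509 -inform PEM -in harbor.test.cn.crt -out harbor.test.cn.cert

Copy the server certificate, key and CA file into the Docker certificate folder on the Harbor host. The folder must be created first.

mkdir -p /etc/docker/certs.d/harbor.test.cn/
cp harbor.test.cn.cert /etc/docker/certs.d/harbor.test.cn/
cp harbor.test.cn.key /etc/docker/certs.d/harbor.test.cn/
cp ca.crt /etc/docker/certs.d/harbor.test.cn/

Restart docker:

systemctl restart docker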

2.6 Deploying harbor

Edit the configuration file harbor.yml.

Two things need to change:

1. Set hostname.

2. Set the paths of certificate and private key.
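The two changes can be sketched as a small sed helper. This is an illustration, not the author's procedure: the function name `configure_harbor_yml` is hypothetical, and it assumes harbor.yml uses the top-level `hostname:` key and the `certificate:` and `private_key:` keys under `https:`, as in the template shipped with the 1.10 offline installer.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not from the original article):
# set hostname and TLS file paths in a harbor.yml-style file.
configure_harbor_yml() {
    local file="$1" host="$2" cert="$3" key="$4"
    # "|" is used as the sed delimiter because the paths contain "/".
    # The unmodified file is kept as <file>.bak.
    sed -i.bak -E \
        -e "s|^hostname:.*|hostname: ${host}|" \
        -e "s|^([[:space:]]*certificate:).*|\1 ${cert}|" \
        -e "s|^([[:space:]]*private_key:).*|\1 ${key}|" \
        "$file"
}
```

With the files from section 2.5 it could be called as `configure_harbor_yml harbor.yml harbor.test.cn /data/cert/harbor.test.cn.crt /data/cert/harbor.test.cn.key`.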

Run the prepare script to enable HTTPS:

[root@soft ~]# cd harbor
[root@soft harbor]# ./prepare

When it finishes, run install.sh:

[root@soft harbor]# ./install.sh

Useful harbor commands

Check the result: every container is Up, and ports 80 and 443 are mapped.

[root@soft harbor]# docker-compose ps
Name                Command                          State          Ports
---------------------------------------------------------------------------------------------------------------
harbor-core         /harbor/harbor_core              Up (healthy)
harbor-db           /docker-entrypoint.sh            Up (healthy)   5432/tcp
harbor-jobservice   /harbor/harbor_jobservice ...    Up (healthy)
harbor-log          /bin/sh -c /usr/local/bin/ ...   Up (healthy)   127.0.0.1:1514->10514/tcp
harbor-portal       nginx -g daemon off;             Up (healthy)   8080/tcp
nginx               nginx -g daemon off;             Up (healthy)   0.0.0.0:80->8080/tcp, 0.0.0.0:443->8443/tcp
redis               redis-server /etc/redis.conf     Up (healthy)   6379/tcp
registry            /home/harbor/entrypoint.sh       Up (healthy)   5000/tcp
registryctl         /home/harbor/start.sh            Up (healthy)

Stop harbor:

[root@soft harbor]# docker-compose down -v
Stopping nginx             ... done
Stopping harbor-jobservice ... done
Stopping harbor-core       ... done
Stopping harbor-portal     ... done
Stopping redis             ... done
Stopping registryctl       ... done
Stopping harbor-db         ... done
Stopping registry          ... done
Stopping harbor-log        ... done
Removing nginx             ... done
Removing harbor-jobservice ... done
Removing harbor-core       ... done
Removing harbor-portal     ... done
Removing redis             ... done
Removing registryctl       ... done
Removing harbor-db         ... done
Removing registry          ... done
Removing harbor-log        ... done
Removing network harbor_harbor

Start harbor:

[root@soft harbor]# docker-compose up -d
Creating network "harbor_harbor" with the default driver
Creating harbor-log        ... done
Creating registry          ... done
Creating harbor-db         ... done
Creating redis             ... done
Creating harbor-portal     ... done
Creating registryctl       ... done
Creating harbor-core       ... done
Creating nginx             ... done
Creating harbor-jobservice ... done

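2.7 Login test

Log in from a browser at https://harbor.test.cn (the client machine needs a hosts entry). The account is admin and the password is Harbor12345.

Create a jenkins user for jenkins to use.

Create a jenkins project for the later jenkins deployments.

Add the jenkins user as a member of the project.

On every server that needs to push images, add the registry to Docker's insecure-registries list; otherwise the default HTTPS connection fails. The example here was a sonarqube image I had modified, hosted on harbor.test.cn.

vim /etc/docker/daemon.json

Add:

{
  "insecure-registries": ["harbor.test.cn"]
}

Restart Docker for the change to take effect:

systemctl restart docker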

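3. Jenkins

Installation

Jenkins website: https://www.jenkins.io/zh/

Packages exist for different systems, and you can install either the LTS (long-term support) release or the weekly release. I chose the LTS release:

[root@soft ~]# wget https://mirrors.tuna.tsinghua.edu.cn/jenkins/redhat-stable/jenkins-2.235.2-1.1.noarch.rpm

Install the JDK:

[root@soft harbor]# yum install -y java-1.8.0-openjdk

Install jenkins:

[root@soft harbor]# yum localinstall -y jenkins-2.235.2-1.1.noarch.rpm

Then enable jenkins at boot and start it:

[root@soft harbor]# systemctl enable jenkins
[root@soft harbor]# systemctl start jenkins

Open the jenkins page at http://192.168.184.34:8080/; jenkins is ready to be initialized.

Read the initial password from the path shown on the page.

Choose how to install plugins: the first option installs the default set, the second lets you pick manually. I chose the default.

After the plugins are installed, create a new user.

Next install the CloudBees Docker Build and Publish plugin.

Once it is installed, create a new item.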

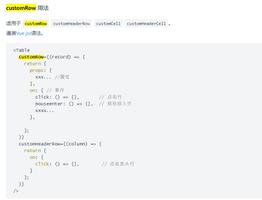

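Under Source Code Management choose whatever you use: SVN if you use SVN, Git if you use Git. I used my own Django test project hosted on gitee. The branch defaults to master; I set it to develop. Fill in whatever fits your repository.

Note: the Dockerfile must sit at the top level of the repository alongside the code, otherwise the docker build and publish plugin will not work.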

Dockerfile

FROM python:3.6-alpine
ENV PYTHONUNBUFFERED 1
WORKDIR /app
RUN pip install django -i https://pypi.douban.com/simple
COPY . /app
CMD python /app/manage.py runserver 0.0.0.0:8000

Then, under Build, choose docker build and publish.

Give /var/run/docker.sock on soft.test.cn mode 666:

[root@soft harbor]# chmod 666 /var/run/docker.sock

Add another build step and choose execute shell.

The command below runs kubectl on the master host to deploy the pods:

ssh 192.168.184.31 "cd /data/jenkins/${JOB_NAME} && sh rollout.sh ${BUILD_NUMBER}"

My script looks like this:

[root@master fanli_admin]# cat rollout.sh
#!/bin/bash
workdir="/data/jenkins/fanli_admin"
project="fanli_admin"
job_number=$(date +%s)
cd ${workdir}
# current image tag: the text after the last ":" on the image line
oldversion=$(cat ${project}_delpoyment.yaml | grep "image:" | awk -F ":" '{print $NF}')
newversion=$1
echo "old version is: "${oldversion}
echo "new version is: "${newversion}
## replace the image version (a timestamped .bak copy is kept)
sed -i.bak${job_number} "s/""${oldversion}""/""${newversion}""/g" ${project}_delpoyment.yaml
## roll out the new version
kubectl apply -f ${project}_delpoyment.yaml --record=true
[root@master fanli_admin]#
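The sed call in rollout.sh replaces the old tag wherever that text occurs in the manifest, so a tag such as 12 would also rewrite an unrelated value like failureThreshold: 12. Below is a minimal sketch of a narrower replacement that only edits the image line. The function names `current_image_tag` and `set_image_tag` are hypothetical, and the sketch assumes a single image line of the form `image: registry/project/name:tag`.

```shell
#!/usr/bin/env bash
# Hypothetical helpers (not from the original article).

# Print the tag after the last ":" on the "image:" line of a manifest.
current_image_tag() {
    grep 'image:' "$1" | awk -F ':' '{print $NF}'
}

# Replace only the tag on the "image:" line; other lines are left untouched.
set_image_tag() {
    local manifest="$1" newtag="$2"
    # ":[^:/]*$" matches the final ":tag" and cannot match the registry path,
    # because the path contains "/". The original is kept as <manifest>.bak.
    sed -i.bak -E '/image:/ s|:[^:/]*$|:'"${newtag}"'|' "$manifest"
}
```

In rollout.sh this would stand in for the sed line, for example `set_image_tag ${project}_delpoyment.yaml "$1"`.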

This requires passwordless SSH from the root user on soft.test.cn to the root user on master.test.cn:

[root@soft harbor]# ssh-keygen   # generate a key pair
[root@soft harbor]# ssh-copy-id 192.168.184.31   # copy the public key over

Click Save.

Run jenkins as root:

[root@soft harbor]# vim /etc/sysconfig/jenkins

Change JENKINS_USER="jenkins" to JENKINS_USER="root".

Restart jenkins:

[root@soft harbor]# systemctl restart jenkins

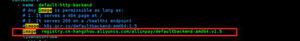

4. Configuring K8S and deploying the Jenkins project

Let k8s log in to harbor

Create the secret:

kubectl create secret docker-registry harbor-login-registry --docker-server=harbor.test.cn --docker-username=jenkins --docker-password=123456

Deploy the ingress controller

On the master.test.cn host:

kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v0.34.1/deploy/static/provider/baremetal/deploy.yaml

After a short while the installation completes:

[root@master fanli_admin]# kubectl get po,svc -n ingress-nginx
NAME                                            READY   STATUS      RESTARTS   AGE
pod/ingress-nginx-admission-create-c9qfd        0/1     Completed   0          4d2h
pod/ingress-nginx-admission-patch-6bdn4         0/1     Completed   0          4d2h
pod/ingress-nginx-controller-8555c97f66-d7tlj   1/1     Running     2          4d2h

NAME                                         TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)                      AGE
service/ingress-nginx-controller             NodePort    10.110.52.209   <none>        80:30657/TCP,443:30327/TCP   4d2h
service/ingress-nginx-controller-admission   ClusterIP   10.102.19.224   <none>        443/TCP                      4d2h

Before deploying

Put fanli_admin_svc.yaml and fanli_admin_ingress.yaml in the same directory as rollout.sh, next to fanli_admin_delpoyment.yaml.

[root@master fanli_admin]# cat fanli_admin_delpoyment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: fanliadmin
  namespace: default
spec:
  minReadySeconds: 5
  strategy:
    # indicate which strategy we want for rolling update
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 1
  replicas: 2
  selector:
    matchLabels:
      app: fanli_admin
  template:
    metadata:
      labels:
        app: fanli_admin
    spec:
      imagePullSecrets:
      - name: harbor-login-registry
      terminationGracePeriodSeconds: 10
      containers:
      - name: fanliadmin
        image: harbor.test.cn/jenkins/django:26
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 8000 # port used for external access
          name: web
          protocol: TCP
        livenessProbe:
          httpGet:
            path: /members
            port: 8000
          initialDelaySeconds: 60 # wait 60 seconds after the container starts before probing
          timeoutSeconds: 5
          failureThreshold: 12 # number of failed checks Kubernetes tolerates before giving up (default 3, minimum 1); giving up on a liveness check restarts the container, giving up on a readiness check marks the Pod as not ready
        readinessProbe:
          httpGet:
            path: /members
            port: 8000
          initialDelaySeconds: 60
          timeoutSeconds: 5
          failureThreshold: 12

[root@master fanli_admin]# cat fanli_admin_svc.yaml
apiVersion: v1
kind: Service
metadata:
  name: fanliadmin
  namespace: default
  labels:
    app: fanli_admin
spec:
  selector:
    app: fanli_admin
  type: ClusterIP
  ports:
  - name: web
    port: 8000
    targetPort: 8000

[root@master fanli_admin]# cat fanli_admin_ingress.yaml
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: fanliadmin
spec:
  rules:
  - host: fanli.test.cn
    http:
      paths:
      - path: /
        backend:
          serviceName: fanliadmin
          servicePort: 8000

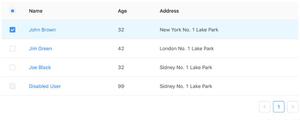

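Deployment

On the jenkins home page, open the project and click build now.

The deployment then runs automatically.

Click Console Output to follow the detailed deployment steps.

When the console output ends with success, the build has succeeded.

Now look at k8s: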

[root@master fanli_admin]# kubectl get pod
NAME                          READY   STATUS    RESTARTS   AGE
fanliadmin-5575cc56ff-pdk4r   1/1     Running   0          3m3s
fanliadmin-5575cc56ff-sz2j5   1/1     Running   0          3m4s

[root@master fanli_admin]# kubectl rollout history deployment/fanliadmin
deployment.apps/fanliadmin
REVISION  CHANGE-CAUSE
2         <none>
3         kubectl apply --filename=fanli_admin_delpoyment.yaml --record=true
4         kubectl apply --filename=fanli_admin_delpoyment.yaml --record=true
5         kubectl apply --filename=fanli_admin_delpoyment.yaml --record=true

The new version has been deployed successfully.

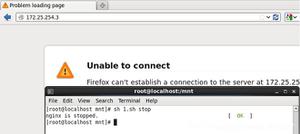

5. Access

Access through the ingress

[root@master fanli_admin]# kubectl get pod,svc -n ingress-nginx
NAME                                            READY   STATUS      RESTARTS   AGE
pod/ingress-nginx-admission-create-c9qfd        0/1     Completed   0          4d2h
pod/ingress-nginx-admission-patch-6bdn4         0/1     Completed   0          4d2h
pod/ingress-nginx-controller-8555c97f66-d7tlj   1/1     Running     2          4d2h

NAME                                         TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)                      AGE
service/ingress-nginx-controller             NodePort    10.110.52.209   <none>        80:30657/TCP,443:30327/TCP   4d2h
service/ingress-nginx-controller-admission   ClusterIP   10.102.19.224   <none>        443/TCP                      4d2h

As the output shows, external traffic for port 80 has to go to NodePort 30657 of the ingress controller, and traffic for port 443 to NodePort 30327.
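The NodePort numbers are assigned by the cluster and will differ on another installation, so it can help to read them from the PORT(S) column rather than copy them. A minimal sketch follows; the function name `node_port_for` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not from the original article):
# given a PORT(S) string such as "80:30657/TCP,443:30327/TCP",
# print the NodePort mapped to the requested service port.
node_port_for() {
    local ports="$1" want="$2"
    # Split the list on commas, then split each "port:nodePort/PROTO" on ":" and "/".
    printf '%s\n' "$ports" | tr ',' '\n' | awk -F '[:/]' -v p="$want" '$1 == p {print $2}'
}
```

The input string can be taken from the fifth column of `kubectl get svc ingress-nginx-controller -n ingress-nginx --no-headers`.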

The fanli_admin_ingress.yaml file above binds the host name fanli.test.cn.

Add a hosts entry for that name on the Windows machine,

then open http://fanli.test.cn:30657/members

The page loads successfully.

Original article: https://www.cnblogs.com/xiaobo-sre/archive/2020/07/28/13373004.html
