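Building a Face Detection App on Android with OpenCV

Preface

This article walks you step by step through building a face detection app with OpenCV that runs on an Android phone. The same approach can detect not only faces but also noses, mouths, eyes, and ears.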

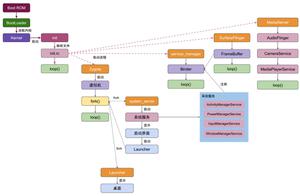

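Seeing is believing, so here is what the running app looks like.

As the screenshot above shows, after launching the app you tap “选择图片” (Select image) to pick a picture from the phone, then tap “处理” (Process). The app draws a rectangle around the face and also detects the nose. Because this is only a demo, the app is deliberately simple: it detects faces and noses only, not the other facial features. It also handles only simple pictures; if the background is too complex, the face will not be detected.

The steps below show how to build this app.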

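Step 1: Download and install Android Studio

So that you can get the same version of Android Studio that I used, I uploaded the installer to Weiyun. Download link

After downloading, keep clicking Next until the installation finishes. The installer needs network access, so it may fail partway through. If it does, retry a few times; if the problem persists, you may need to turn on a VPN.

Step 2: Download the SDK tools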

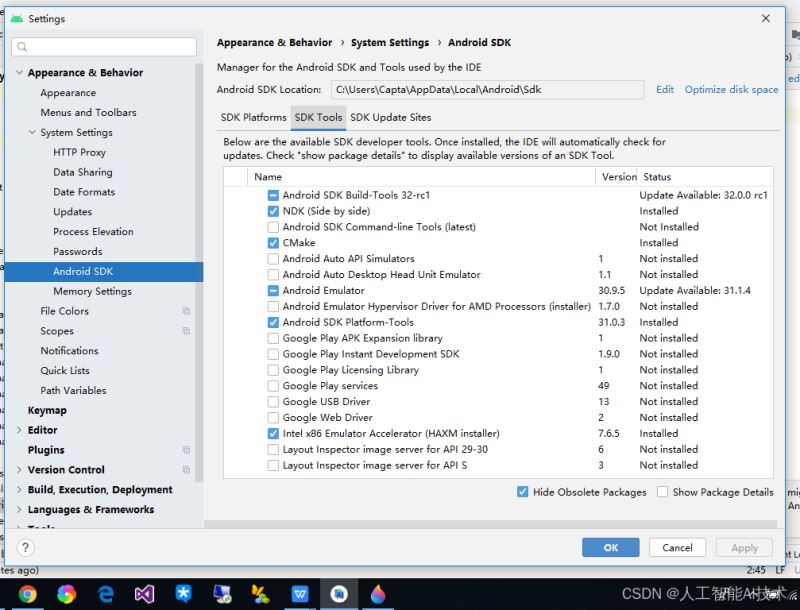

Open Android Studio and click “File” -> “Settings”.

Click “Appearance & Behavior” -> “System Settings” -> “Android SDK” -> “SDK Tools”.

Then check “NDK” and “CMake” and click “OK”. Downloading these two tools may take a while; if it fails, retry or turn on a VPN.

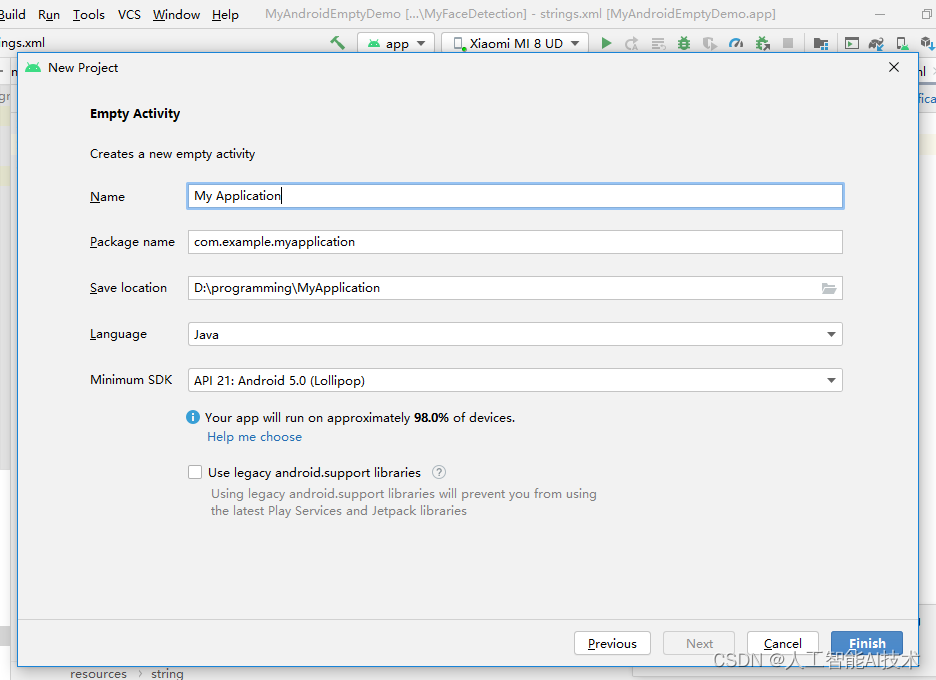

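Step 3: Create a new Android app project

Click “File” -> “New” -> “New Project”.

Select “Empty Activity” and click “Next”.

Set “Language” to “Java” and “Minimum SDK” to “API 21”, then click “Finish”.

Step 4: Download OpenCV

Download link

Unzip the archive after downloading.

Step 5: Import OpenCV

Copy the sdk folder under opencv-4.5.4-android-sdk\OpenCV-android-sdk into the Android project you created in Step 3, that is, into D:\programming\MyApplication as shown in the Step 3 screenshot. Then rename the sdk folder to openCVsdk.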

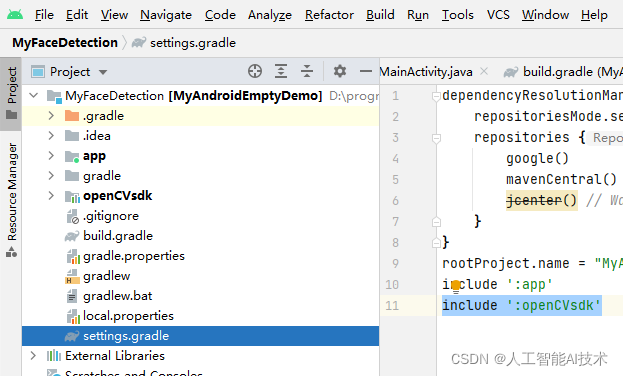

Select “Project” -> “settings.gradle” and add the line include ':openCVsdk' to the file.

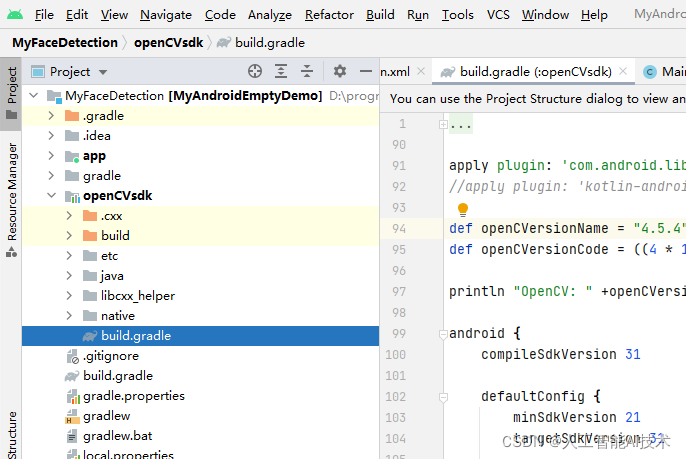

Select “Project” -> “openCVsdk” -> “build.gradle”.

Comment out the Kotlin plugin by changing apply plugin: 'kotlin-android' to //apply plugin: 'kotlin-android'.

Set compileSdkVersion, minSdkVersion, and targetSdkVersion to 31, 21, and 31 respectively.

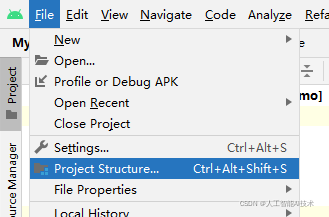

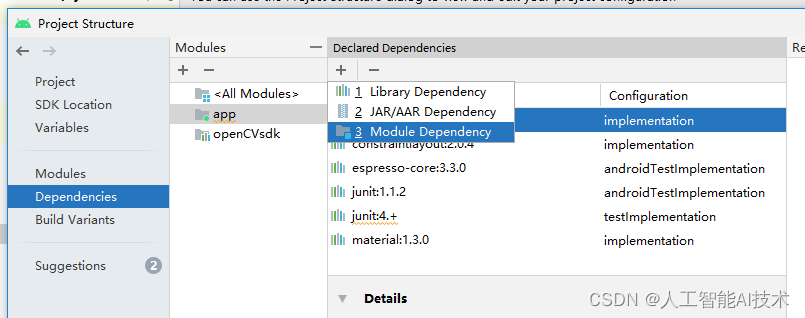

Click “File” -> “Project Structure”.

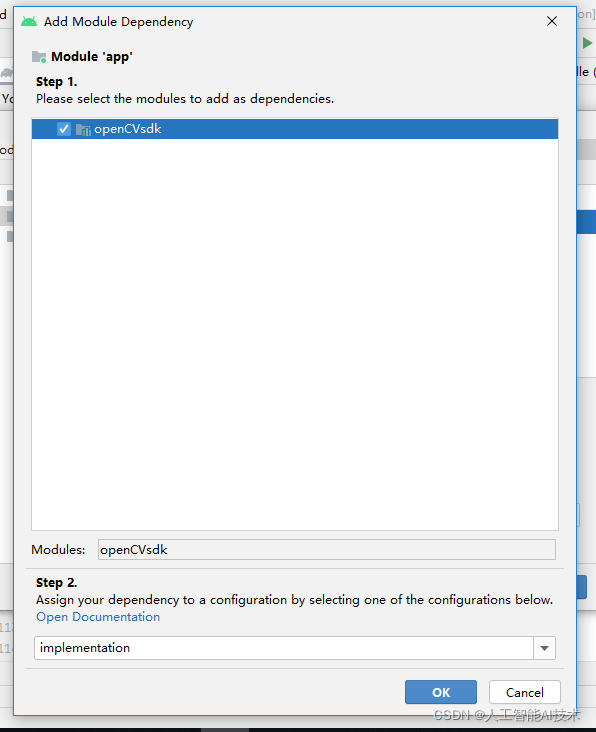

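Click “Dependencies” -> “app” -> “+” -> “Module Dependency”.

Select “openCVsdk”, click “OK”, then click “OK” in the parent window as well.

In the Android project folder, create a new folder named jniLibs under app\src, then copy everything in opencv-4.5.4-android-sdk\OpenCV-android-sdk\sdk\native\staticlibs from the OpenCV folder into jniLibs.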

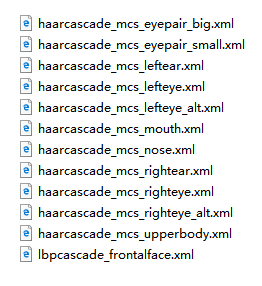

Download the classifiers. After unzipping, copy all the files shown in the figure below into app\src\main\res\raw in the project folder.
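The classifier XML files are packaged as raw resources, but the MainActivity code in Step 6 constructs each CascadeClassifier from a file path, so it first copies the resource into a private directory. The copy itself is ordinary Java I/O. The sketch below shows that step on its own so it can be run off-device; the class name `CascadeCopy`, the temporary file, and the in-memory stream standing in for `openRawResource` are assumptions for illustration, not part of the original app.

```java
import java.io.ByteArrayInputStream;
import java.io.File;
import java.io.FileOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;

// Hypothetical helper, not part of the original app.
final class CascadeCopy {
    private CascadeCopy() {}

    // Copies every byte from `in` into `target` and returns the number of bytes written.
    // Both streams are closed even if the copy fails.
    static long copyToFile(InputStream in, File target) throws IOException {
        long total = 0;
        byte[] buffer = new byte[4096];
        try (InputStream source = in;
             OutputStream out = new FileOutputStream(target)) {
            int bytesRead;
            while ((bytesRead = source.read(buffer)) != -1) {
                out.write(buffer, 0, bytesRead);
                total += bytesRead;
            }
        }
        return total;
    }

    // Demo: an in-memory stream stands in for getResources().openRawResource(...).
    // Returns the text read back from the copied file.
    static String demo() {
        byte[] fakeCascade = "<opencv_storage/>".getBytes(StandardCharsets.UTF_8);
        try {
            File target = File.createTempFile("cascade", ".xml");
            try {
                copyToFile(new ByteArrayInputStream(fakeCascade), target);
                return new String(Files.readAllBytes(target.toPath()), StandardCharsets.UTF_8);
            } finally {
                target.delete();
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

On a device, the stream would come from the app's resources and the target file from a private app directory, as the loadDetector method in Step 6 does.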

Step 6: Add the code

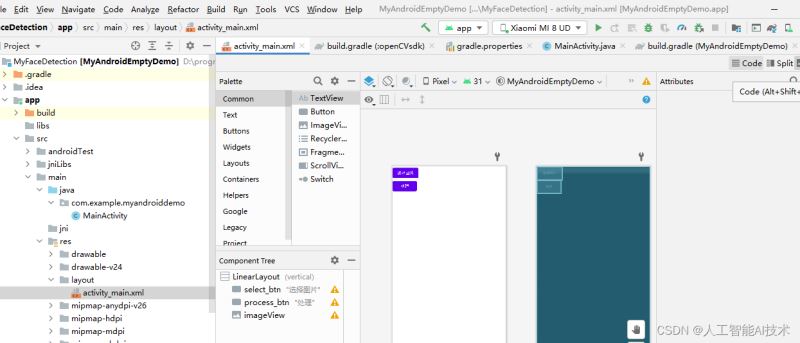

Double-click “activity_main.xml” under “Project” -> “app” -> “main” -> “res”, then click “Code” in the upper-right corner.

Then replace all of its contents with the following:

<?xml version="1.0" encoding="utf-8"?>
<LinearLayout xmlns:android="http://schemas.android.com/apk/res/android"
    xmlns:app="http://schemas.android.com/apk/res-auto"
    xmlns:tools="http://schemas.android.com/tools"
    android:layout_width="match_parent"
    android:layout_height="match_parent"
    tools:context=".MainActivity"
    android:orientation="vertical">

    <Button
        android:id="@+id/select_btn"
        android:layout_width="wrap_content"
        android:layout_height="wrap_content"
        android:text="选择图片" />

    <Button
        android:id="@+id/process_btn"
        android:layout_width="wrap_content"
        android:layout_height="wrap_content"
        android:text="处理" />

    <ImageView
        android:id="@+id/imageView"
        android:layout_width="wrap_content"
        android:layout_height="wrap_content" />

</LinearLayout>

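Double-click “MainActivity” under “Project” -> “app” -> “main” -> “java” -> “com.example…”, then replace its contents with the code below, keeping the first line of the original file (the package declaration).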

import androidx.appcompat.app.AppCompatActivity;

import android.content.Context;
import android.content.Intent;
import android.graphics.Bitmap;
import android.graphics.BitmapFactory;
import android.net.Uri;
import android.os.Bundle;
import android.util.Log;
import android.view.View;
import android.widget.Button;
import android.widget.ImageView;

import org.opencv.android.OpenCVLoader;
import org.opencv.android.Utils;
import org.opencv.core.CvType;
import org.opencv.core.Mat;
import org.opencv.core.MatOfRect;
import org.opencv.core.Point;
import org.opencv.core.Rect;
import org.opencv.core.Scalar;
import org.opencv.core.Size;
import org.opencv.imgproc.Imgproc;
import org.opencv.objdetect.CascadeClassifier;

import java.io.File;
import java.io.FileOutputStream;
import java.io.IOException;
import java.io.InputStream;

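public class MainActivity extends AppCompatActivity {

    private static final String TAG = "OCVSample::Activity";
    // Color of the rectangles drawn around detections (green, fully opaque).
    private static final Scalar FACE_RECT_COLOR = new Scalar(0, 255, 0, 255);
    public static final int JAVA_DETECTOR = 0;
    public static final int NATIVE_DETECTOR = 1;
    // Request code used to recognize the image picker's result.
    private static final int PICK_IMAGE_REQUEST = 1;

    // Size threshold in pixels above which the selected image is downsampled.
    private double max_size = 1024;
    private ImageView myImageView;
    // The currently selected image; it also receives the annotated result.
    private Bitmap selectbp;
    private Mat mGray;
    private File mCascadeFile;
    private CascadeClassifier mJavaDetector, mNoseDetector;
    private int mDetectorType = JAVA_DETECTOR;
    // Minimum face size as a fraction of the image height.
    private float mRelativeFaceSize = 0.2f;
    // Minimum face size in pixels, derived from mRelativeFaceSize.
    private int mAbsoluteFaceSize = 0;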

    @Override
    protected void onCreate(Bundle savedInstanceState) {
        super.onCreate(savedInstanceState);
        setContentView(R.layout.activity_main);
        staticLoadCVLibraries();

        myImageView = findViewById(R.id.imageView);
        myImageView.setScaleType(ImageView.ScaleType.FIT_CENTER);

        // Select button: open the system picker to choose an image.
        Button selectImageBtn = findViewById(R.id.select_btn);
        selectImageBtn.setOnClickListener(new View.OnClickListener() {
            @Override
            public void onClick(View v) {
                selectImage();
            }
        });

        // Process button: run face and nose detection on the selected image.
        Button processBtn = findViewById(R.id.process_btn);
        processBtn.setOnClickListener(new View.OnClickListener() {
            @Override
            public void onClick(View v) {
                convertGray();
            }
        });
    }

    // Launches a chooser listing the apps that can supply an image.
    private void selectImage() {
        Intent intent = new Intent();
        intent.setType("image/*");
        intent.setAction(Intent.ACTION_GET_CONTENT);
        startActivityForResult(Intent.createChooser(intent, "选择图像..."), PICK_IMAGE_REQUEST);
    }

    // Loads the OpenCV native library packaged with the app.
    private void staticLoadCVLibraries() {
        if (OpenCVLoader.initDebug()) {
            Log.i("CV", "OpenCV libraries loaded");
        } else {
            Log.e("CV", "Failed to load OpenCV libraries");
        }
    }

    private void convertGray() {
        // Nothing to process until an image has been selected.
        if (selectbp == null) {
            Log.w(TAG, "No image selected yet");
            return;
        }

        Mat src = new Mat();
        Mat dst = new Mat();
        // Utils.bitmapToMat produces an RGBA Mat, so convert from RGBA rather than BGRA.
        Utils.bitmapToMat(selectbp, src);
        Imgproc.cvtColor(src, dst, Imgproc.COLOR_RGBA2GRAY);
        mGray = dst;

        // Load each cascade once and reuse it on later clicks.
        if (mJavaDetector == null) {
            mJavaDetector = loadDetector(R.raw.lbpcascade_frontalface, "lbpcascade_frontalface.xml");
        }
        if (mNoseDetector == null) {
            mNoseDetector = loadDetector(R.raw.haarcascade_mcs_nose, "haarcascade_mcs_nose.xml");
        }

        // Recompute the minimum face size for every image, since image heights differ.
        int minFace = Math.round(mGray.rows() * mRelativeFaceSize);
        if (minFace > 0) {
            mAbsoluteFaceSize = minFace;
        }

        MatOfRect faces = new MatOfRect();
        if (mJavaDetector != null) {
            // Arguments: scaleFactor 1.1, minNeighbors 2, flags 2 (the legacy CV_HAAR_SCALE_IMAGE value).
            mJavaDetector.detectMultiScale(mGray, faces, 1.1, 2, 2,
                    new Size(mAbsoluteFaceSize, mAbsoluteFaceSize), new Size());
        }

        for (Rect face : faces.toArray()) {
            Imgproc.rectangle(src, face.tl(), face.br(), FACE_RECT_COLOR, 3);

            if (mNoseDetector == null) {
                continue;
            }
            // Search for a nose only inside the detected face region.
            Log.d(TAG, "start to detect nose!");
            Mat faceROI = mGray.submat(face);
            MatOfRect noses = new MatOfRect();
            mNoseDetector.detectMultiScale(faceROI, noses, 1.1, 2, 2, new Size(30, 30));
            Rect[] nosesArray = noses.toArray();
            if (nosesArray.length == 0) {
                continue; // no nose found in this face; the original indexed [0] and crashed here
            }
            // Nose coordinates are relative to the face ROI; shift them into image coordinates.
            Rect nose = nosesArray[0];
            Imgproc.rectangle(src,
                    new Point(face.x + nose.x, face.y + nose.y),
                    new Point(face.x + nose.x + nose.width, face.y + nose.y + nose.height),
                    FACE_RECT_COLOR, 3);
        }

        Utils.matToBitmap(src, selectbp);
        myImageView.setImageBitmap(selectbp);
    }

    private CascadeClassifier loadDetector(int rawID, String fileName) {
        // CascadeClassifier is built from a file path, so copy the raw resource
        // into app-private storage first.
        File cascadeDir = getDir("cascade", Context.MODE_PRIVATE);
        mCascadeFile = new File(cascadeDir, fileName);
        try (InputStream is = getResources().openRawResource(rawID);
             FileOutputStream os = new FileOutputStream(mCascadeFile)) {
            byte[] buffer = new byte[4096];
            int bytesRead;
            while ((bytesRead = is.read(buffer)) != -1) {
                os.write(buffer, 0, bytesRead);
            }
        } catch (IOException e) {
            Log.e(TAG, "Failed to load cascade. Exception thrown: " + e, e);
            return null;
        }

        Log.d(TAG, "start to load file: " + mCascadeFile.getAbsolutePath());
        CascadeClassifier classifier = new CascadeClassifier(mCascadeFile.getAbsolutePath());
        if (classifier.empty()) {
            Log.e(TAG, "Failed to load cascade classifier");
            return null;
        }
        Log.i(TAG, "Loaded cascade classifier from " + mCascadeFile.getAbsolutePath());
        return classifier;
    }

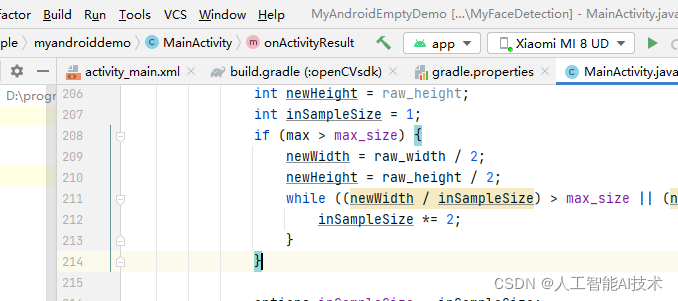

@Override

protected void onActivityResult(int requestCode, int resultCode, Intent data) {

super.onActivityResult(requestCode, resultCode, data);

if (requestCode == PICK_IMAGE_REQUEST && resultCode == RESULT_OK && data != null && data.getData() != null) {

Uri uri = data.getData();

try {

Log.d("image-tag", "start to decode selected image now...");

InputStream input = getContentResolver().openInputStream(uri);

BitmapFactory.Options options = new BitmapFactory.Options();

options.inJustDecodeBounds = true;

BitmapFactory.decodeStream(input, null, options);

int raw_width = options.outWidth;

int raw_height = options.outHeight;

int max = Math.max(raw_width, raw_height);

int newWidth = raw_width;

int newHeight = raw_height;

int inSampleSize = 1;

if (max > max_size) {

newWidth = raw_width / 2;

newHeight = raw_height / 2;

while ((newWidth / inSampleSize) > max_size || (newHeight / inSampleSize) > max_size) {

inSampleSize *= 2;

}

}

options.inSampleSize = inSampleSize;

options.inJustDecodeBounds = false;

options.inPreferredConfig = Bitmap.Config.ARGB_8888;

selectbp = BitmapFactory.decodeStream(getContentResolver().openInputStream(uri), null, options);

myImageView.setImageBitmap(selectbp);

} catch (Exception e) {

e.printStackTrace();

}

}

}

}
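One detail in convertGray() is worth spelling out. The nose detector runs on faceROI, a sub-matrix of the gray image, so the rectangles it returns are relative to the top-left corner of the face rather than to the whole picture. Drawing them on the full image means adding the face's offset to both corners. The sketch below shows only that arithmetic in plain Java; `Box` is a hypothetical stand-in for org.opencv.core.Rect so that the example runs without OpenCV, and `RoiMapping` is likewise a name made up for illustration.

```java
// Hypothetical stand-in for org.opencv.core.Rect: (x, y) is the top-left corner.
final class Box {
    final int x;
    final int y;
    final int width;
    final int height;

    Box(int x, int y, int width, int height) {
        this.x = x;
        this.y = y;
        this.width = width;
        this.height = height;
    }
}

// Hypothetical helper, not part of the original app.
final class RoiMapping {
    private RoiMapping() {}

    // Shifts a box detected inside `face` (ROI-relative coordinates)
    // into full-image coordinates. The size does not change.
    static Box toImageCoords(Box face, Box inner) {
        return new Box(face.x + inner.x, face.y + inner.y, inner.width, inner.height);
    }
}
```

For a face whose top-left corner is at (100, 50) and a nose found at (80, 90) inside it, `RoiMapping.toImageCoords` gives a top-left corner of (180, 140) in the full image.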

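Step 7: Connect a phone and run the app

First enable developer mode on your Android phone. The procedure differs by brand, so look it up on Baidu, for example by searching for “how to enable developer mode on a Xiaomi phone”.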

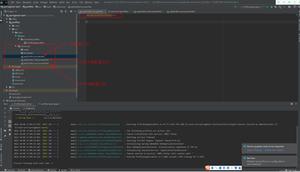

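Then connect the phone to the computer with a data cable. Once the connection succeeds, Android Studio shows your phone model. In the screenshot it shows “Xiaomi MI 8 UD”, because the development phone in this example is a Xiaomi.

Click “Run” -> “Run 'app'” to run the app on the phone. Note that the phone screen must be on. Detection may fail on your own selfies; if it does, try my downloadable test image instead.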
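One likely reason detection fails on your own photos is the minimum face size. With mRelativeFaceSize set to 0.2f, convertGray() passes a minimum size of about 20% of the image height to detectMultiScale, and objects smaller than the minimum size are ignored. The sketch below reproduces only that calculation; the class and method names are assumptions for illustration, and the pixel values in the note afterwards are made-up sample numbers.

```java
// Hypothetical helper mirroring the minimum-size rule in convertGray().
final class MinFaceSize {
    private MinFaceSize() {}

    // Minimum face edge in pixels for an image of the given height.
    static int minFaceSize(int imageHeight, float relativeFaceSize) {
        return Math.round(imageHeight * relativeFaceSize);
    }

    // True if a face of the given pixel height meets the minimum size
    // that would be passed to the detector.
    static boolean meetsMinSize(int faceHeight, int imageHeight, float relativeFaceSize) {
        return faceHeight >= minFaceSize(imageHeight, relativeFaceSize);
    }
}
```

For an image 1024 pixels high, the minimum comes out at 205 pixels, so a face about 150 pixels tall, as in a typical group photo, falls below it. Lowering mRelativeFaceSize lets smaller faces through, typically at the cost of slower detection and more false positives.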


Source link: utcz.com/p/244164.html