Combining Selenium and Regular Expressions to Speed Up a Web Scraper

Scraping AliExpress product information with Selenium

Task

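Scrape the product details listed on https://www.aliexpress.com/wholesale?SearchText=cartoon+case&d=y&origin=n&catId=0&initiative_id=SB_20200523214041. Because the page loads its content asynchronously, Selenium is needed to simulate a browser and collect the product URLs. Locating page elements directly through Selenium is slow, however, so it is combined with re or BeautifulSoup to make the scraper faster.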

Simulated login

Use Selenium to simulate the login. Once the login succeeds, the session cookies can be read from the driver.

import time

from selenium import webdriver
from selenium.webdriver.common.by import By


def login(username, password, driver=None):
    """Fill in the AliExpress login form and submit it."""
    driver.get("https://login.aliexpress.com/")
    driver.maximize_window()
    name = driver.find_element(By.ID, "fm-login-id")
    name.send_keys(username)
    name1 = driver.find_element(By.ID, "fm-login-password")
    name1.send_keys(password)
    submit = driver.find_element(By.CLASS_NAME, "fm-submit")
    time.sleep(1)  # short pause before submitting
    submit.click()
    return driver


browser = webdriver.Chrome()
browser = login("Wheabion1944@dayrep.com", "ab123456", browser)
browser.get("https://www.aliexpress.com/wholesale?trafficChannel=main&d=y&SearchText=cartoon+case&ltype=wholesale&SortType=default&page=")

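The site does little to vet new users: registering with an email address does not even require verification, so a throwaway address from http://www.fakemailgenerator.com/ is enough to sign up.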

In the end the scraper was run without logging in at all, and ten pages were scraped without hitting any anti-scraping measures.

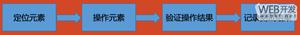

Getting the product detail page URLs

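The difficulty here is that the page is loaded asynchronously via AJAX. It does not load all of its data when it opens; as the user scrolls down, the page fetches new data and renders it, so a single call with requests cannot retrieve the full page source. The approach is to use Selenium to simulate scrolling until all the data for one page has loaded and, for the time being, to keep using Selenium to locate the elements.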

After logging in, opening the target page brings up an advertisement popup, which has to be closed first:

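# Assumes `import time` and `from selenium.webdriver.common.by import By`.
def close_win(browser):
    """Close the advertisement popup if one appears."""
    time.sleep(10)  # give the popup time to show up
    try:
        closewindow = browser.find_element(By.CLASS_NAME, "next-dialog-close")
        # Click via JavaScript, which bypasses Selenium's interactability checks.
        browser.execute_script("arguments[0].click();", closewindow)
    except Exception as e:
        print(f"close_win: no popup found. e = {e}")
    return browser  # returned so callers can write browser = close_win(browser)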

Simulate scrolling and collect all the product elements on one page:

def get_products(browser):
    """Scroll down until 60 products are rendered or 30 scroll steps have been made."""
    wait = WebDriverWait(browser, 1)
    for i in range(30):
        browser.execute_script("window.scrollBy(0,230)")
        time.sleep(1)
        products = wait.until(
            EC.presence_of_all_elements_located((By.CLASS_NAME, "product-info"))
        )
        if len(products) >= 60:
            break
        print(len(products))  # progress: number of products rendered so far
    return browser.find_elements(By.CLASS_NAME, "product-info")
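
The stopping logic of the scroll loop above does not depend on Selenium, so it can be tried out without a browser. The following is a minimal sketch under that assumption: `scroll_until`, `scroll_fn`, `count_fn`, and `FakePage` are hypothetical names introduced only for illustration. In the real scraper the two callables would wrap `browser.execute_script` and a count of the `product-info` elements.

```python
import time


def scroll_until(scroll_fn, count_fn, target=60, max_steps=30, pause=0.0):
    """Call scroll_fn until count_fn() reaches target or max_steps is exhausted.

    Returns the last observed count.
    """
    count = count_fn()
    for _ in range(max_steps):
        if count >= target:
            break
        scroll_fn()
        time.sleep(pause)  # in a real browser, give the page time to render
        count = count_fn()
    return count


class FakePage:
    """Stand-in for a lazily rendered page: each scroll reveals `step` more items."""

    def __init__(self, total, step):
        self.total = total
        self.step = step
        self.rendered = 0
        self.scrolls = 0

    def scroll(self):
        self.scrolls += 1
        self.rendered = min(self.total, self.rendered + self.step)

    def count(self):
        return self.rendered


page = FakePage(total=60, step=8)
loaded = scroll_until(page.scroll, page.count, target=60, max_steps=30)
print(loaded, page.scrolls)
```

Keeping the loop separate from the driver makes it easy to tune `target` and `max_steps` without waiting on a live page.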

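A senior student later pointed out that none of this is necessary. Although the products on the search page are only rendered by JavaScript as the user scrolls, the product data itself is already embedded in the HTML document and can be read like this: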

import json
import re

from selenium import webdriver

url = "https://www.aliexpress.com/wholesale?trafficChannel=main&d=y&SearchText=cartoon+case&ltype=wholesale&SortType=default&page="
driver = webdriver.Chrome()
driver.get(url)
# The product data is embedded in the page as a JavaScript object literal.
info = re.findall(r"window\.runParams = (\{.*\})", driver.page_source)[-1]
infos = json.loads(info)
items = infos["items"]

From there the required fields can be picked out of items one by one.
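
The extraction step can be checked offline against a small inline page. This is only a sketch: `SAMPLE_PAGE` is made up, and the field names `productId` and `title` are assumptions for illustration, not the actual keys AliExpress returns. The helper `extract_items` is likewise a hypothetical name.

```python
import json
import re

# Hypothetical page fragment; real pages contain far more data.
SAMPLE_PAGE = """<script>
window.runParams = {};
window.runParams = {"items": [{"productId": 1, "title": "case A"}, {"productId": 2, "title": "case B"}]};
</script>"""


def extract_items(page_source):
    """Return the "items" list from the last window.runParams assignment, or []."""
    matches = re.findall(r"window\.runParams = (\{.*\})", page_source)
    if not matches:
        return []
    data = json.loads(matches[-1])
    return data.get("items", [])


for item in extract_items(SAMPLE_PAGE):
    print(item["productId"], item["title"])
```

Returning an empty list when nothing matches avoids the `IndexError` that `[-1]` would raise on a page without the embedded data.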

Getting the product detail page information

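The problem in this part is the large number of pages to scrape: continuing to locate elements through Selenium would make the scraper very slow. In addition, the data on the product detail page does not appear to be returned asynchronously. The solution is to open the detail page with Selenium, download the full page source, and then match the elements with regular expressions: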

def get_pro_info(product):
    """Open a product detail page and extract its fields with regular expressions."""
    url = product.find_element(By.CLASS_NAME, "item-title").get_attribute("href")
    driver = webdriver.Chrome()
    driver.get(url)
    page = driver.page_source
    driver.quit()  # shut down the browser started for this page
    # Single-quoted raw strings, because the patterns themselves contain double quotes.
    material = re.findall(r'"skuAttr":".*?#(.*?);', page)
    color = re.findall(r'"skuAttr":".*?#.*?#(.*?)"', page)
    stock = re.findall(r'"skuAttr":".*?"availQuantity":(.*?),', page)
    price = re.findall(r'"skuAttr":".*?"actSkuCalPrice":"(.*?)"', page)
    pics = re.findall(r'<div class="sku-property-image"><img class="" src="(.*?)"', page)
    titles = re.findall(r'<img class="" src=".*?" title="(.*?)">', page)
    video = re.findall(r'id="item-video" src="(.*?)"', page)
    return material, color, stock, price, pics, titles, video
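
Patterns like these are easier to get right when they are first run against a short inline sample. The sketch below does that for the four SKU patterns. `SAMPLE` is a made-up fragment shaped the way the patterns expect, not a capture of a real page, and `PATTERNS` and `extract_fields` are hypothetical names.

```python
import re

# Hypothetical fragment shaped like the SKU data the patterns expect.
SAMPLE = (
    '{"skuAttr":"14:193#Silicone;5:100014064#Red","skuId":1001,'
    '"skuVal":{"availQuantity":50,"actSkuCalPrice":"3.99"}},'
    '{"skuAttr":"14:175#Plastic;5:100014065#Blue","skuId":1002,'
    '"skuVal":{"availQuantity":7,"actSkuCalPrice":"4.50"}}'
)

# Compile once; the same patterns are reused for every product page.
PATTERNS = {
    "material": re.compile(r'"skuAttr":".*?#(.*?);'),
    "color": re.compile(r'"skuAttr":".*?#.*?#(.*?)"'),
    "stock": re.compile(r'"skuAttr":".*?"availQuantity":(.*?),'),
    "price": re.compile(r'"skuAttr":".*?"actSkuCalPrice":"(.*?)"'),
}


def extract_fields(page):
    """Return a dict mapping each field name to the list of matches in page."""
    return {name: pattern.findall(page) for name, pattern in PATTERNS.items()}


fields = extract_fields(SAMPLE)
for name, values in fields.items():
    print(name, values)
```

Every list should have one entry per SKU; a length mismatch between fields is a sign that the page layout differs from what the patterns assume.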

Connecting to MySQL

The scraped data has to be stored in a database, so the scraper connects to MySQL. The database crawl and the table SKU were created in advance:

conn = pymysql.connect(host="localhost", user="root", password="ab226690",db="crawl")

mycursor = conn.cursor()

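The arrays are written row by row in a loop. One pitfall: pymysql's placeholder syntax looks like Python string formatting but is not the same thing. Every parameter is matched with %s, and numeric columns do not need an integer or float format specifier: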

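from pymysql.err import IntegrityError

# Write to the SKU table. Every placeholder is %s, even for numeric columns.
sql = "INSERT INTO SKU(skuID,material,color,stock,price, url) VALUES (%s,%s,%s,%s,%s,%s)"
for i in range(len(skuID)):
    if titles:
        params = (skuID[i], material[i], color[i], stock[i], price[i], url)
    else:
        params = (skuID[i], material[i], " ", stock[i], price[i], url)
    try:
        mycursor.execute(sql, params)
        conn.commit()
    except IntegrityError:
        # Raised on a duplicate primary key; the intent is to skip that record.
        # The exception class must be pymysql's own: an IntegrityError imported
        # from another library (for example sqlalchemy.exc) is a different class
        # and does not catch it, which is the likely reason the original attempt
        # still failed and the primary key was dropped as a workaround.
        conn.rollback()
        continue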
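
The skip-on-duplicate pattern can be exercised without a MySQL server. The sketch below swaps in the standard library's sqlite3 purely so that it runs anywhere; this is a substitution, not the article's setup. With pymysql the placeholder would be %s instead of ? and the exception would be pymysql.err.IntegrityError. The two-column table and the helper name `insert_skipping_duplicates` are assumptions for illustration.

```python
import sqlite3


def insert_skipping_duplicates(conn, rows):
    """Insert rows one by one; skip any row whose primary key already exists.

    Returns the number of rows skipped.
    """
    skipped = 0
    cursor = conn.cursor()
    for row in rows:
        try:
            cursor.execute("INSERT INTO SKU(skuID, price) VALUES (?, ?)", row)
            conn.commit()
        except sqlite3.IntegrityError:
            # Must be the IntegrityError of the driver in use (here sqlite3).
            conn.rollback()
            skipped += 1
    return skipped


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE SKU (skuID INTEGER PRIMARY KEY, price TEXT)")
skipped = insert_skipping_duplicates(conn, [(1, "3.99"), (2, "4.50"), (1, "9.99")])
stored = conn.execute("SELECT skuID, price FROM SKU ORDER BY skuID").fetchall()
print(skipped, stored)
```

The key point carries over unchanged: the `except` clause has to name the exception class raised by the database driver that actually executes the statement.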

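Another problem when writing is that re returns an empty list when nothing matches, and writing an empty list to MySQL raises an error. Each variable therefore has to be checked before it is written and given a suitable default if it is an empty list: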

sql = "INSERT INTO product(url, product_name, rating, reviews, video, shipping) VALUES (%s,%s,%s,%s,%s,%s)"
# re.findall returns [] when nothing matches; replace empty results with defaults.
rating = rating or "0.0"
review = review or "0"
video = video or " "
shipping = shipping or "0.0"
params = (url, pro_name, rating, review, video, shipping)
mycursor.execute(sql, params)
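
A small helper can make the default handling explicit. The sketch below is one possible variant, not the code used in this article: `first_or_default` is a hypothetical name, and unlike the snippet above it passes on the first match as a plain string rather than the whole list returned by re.findall.

```python
import re


def first_or_default(matches, default):
    """Return the first regex match, or default when re.findall found nothing."""
    return matches[0] if matches else default


# Hypothetical page fragment with a rating but no video element.
page = '<span itemprop="ratingValue">4.8</span>'
rating = first_or_default(re.findall(r'itemprop="ratingValue">(.*?)</span>', page), "0.0")
video = first_or_default(re.findall(r'id="item-video" src="(.*?)"', page), "")
print(rating, repr(video))
```

Passing a single string per column keeps the parameters in the shape the INSERT statement expects, one value per placeholder.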

Commit the changes and close the database connection:

conn.commit()
conn.close()

Improving speed

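Besides fetching the page with Selenium and then switching to re for matching, as described above, there is one more thing that improves the scraper's efficiency: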

browser = webdriver.Chrome()
browser.get(source_url)
browser = close_win(browser)

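Repeatedly creating and closing browser drivers like this takes a lot of time, so the site should be accessed through as few browser windows as possible.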

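In this task only two webdrivers are instantiated: one visits the product listing pages and the other visits the product detail pages. The trick is simply not to close the two drivers after creating them, and to keep calling get on them for each new page. The original code created a new webdriver for every page it opened; after this change the running time was cut in half.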

def scratch_page(source_url):
    """Scrape one results page and every product detail page linked from it."""
    browser = webdriver.Chrome()
    browser.get(source_url)
    browser.maximize_window()
    browser = close_win(browser)
    pros = get_products(browser)
    # A second browser, reused for all product detail pages
    browser2 = webdriver.Chrome()
    with open("ERROR.txt", "a+", encoding="utf8") as error_file:
        for pro in pros:
            # get_pro_info is the earlier function, lightly modified to take the driver
            url, pro_name, skuID, material, color, stock, price, pics, titles, video, rating, shipping, review = get_pro_info(pro, browser2)
            if len(skuID) != len(color):
                # Log products whose SKU and color counts disagree, then skip them
                error_file.write("url:" + url + "\n")
                continue
            save_data_to_sql(url, pro_name, skuID, material, color, stock, price, pics, titles, video, rating, shipping, review)
    browser.quit()
    browser2.quit()
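
The reuse idea can be shown in isolation. In this sketch `fetch_pages` and `FakeDriver` are hypothetical names: the fake stands in for a browser so that the example runs without Selenium, and in the real scraper `make_driver` would be `webdriver.Chrome`.

```python
def fetch_pages(urls, make_driver):
    """Fetch every URL with a single driver instance and return the page sources."""
    driver = make_driver()
    try:
        pages = []
        for url in urls:
            driver.get(url)
            pages.append(driver.page_source)
        return pages
    finally:
        driver.quit()  # always release the browser, even if a page fails


class FakeDriver:
    """Minimal stand-in exposing the three members used above."""

    created = 0  # counts how many drivers were instantiated

    def __init__(self):
        FakeDriver.created += 1
        self.page_source = ""
        self.closed = False

    def get(self, url):
        self.page_source = "<html>" + url + "</html>"

    def quit(self):
        self.closed = True


pages = fetch_pages(["a", "b", "c"], FakeDriver)
print(FakeDriver.created, pages)
```

However many URLs are passed in, the driver is created once and shut down once, which is where the time saving described above comes from.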

Complete code

import re
import time

import pymysql
from pymysql.err import IntegrityError  # raised by pymysql on a duplicate primary key
from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.support import expected_conditions as EC
from selenium.webdriver.support.ui import WebDriverWait


def login(username, password, driver=None):
    driver.get("https://login.aliexpress.com/")
    driver.maximize_window()
    name = driver.find_element(By.ID, "fm-login-id")
    name.send_keys(username)
    name1 = driver.find_element(By.ID, "fm-login-password")
    name1.send_keys(password)
    submit = driver.find_element(By.CLASS_NAME, "fm-submit")
    time.sleep(1)
    submit.click()
    return driver

def close_win(browser):
    time.sleep(5)  # give the advertisement popup time to show up
    try:
        closewindow = browser.find_element(By.CLASS_NAME, "next-dialog-close")
        closewindow.click()
    except Exception as e:
        print(f"close_win: no popup found. e = {e}")
    return browser

def get_products(browser):
    wait = WebDriverWait(browser, 1)
    # Scroll until 60 products are rendered or 30 scroll steps have been made
    for i in range(30):
        browser.execute_script("window.scrollBy(0,230)")
        time.sleep(1)
        products = wait.until(
            EC.presence_of_all_elements_located((By.CLASS_NAME, "product-info"))
        )
        if len(products) >= 60:
            break
    return browser.find_elements(By.CLASS_NAME, "product-info")

def get_pro_info(product, driver):
    url = product.find_element(By.CLASS_NAME, "item-title").get_attribute("href")
    driver.get(url)
    time.sleep(0.5)
    page = driver.page_source
    # Single-quoted raw strings, because the patterns themselves contain double quotes
    material = re.findall(r'"skuAttr":".*?#(.*?);', page)
    color = re.findall(r'"skuAttr":".*?#.*?#(.*?)"', page)
    stock = re.findall(r'"skuAttr":".*?"availQuantity":(.*?),', page)
    price = re.findall(r'"skuAttr":".*?"skuCalPrice":"(.*?)"', page)
    pics = re.findall(r'<div class="sku-property-image"><img class="" src="(.*?)"', page)
    titles = re.findall(r'<img class="" src=".*?" title="(.*?)">', page)
    video = re.findall(r'id="item-video" src="(.*?)"', page)
    skuID = re.findall(r'"skuId":(.*?),', page)
    pro_name = re.findall(r'"product-title-text">(.*?)</h1>', page)
    rating = re.findall(r'itemprop="ratingValue">(.*?)</span>', page)
    shipping = re.findall(r'<span class="bold">(.*?) ', page)
    review = re.findall(r'"reviewCount">(.*?) Reviews</span>', page)
    # When a product has no color options the page source is structured
    # differently, so the fields have to be matched again
    if not titles:
        material = re.findall(r'"skuAttr":".*?#(.*?)"', page)
        color = []
        pics = re.findall(r'"imagePathList":\["(.*?)",', page)
    return url, pro_name, skuID, material, color, stock, price, pics, titles, video, rating, shipping, review

def save_data_to_sql(url, pro_name, skuID, material, color, stock, price, pics, titles, video, rating, shipping, review):
    url = re.findall(r"/item/(.*?)\.html", url)
    conn = pymysql.connect(host="localhost", user="root", password="ab226690", db="crawl")
    mycursor = conn.cursor()

    # Write to the SKU table
    sql = "INSERT INTO SKU(skuID,material,color,stock,price, url) VALUES (%s,%s,%s,%s,%s,%s)"
    for i in range(len(skuID)):
        if titles:
            params = (skuID[i], material[i], color[i], stock[i], price[i], url)
        else:
            params = (skuID[i], material[i], "", stock[i], price[i], url)
        try:
            mycursor.execute(sql, params)
            conn.commit()
        except IntegrityError:
            # Duplicate primary key: skip this record
            conn.rollback()
            continue

    # Write to the image table
    sql = "INSERT INTO image(url, color, img) VALUES (%s,%s,%s)"
    if titles:
        for i in range(len(titles)):
            params = (url, titles[i], pics[i])
            try:
                mycursor.execute(sql, params)
                conn.commit()
            except IntegrityError:
                conn.rollback()
                continue
    else:
        params = (url, "", pics)
        try:
            mycursor.execute(sql, params)
            conn.commit()
        except IntegrityError:
            conn.rollback()

    # Write to the product table
    sql = "INSERT INTO product(url, product_name, rating, reviews, video, shipping) VALUES (%s,%s,%s,%s,%s,%s)"
    # re.findall returns [] when nothing matches; replace empty results with defaults
    rating = rating or "0.0"
    review = review or "0"
    video = video or ""
    shipping = shipping or "0.0"
    params = (url, pro_name, rating, review, video, shipping)
    mycursor.execute(sql, params)
    conn.commit()
    conn.close()

def scratch_page(source_url):
    browser = webdriver.Chrome()
    browser.get(source_url)
    browser.maximize_window()
    browser = close_win(browser)
    pros = get_products(browser)
    # A second browser, reused for all product detail pages
    browser2 = webdriver.Chrome()
    with open("ERROR.txt", "a+", encoding="utf8") as error_file:
        for pro in pros:
            url, pro_name, skuID, material, color, stock, price, pics, titles, video, rating, shipping, review = get_pro_info(pro, browser2)
            if len(skuID) != len(color):
                # Log products whose SKU and color counts disagree, then skip them
                error_file.write("url:" + url + "\n")
                continue
            save_data_to_sql(url, pro_name, skuID, material, color, stock, price, pics, titles, video, rating, shipping, review)
    browser.quit()
    browser2.quit()

url = "https://www.aliexpress.com/wholesale?trafficChannel=main&d=y&SearchText=cartoon+case&ltype=wholesale&SortType=default&page="

for p in range(1, 11):
    url_ = url + str(p)
    start_time = time.time()
    scratch_page(url_)
    end_time = time.time()
    print("Scraped page " + str(p) + " successfully")
    # Elapsed time is end minus start
    print("Page " + str(p) + " took: " + str(end_time - start_time) + "s")

Original article: https://www.cnblogs.com/heiheixiaocai/archive/2020/06/13/13061356.html
