Python 2.7.6 Web Crawler Examples

Environment

Ubuntu 14.04 LTS

Python 2.7.6

Beautifulsoup4-4.6.0

PyCharm Community Edition 2017.1.2

PS: I kept running into problems when using Python 3 with BeautifulSoup; switching to Python 2.7 fixed them. Make sure the versions you combine are compatible.
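Since the note above comes down to which versions are paired, a quick way to see what is actually installed may help. This is only a sketch: describe_environment is a made-up helper name, and it is written to run on both Python 2.7 and Python 3.

```python
import sys


def describe_environment():
    """Return the interpreter version and the installed bs4 version (or None)."""
    try:
        import bs4  # only importable once beautifulsoup4 is installed
        bs4_version = bs4.__version__
    except ImportError:
        bs4_version = None
    return {
        "python": "%d.%d.%d" % sys.version_info[:3],
        "bs4": bs4_version,
    }


info = describe_environment()
print("Python %s, beautifulsoup4 %s" % (info["python"], info["bs4"] or "not installed"))
```

Running it with each interpreter on the machine shows which one BeautifulSoup was installed for.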

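I. Installing BeautifulSoup

BeautifulSoup website: https://www.crummy.com/software/BeautifulSoup/

All releases: https://www.crummy.com/software/BeautifulSoup/bs4/download/

Install it by running: $ sudo pip install beautifulsoup4. In the session below, the first attempt without sudo fails with a permission error, and the second attempt with sudo succeeds.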

    zh@zh:~$ pip install beautifulsoup4
    Downloading/unpacking beautifulsoup4
      Downloading beautifulsoup4-4.6.0-py2-none-any.whl (86kB): 86kB downloaded
    Installing collected packages: beautifulsoup4
    Cleaning up...
    Exception:
    Traceback (most recent call last):
      File "/usr/lib/python2.7/dist-packages/pip/basecommand.py", line 122, in main
        status = self.run(options, args)
      File "/usr/lib/python2.7/dist-packages/pip/commands/install.py", line 283, in run
        requirement_set.install(install_options, global_options, root=options.root_path)
      File "/usr/lib/python2.7/dist-packages/pip/req.py", line 1436, in install
        requirement.install(install_options, global_options, *args, **kwargs)
      File "/usr/lib/python2.7/dist-packages/pip/req.py", line 672, in install
        self.move_wheel_files(self.source_dir, root=root)
      File "/usr/lib/python2.7/dist-packages/pip/req.py", line 902, in move_wheel_files
        pycompile=self.pycompile,
      File "/usr/lib/python2.7/dist-packages/pip/wheel.py", line 206, in move_wheel_files
        clobber(source, lib_dir, True)
      File "/usr/lib/python2.7/dist-packages/pip/wheel.py", line 193, in clobber
        os.makedirs(destsubdir)
      File "/usr/lib/python2.7/os.py", line 157, in makedirs
        mkdir(name, mode)
    OSError: [Errno 13] Permission denied: '/usr/local/lib/python2.7/dist-packages/bs4'

    Storing debug log for failure in /home/zhanghu/.pip/pip.log
    zh@zh:~$ sudo pip install beautifulsoup4
    [sudo] password for zhanghu:
    Downloading/unpacking beautifulsoup4
      Downloading beautifulsoup4-4.6.0-py2-none-any.whl (86kB): 86kB downloaded
    Installing collected packages: beautifulsoup4
    Successfully installed beautifulsoup4
    Cleaning up...
    zh@zh:~$
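The Permission denied error in the first attempt means the current user cannot write to the system-wide package directory. The check below is a rough sketch of that condition, not what pip itself does: can_install_system_wide is a made-up helper, and the directory reported by sysconfig may differ from the one pip uses on Debian/Ubuntu.

```python
import os
import sysconfig


def can_install_system_wide():
    """Return True if the current user may write to the interpreter's package directory."""
    target = sysconfig.get_paths()["purelib"]
    # Walk up to the closest existing directory, since the target may not exist yet.
    while not os.path.isdir(target):
        parent = os.path.dirname(target)
        if parent == target:
            break
        target = parent
    return os.access(target, os.W_OK)


print("system-wide install possible without sudo: %s" % can_install_system_wide())
```

When this prints False, the usual options are sudo, as in the session above, or a per-user install with pip install --user beautifulsoup4.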

II. Crawler script example 1

    # coding: utf-8
    import urllib2

    from bs4 import BeautifulSoup


    class Splider:
        """Ties together URL management, downloading, parsing and output."""

        def __init__(self):
            self.manager = Manager()
            self.downloader = Download()
            self.parser = Parse()
            self.outputer = Output()

        def craw_search_word(self, root_url):
            count = 0
            self.manager.add_new_url(root_url)
            while self.manager.has_new_url():
                try:
                    if count >= 10000:
                        break
                    print "Loading items " + str(count) + " to " + str(count + 15)
                    current_url = self.manager.get_new_url()
                    html_content = self.downloader.download(current_url)
                    new_url, data = self.parser.parse(root_url, html_content)
                    self.manager.add_new_url(new_url)
                    # self.outputer.collect(data)
                    self.outputer.output(data)
                    count += 15
                except urllib2.URLError as e:
                    if hasattr(e, "reason"):
                        # e.reason is not always a string, so convert it explicitly
                        print "crawl failed, reason: " + str(e.reason)


    class Manager(object):
        """Keeps track of URLs still to fetch and URLs already fetched."""

        def __init__(self):
            self.new_urls = set()
            self.old_urls = set()

        def add_new_url(self, new_url):
            if new_url is None:
                return None
            elif new_url not in self.new_urls and new_url not in self.old_urls:
                self.new_urls.add(new_url)

        def has_new_url(self):
            return len(self.new_urls) != 0

        def get_new_url(self):
            new_url = self.new_urls.pop()
            self.old_urls.add(new_url)
            return new_url


    class Download(object):

        def download(self, url):
            if url is None:
                return None
            headers = {
                "User-Agent" : "Mozilla/5.0 (Macintosh; Intel Mac OS X 10.11; rv:52.0) Gecko/20100101 Firefox/52.0",
                "Cookie" : "Hm_lvt_0c0e9d9b1e7d617b3e6842e85b9fb068=1466075280; __utma=194070582.826403744.1466075281.1466075281.1466075281.1; __utmv=194070582.|2=User%20Type=Visitor=1; signin_redirect=http%3A%2F%2Fwww.jianshu.com%2Fsearch%3Fq%3D%25E7%2594%259F%25E6%25B4%25BB%26page%3D1%26type%3Dnote; _session_id=ajBLb3h5SDArK05NdDY2V0xyUTNpQ1ZCZjNOdEhvNUNicmY0b0NtMnVuUUdkRno2emEyaFNTT3pKWTVkb3ZKT1dvbTU2c3c0VGlGS0wvUExrVW1wbkg1cDZSUTFMVVprbTJ2aXhTcTdHN2lEdnhMRUNkM1FuaW1vdFpNTDFsQXgwQlNjUnVRczhPd2FQM2sveGJCbDVpQUVWN1ZPYW1paUpVakhDbFVPbEVNRWZzUXh5R1d0LzE2RkRnc0lJSHJEOWtnaVM1ZE1yMkt5VC90K2tkeGJQMlVOQnB1Rmx2TFpxamtDQnlSakxrS1lxS0hONXZnZEx0bDR5c2w4Mm5lMitESTBidWE4NTBGNldiZXVQSjhjTGNCeGFOUlpESk9lMlJUTDVibjNBUHdDeVEzMGNaRGlwYkg5bHhNeUxJUVF2N3hYb3p5QzVNTDB4dU4zODljdExnPT0tLU81TTZybUc3MC9BZkltRDBiTEsvU2c9PQ%3D%3D--096a8e4707e00b06b996e8722a58e25aa5117ee9; CNZZDATA1258679142=1544596149-1486533130-https%253A%252F%252Fwww.baidu.com%252F%7C1486561790; _ga=GA1.2.826403744.1466075281; _gat=1",
                "Accept" : "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8"
            }
            content = ""
            try:
                request = urllib2.Request(url, headers = headers)
                response = urllib2.urlopen(request)
                content = response.read()
            except urllib2.URLError as e:
                if hasattr(e, "reason") and hasattr(e, "code"):
                    print e.code
                    print e.reason
                else:
                    print "request failed"
            return content


    class Parse(object):

        def get_new_data(self, root_url, ul):
            data = set()
            lis = ul.find_all("li", {"class" : "have-img"})
            for li in lis:
                cont = li.find("div", {"class" : "content"})
                title = cont.find("a", {"class" : "title"}).get_text()
                title_url = root_url + cont.a["href"]
                data.add((title, title_url))
            return data

        def get_new_url(self, root_url, ul):
            lis = ul.find_all("li", {"class" : "have-img"})
            if not lis:
                # no articles on this page, so there is no next page to request
                return None
            data_category_id = ul["data-category-id"]
            # max_id is the last article's data-recommended-at minus 1
            max_id = int(lis[-1]["data-recommended-at"]) - 1
            new_url = root_url + "?data_category_id=" + data_category_id + "&max_id=" + str(max_id)
            return new_url

        def parse(self, root_url, content):
            soup = BeautifulSoup(content, "html.parser", from_encoding="utf-8")
            div = soup.find(id="list-container")
            ul = div.find("ul", {"class" : "note-list"}) if div is not None else None
            if ul is None:
                # the download failed or the page layout changed: nothing to extract
                return None, set()
            new_url = self.get_new_url(root_url, ul)
            new_data = self.get_new_data(root_url, ul)
            return new_url, new_data


    class Output(object):

        def __init__(self):
            self.datas = set()

        def collect(self, data):
            if data is None:
                return None
            for item in data:
                if item is None or item in self.datas:
                    continue
                self.datas.add(item)

        def output(self, data):
            for item in data:
                title, url = item
                print title + " " + url


    if __name__ == "__main__":
        root_url = "http://www.jianshu.com/recommendations/notes"
        splider = Splider()
        splider.craw_search_word(root_url)

Reference: http://www.jianshu.com/p/ffc221e3e404
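The Manager class in the script is what keeps the crawler from fetching the same page twice: one set holds URLs still to visit, the other holds URLs already visited. Below is a minimal standalone sketch of that idea. UrlFrontier and the example.invalid URLs are made-up names for illustration, and the code is written to run on both Python 2.7 and Python 3.

```python
class UrlFrontier(object):
    """Hypothetical stand-in for Manager: a pending set and a visited set."""

    def __init__(self):
        self.pending = set()
        self.visited = set()

    def add(self, url):
        # Ignore empty values and anything already queued or already fetched.
        if url and url not in self.pending and url not in self.visited:
            self.pending.add(url)

    def has_next(self):
        return bool(self.pending)

    def next(self):
        # Move one URL from "pending" to "visited" and hand it to the caller.
        url = self.pending.pop()
        self.visited.add(url)
        return url


frontier = UrlFrontier()
frontier.add("http://example.invalid/notes?max_id=3")
frontier.add("http://example.invalid/notes?max_id=3")  # duplicate, ignored
first = frontier.next()
frontier.add(first)  # already visited, ignored
print("%s pending=%s" % (first, frontier.has_next()))
```

Because set.pop() returns an arbitrary element, the visiting order is unspecified once several URLs are queued; that does not matter here because each page yields at most one next-page URL.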

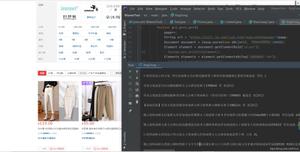
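The Download class tells failures apart by checking whether the exception has both a code and a reason attribute. An equivalent and perhaps clearer test is the exception type: HTTPError (the server answered with an error status) is a subclass of URLError (the request could not be completed at all). The sketch below builds both exceptions by hand so that it runs without network access; describe_error is a made-up helper, and the import fallback lets it run on Python 2.7 and Python 3.

```python
try:  # Python 3
    from urllib.error import HTTPError, URLError
except ImportError:  # Python 2
    from urllib2 import HTTPError, URLError


def describe_error(err):
    """Turn a urllib error into a one-line message."""
    # HTTPError is a subclass of URLError, so it has to be checked first.
    if isinstance(err, HTTPError):
        return "HTTP %d: %s" % (err.code, err.msg)
    return "connection failed: %s" % (err.reason,)


http_error = HTTPError("http://example.invalid/", 404, "Not Found", None, None)
print(describe_error(http_error))
print(describe_error(URLError("timed out")))
```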

III. Crawler script example 2

Purpose: download the images from a Baidu Tieba thread.

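    #coding=utf-8
    import urllib
    import re


    def getHtml(url):
        page = urllib.urlopen(url)
        html = page.read()
        return html


    def getImg(html):
        # capture every .jpg URL in a src attribute that is followed by pic_ext
        reg = r'src="(.+?\.jpg)" pic_ext'
        imgre = re.compile(reg)
        imglist = re.findall(imgre, html)
        x = 0
        for imgurl in imglist:
            # save the images as 0.jpg, 1.jpg, ... in the current directory
            urllib.urlretrieve(imgurl, '%s.jpg' % x)
            x += 1
        # return the list so that the print below shows the URLs instead of None
        return imglist


    html = getHtml("https://tieba.baidu.com/p/2460150866")
    print getImg(html)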

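When the script finishes, the images have been saved in the current working directory as 0.jpg, 1.jpg, and so on.

Reference: http://www.cnblogs.com/fnng/p/3576154.html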
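The script assumes that every matched src value is already an absolute URL. As a sketch of how extraction could be separated from downloading, and how relative paths might be resolved, the snippet below builds a list of (URL, file name) pairs without touching the network. plan_downloads, the sample HTML and the example.invalid addresses are invented for illustration; the import fallback lets it run on Python 2.7 and Python 3.

```python
import re

try:  # Python 3
    from urllib.parse import urljoin
except ImportError:  # Python 2
    from urlparse import urljoin

IMG_RE = re.compile(r'src="(.+?\.jpg)" pic_ext')


def plan_downloads(page_url, html):
    """Return (absolute_url, local_filename) pairs for every matched image."""
    plan = []
    for index, src in enumerate(IMG_RE.findall(html)):
        # urljoin leaves absolute URLs alone and resolves relative ones.
        plan.append((urljoin(page_url, src), "%d.jpg" % index))
    return plan


# Made-up fragment that imitates the attribute layout the pattern expects.
sample = (
    '<img class="BDE_Image" src="http://img.example.invalid/a.jpg" pic_ext="jpeg">'
    '<img class="BDE_Image" src="/static/b.jpg" pic_ext="jpeg">'
)
for url, filename in plan_downloads("http://example.invalid/p/1", sample):
    print("%s <- %s" % (filename, url))
```

Each pair could then be passed to urllib.urlretrieve as in the script above.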

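In the same way, by changing the URL and the regular expression, the script can download the "long Weibo image" of a Jianshu article. (This script works quite well.)

For example, take the page: http://www.jianshu.com/p/ffc221e3e404

1. Print the whole page: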

    #coding=utf-8
    import urllib


    def getHtml(url):
        page = urllib.urlopen(url)
        html = page.read()
        return html


    html = getHtml("http://www.jianshu.com/p/ffc221e3e404")
    print html

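Copy the terminal output into a text file, or right-click in Firefox and choose "View Page Source", then search for the keyword "jpg" to find the image's download URL, for example: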

<a class="share-circle" data-toggle="tooltip" href="http://cwb.assets.jianshu.io/notes/images/8978350/weibo/image_796105d290f5.jpg" target="_blank" data-original-title="下载长微博图片">

Change the regular expression in the script above from:

    reg = r'src="(.+?\.jpg)" pic_ext'

to:

    reg = r'href="(.+?\.jpg)" target'

Then run the script. The complete script:

    #coding=utf-8
    import urllib
    import re


    def getHtml(url):
        page = urllib.urlopen(url)
        html = page.read()
        return html


    def getImg(html):
        # capture every .jpg URL in an href attribute that is followed by target
        reg = r'href="(.+?\.jpg)" target'
        imgre = re.compile(reg)
        imglist = re.findall(imgre, html)
        x = 0
        for imgurl in imglist:
            urllib.urlretrieve(imgurl, '%s.jpg' % x)
            x += 1
        # return the list so that the print below shows the URLs instead of None
        return imglist


    html = getHtml("http://www.jianshu.com/p/ffc221e3e404")
    print getImg(html)

The "long Weibo image" has now been downloaded.

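By changing the URL and the regular expression you can scrape whatever content you want from a page. How the regular expression should be written depends entirely on the actual page; there is no universal pattern.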
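Because a regular expression has to be tuned to each page, a different technique is sometimes less brittle: let an HTML parser find the attributes and filter on their values. The sketch below uses the standard library's HTMLParser rather than the regex approach of the scripts above. JpgLinkCollector is a made-up class name and the input is an abridged copy of the tag quoted earlier plus an invented img tag; it is written to run on Python 2.7 and Python 3.

```python
try:  # Python 3
    from html.parser import HTMLParser
except ImportError:  # Python 2
    from HTMLParser import HTMLParser


class JpgLinkCollector(HTMLParser):
    """Collect href/src attribute values that end in .jpg."""

    def __init__(self):
        HTMLParser.__init__(self)
        self.links = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the tag being opened.
        for name, value in attrs:
            if name in ("href", "src") and value and value.lower().endswith(".jpg"):
                self.links.append(value)


collector = JpgLinkCollector()
collector.feed(
    '<a class="share-circle" href="http://cwb.assets.jianshu.io/notes/images/'
    '8978350/weibo/image_796105d290f5.jpg" target="_blank">download</a>'
    '<img src="logo.png">'
)
print(collector.links)
```

This version does not care about attribute order or about what follows the URL, which are exactly the details the regular expressions above depend on.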

2. Filter the page data

Filter it with a regular expression.
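One detail of these patterns is worth seeing in isolation: the ? in .+? makes the match non-greedy, so it stops at the first .jpg" target instead of the last one on the line. The sketch below applies both variants to an abridged copy of the anchor tag quoted earlier, followed by a second, made-up link on the same line. It runs on Python 2.7 and Python 3.

```python
import re

# The anchor tag quoted earlier (abridged), followed by an invented second link.
html = (
    '<a class="share-circle" data-toggle="tooltip" '
    'href="http://cwb.assets.jianshu.io/notes/images/8978350/weibo/image_796105d290f5.jpg" '
    'target="_blank">'
    '<a href="http://example.invalid/other.jpg" target="_blank">'
)

lazy = re.findall(r'href="(.+?\.jpg)" target', html)   # non-greedy, as in the script
greedy = re.findall(r'href="(.+\.jpg)" target', html)  # greedy variant for comparison

print("non-greedy found %d links" % len(lazy))
print("greedy found %d match of length %d" % (len(greedy), len(greedy[0])))
```

The non-greedy pattern yields one entry per link, while the greedy one swallows everything from the first URL to the end of the second.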

Appendix:

Script that downloads the images on the page "http://www.3lian.com/gif/2017/05-12/669d0db509ee5c5a8f4a607f64b8e8c1.html":

    #coding=utf-8
    import urllib
    import re


    def getHtml(url):
        page = urllib.urlopen(url)
        html = page.read()
        return html


    def getImg(html):
        # capture every .jpg URL that appears in a src attribute
        reg = r'src="(.+?\.jpg)"'
        imgre = re.compile(reg)
        imglist = re.findall(imgre, html)
        x = 0
        for imgurl in imglist:
            urllib.urlretrieve(imgurl, '%s.jpg' % x)
            x += 1
        # return the list so that the print below shows the URLs instead of None
        return imglist


    html = getHtml("http://www.3lian.com/gif/2017/05-12/669d0db509ee5c5a8f4a607f64b8e8c1.html")
    print getImg(html)

http://blog.csdn.net/lishk314/article/details/44678019# Downloading music files with Python

IV. Crawler script example 3

Purpose: download videos from a video site.

    import urllib2

    print "start"
    for i in range(1, 23, 1):
        # the videos are numbered pand-01.mp4 to pand-22.mp4 on the server
        url = 'http://newoss.maiziedu.com/yxyh4/pand-%02d.mp4' % i
        f = urllib2.urlopen(url)
        data = f.read()
        name = 'python_pandas_%02d.mp4' % (i)
        with open(name, "wb") as code:
            code.write(data)
    print 'end'

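Download result: the videos are saved in the current directory as python_pandas_01.mp4 through python_pandas_22.mp4.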
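The loop above reads each video completely into memory with f.read() before writing it out. For large files, copying in chunks is a common alternative. The sketch below shows that idea with an in-memory stream standing in for the network response, so it runs offline; save_stream and video_names are made-up helpers, and the code runs on Python 2.7 and Python 3.

```python
import io
import os
import shutil
import tempfile


def save_stream(source, path, chunk_size=64 * 1024):
    """Copy a file-like object to disk in chunks instead of one big read()."""
    with open(path, "wb") as target:
        shutil.copyfileobj(source, target, chunk_size)


def video_names(first=1, last=22):
    """Reproduce the zero-padded file names used by the loop above."""
    return ["python_pandas_%02d.mp4" % i for i in range(first, last + 1)]


# Stand-in for a response: 200000 bytes of dummy data written to a temp folder.
demo_path = os.path.join(tempfile.mkdtemp(), "demo.bin")
save_stream(io.BytesIO(b"x" * 200000), demo_path)
print("%d bytes written" % os.path.getsize(demo_path))
print("%s ... %s" % (video_names()[0], video_names()[-1]))
```

The object returned by urllib2.urlopen is file-like, so it could be passed to save_stream as source in place of the BytesIO object.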

Reference:

http://blog.csdn.net/hpuhjl/article/details/56012081 Python: downloading videos from a video site

See also:

http://blog.csdn.net/hpuhjl/article/details/52253698 Python: downloading files

http://www.cnblogs.com/yinsolence/p/5140297.html?utm_source=tuicool&utm_medium=referral Crawling and downloading all Maizi Education (麦子学院) video tutorials with Python

http://git.oschina.net/getsai/mzSpider/tree/master Crawling and downloading all Maizi Education (麦子学院) video tutorials with Python

http://blog.csdn.net/jiangwei0910410003/article/details/19806999 Debugging by capturing packets with Fiddler
