Implementing Gradient Descent and Logistic Regression in Python

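This article shares example Python code for implementing gradient descent and logistic regression, for your reference. The details are as follows.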

import numpy as np

import pandas as pd

import os

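data = pd.read_csv("iris.csv")  # the iris data here has already been preprocessed

m, n = data.shape

# Copy the feature columns; the last column stays at 1 and acts as the bias term
dataMatIn = np.ones((m, n))

dataMatIn[:, :-1] = data.iloc[:, :-1]  # .iloc replaces .ix, which was removed in pandas 1.0

classLabels = data.iloc[:, -1]  # the last column of the file holds the class labels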
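To see what the loading step produces, here is a minimal sketch on a made-up two-row table. The column names `x1`, `x2`, `label` and the values are assumptions for illustration, not the contents of the real iris.csv. The slot that held the label becomes a column of ones, which works as the bias term.

```python
import numpy as np
import pandas as pd

# Made-up stand-in for the preprocessed iris file (hypothetical columns and values)
toy = pd.DataFrame({"x1": [5.1, 6.2], "x2": [3.5, 2.9], "label": [0, 1]})

m, n = toy.shape
X = np.ones((m, n))                        # start from all ones
X[:, :-1] = toy.iloc[:, :-1].to_numpy()    # overwrite everything except the last column
y = toy.iloc[:, -1].to_numpy()             # labels come from the last column

print(X)
print(y)
```

Because the matrix keeps the same number of columns as the file, no separate step is needed to append the bias column.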

# Sigmoid function and data initialization

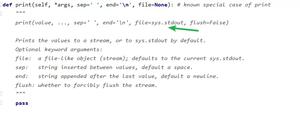

def sigmoid(z):
    return 1 / (1 + np.exp(-z))
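For inputs with a large absolute value, `np.exp(-z)` can overflow and emit a runtime warning. The following is a sketch of one common workaround, not part of the original code; the name `stable_sigmoid` is a hypothetical helper introduced here.

```python
import numpy as np

def stable_sigmoid(z):
    """Sigmoid that never passes a large positive argument to np.exp."""
    z = np.atleast_1d(np.asarray(z, dtype=float))
    out = np.empty_like(z)
    pos = z >= 0
    # For z >= 0, exp(-z) is at most 1, so the usual formula is safe.
    out[pos] = 1.0 / (1.0 + np.exp(-z[pos]))
    # For z < 0, use the equivalent form exp(z) / (1 + exp(z)).
    ez = np.exp(z[~pos])
    out[~pos] = ez / (1.0 + ez)
    return out

print(stable_sigmoid([-1000.0, 0.0, 1000.0]))
```

Both branches compute the same function; splitting on the sign of `z` only changes which exponential is evaluated.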

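# Stochastic gradient descent
def Stocgrad_descent(dataMatIn, classLabels):
    dataMatrix = np.asmatrix(dataMatIn)  # training set, shape (m, n)
    labelMat = np.asmatrix(classLabels).transpose()  # y values, shape (m, 1)
    m, n = np.shape(dataMatrix)  # m: number of rows of dataMatrix, n: number of columns
    weights = np.ones((n, 1))  # initial regression coefficients, shape (n, 1)
    alpha = 0.001  # step size
    maxCycle = 500  # maximum number of passes over the data
    epsilon = 0.001  # tolerance on the change in weights between passes
    error = np.zeros((n, 1))  # weights at the end of the previous pass
    for i in range(maxCycle):
        for j in range(m):
            # Stochastic step: use sample j only
            h = sigmoid(dataMatrix[j] * weights)  # sigmoid of the linear score
            weights = weights + alpha * dataMatrix[j].transpose() * (labelMat[j] - h)  # gradient step
        # Stop once a full pass barely changes the weights
        if np.linalg.norm(weights - error) < epsilon:
            break
        else:
            error = weights
    return weights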
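As a self-contained check of the per-sample update rule, the sketch below runs it on synthetic data with plain NumPy arrays instead of `np.matrix`. Everything here is an assumption for illustration: the function name `sgd_logistic`, the learning rate, the number of epochs, the random shuffling, and the toy data itself.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sgd_logistic(X, y, alpha=0.1, epochs=200, seed=0):
    """Update the weights one sample at a time (sketch, hypothetical defaults)."""
    rng = np.random.default_rng(seed)
    m, n = X.shape
    w = np.zeros(n)
    for _ in range(epochs):
        for j in rng.permutation(m):          # visit samples in random order
            h = sigmoid(X[j] @ w)             # predicted probability for sample j
            w += alpha * (y[j] - h) * X[j]    # same update rule, one sample
    return w

# Synthetic, well-separated toy data (made up for illustration)
rng = np.random.default_rng(42)
x0 = rng.normal(loc=-2.0, scale=0.5, size=(50, 1))
x1 = rng.normal(loc=2.0, scale=0.5, size=(50, 1))
features = np.vstack([x0, x1])
X = np.hstack([features, np.ones((100, 1))])  # last column is the bias term
y = np.concatenate([np.zeros(50), np.ones(50)])

w = sgd_logistic(X, y)
accuracy = np.mean((sigmoid(X @ w) > 0.5) == y)
print(w, accuracy)
```

Shuffling the sample order each epoch is a common choice for stochastic updates; the function above it in the article walks the rows in file order instead.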

# Logistic regression
def pred_result(dataMatIn):
    dataMatrix = np.asmatrix(dataMatIn)
    r = Stocgrad_descent(dataMatIn, classLabels)
    p = sigmoid(dataMatrix * r)  # probabilities predicted by the model
    # Binarize the predictions at a threshold of 0.5
    pred = []
    for i in range(len(data)):
        if p[i, 0] > 0.5:
            pred.append(1)
        else:
            pred.append(0)
    data["pred"] = pred
    # Delete a previous output file if one exists, so the first run does not fail
    if os.path.exists("data_and_pred.csv"):
        os.remove("data_and_pred.csv")
    data.to_csv("data_and_pred.csv", index=False, encoding="utf_8_sig")  # save the data set

pred_result(dataMatIn)
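The thresholding loop can also be written without an explicit loop, and the predictions can be compared against the true labels. This is a minimal sketch; `binarize` and `accuracy` are hypothetical helper names, and the probabilities and labels below are made up.

```python
import numpy as np

def binarize(probabilities, threshold=0.5):
    """Map probabilities to 0/1 labels in one vectorized step."""
    p = np.asarray(probabilities).ravel()  # accepts (m, 1) matrices or flat arrays
    return (p > threshold).astype(int)

def accuracy(pred, labels):
    """Fraction of predictions equal to the true labels."""
    return float(np.mean(np.asarray(pred) == np.asarray(labels)))

p = np.array([[0.1], [0.7], [0.5], [0.9]])  # made-up probabilities
labels = np.array([0, 1, 1, 1])             # made-up true labels
pred = binarize(p)
print(pred, accuracy(pred, labels))
```

As in the loop above, a probability of exactly 0.5 maps to class 0 because the comparison is strict.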

That is all for implementing gradient descent and logistic regression in Python. Source: utcz.com/z/328092.html