[Python] How to run multiple spiders in Scrapy

    scrapy crawl kr.py
    2018-07-09 00:03:06 [scrapy.utils.log] INFO: Scrapy 1.5.0 started (bot: multispider)
    2018-07-09 00:03:06 [scrapy.utils.log] INFO: Versions: lxml 3.2.1.0, libxml2 2.9.1, cssselect 1.0.1, parsel 1.4.0, w3lib 1.19.0, Twisted 16.2.0, Python 2.7.5 (default, Apr 11 2018, 07:36:10) - [GCC 4.8.5 20150623 (Red Hat 4.8.5-28)], pyOpenSSL 16.2.0 (OpenSSL 1.0.1e-fips 11 Feb 2013), cryptography 1.7.1, Platform Linux-3.10.0-514.26.2.el7.x86_64-x86_64-with-centos-7.3.1611-Core
    Traceback (most recent call last):
      File "/usr/bin/scrapy", line 11, in <module>
        sys.exit(execute())
      File "/usr/lib64/python2.7/site-packages/scrapy/cmdline.py", line 150, in execute
        _run_print_help(parser, _run_command, cmd, args, opts)
      File "/usr/lib64/python2.7/site-packages/scrapy/cmdline.py", line 90, in _run_print_help
        func(*a, **kw)
      File "/usr/lib64/python2.7/site-packages/scrapy/cmdline.py", line 157, in _run_command
        cmd.run(args, opts)
      File "/usr/lib64/python2.7/site-packages/scrapy/commands/crawl.py", line 57, in run
        self.crawler_process.crawl(spname, **opts.spargs)
      File "/usr/lib64/python2.7/site-packages/scrapy/crawler.py", line 170, in crawl
        crawler = self.create_crawler(crawler_or_spidercls)
      File "/usr/lib64/python2.7/site-packages/scrapy/crawler.py", line 198, in create_crawler
        return self._create_crawler(crawler_or_spidercls)
      File "/usr/lib64/python2.7/site-packages/scrapy/crawler.py", line 202, in _create_crawler
        spidercls = self.spider_loader.load(spidercls)
      File "/usr/lib64/python2.7/site-packages/scrapy/spiderloader.py", line 71, in load
        raise KeyError("Spider not found: {}".format(spider_name))
    KeyError: 'Spider not found: kr.py'

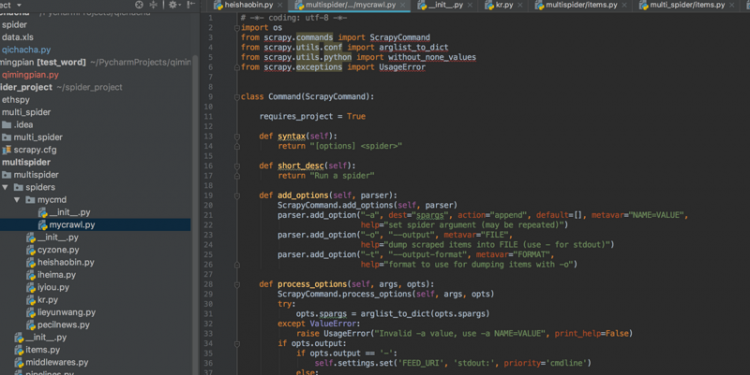

Running multiple spiders in Scrapy raises an error.

Is the way it is written in the screenshot correct?

Answers
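The traceback shows that `scrapy crawl` was given `kr.py`. `scrapy crawl` looks a spider up by the `name` attribute of its class, not by its file name, which is why the loader raises `KeyError: 'Spider not found: kr.py'`. Below is a minimal sketch of running several spiders in one process with `CrawlerProcess`. The spider names `kr` and `other` are hypothetical, since the question does not show the spider classes, and `check_spider_names` and `run_spiders` are illustrative helpers, not part of Scrapy.

```python
def check_spider_names(requested, available):
    """Return the requested names that are not registered spider names."""
    return [name for name in requested if name not in available]


def run_spiders(spider_names):
    """Run several spiders in one process; call this from inside a Scrapy project."""
    # Imported here so the helper above can be used without Scrapy installed.
    from scrapy.crawler import CrawlerProcess
    from scrapy.utils.project import get_project_settings

    process = CrawlerProcess(get_project_settings())

    # spider_loader.list() returns the `name` attribute of every spider
    # found in the project, the same names that `scrapy list` prints.
    missing = check_spider_names(spider_names, process.spider_loader.list())
    if missing:
        raise KeyError("Spider not found: {}".format(", ".join(missing)))

    for name in spider_names:
        # Schedule each spider by its name (e.g. "kr"), not its file name ("kr.py").
        process.crawl(name)

    # Starts the Twisted reactor and blocks until all scheduled spiders finish.
    process.start()
```

Assuming the spiders really are named `kr` and `other`, calling `run_spiders(["kr", "other"])` from a script in the project root (next to `scrapy.cfg`) would run both in the same process. Running `scrapy list` first shows which names the project actually registers.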

Source: utcz.com/a/79389.html