Calling all Python scraping experts: how do I deal with this site's anti-scraping protection?

https://www.everysaving.co.uk/

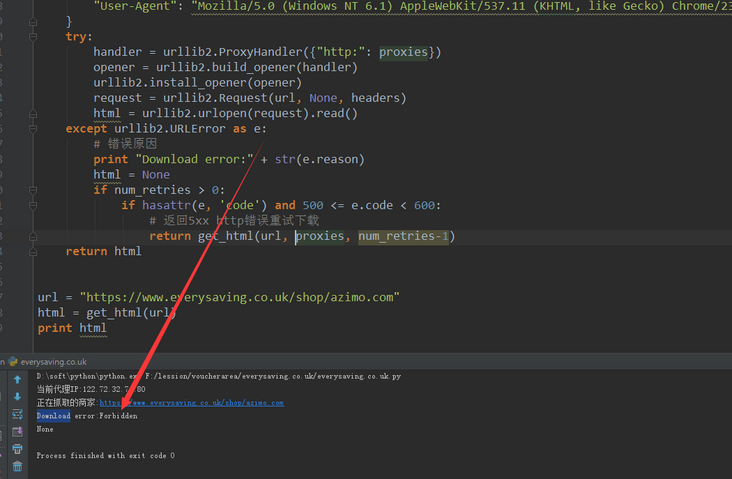

I'm trying to scrape this site with Python, but no data comes back. I added request headers and a proxy IP and it still fails. Could any of you give it a try?

Answer:

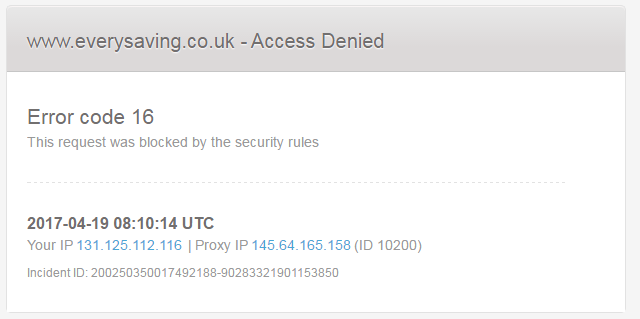

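The screenshot shows what happens when the site is accessed through proxies.

Testing with https://www.17ce.com/ shows that almost every node in mainland China is blocked, with HTTP status 403 returned.

This site's security policy is fairly strict. I suggest using a high-anonymity proxy, a VPN, or a server located in Europe or North America, and lowering your request rate.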
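
To make the "go through a proxy and slow down" advice concrete, here is a minimal Python 3 sketch using only the standard library. The proxy address, the `Throttle` class, and the helper names are my own placeholders, not something from the thread; swap in a proxy you actually control and pick an interval the site tolerates.

```python
import time
import urllib.request

# Hypothetical placeholder: replace with a European/US proxy you control.
PROXY_URL = "http://proxy.example.com:8080"


class Throttle:
    """Enforce a minimum interval (in seconds) between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous request, if any

    def wait(self):
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


def make_opener(proxy_url):
    """Build an opener that routes both http and https requests via the proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


def fetch(opener, throttle, url, timeout=10):
    """Wait for the throttle, then fetch the URL and return (status, body)."""
    throttle.wait()
    with opener.open(url, timeout=timeout) as resp:
        return resp.status, resp.read()
```

Typical use would be one `Throttle(5.0)` shared by every call to `fetch`, so that requests are spaced at least five seconds apart no matter how the calling loop is written.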

Answer:

Capture the traffic with Fiddler, then send exactly what the browser sends.
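
As a rough illustration of replaying the browser's headers, here is a Python 3 sketch with the standard library. The header values below are illustrative only (the User-Agent is the one used elsewhere in this thread); the real set should be copied from your own Fiddler capture, and `build_request` is just a helper name I made up.

```python
import urllib.request

# Illustrative values only: copy the real headers from your own capture.
# Accept-Encoding is left out on purpose, because urllib does not
# decompress gzip responses automatically.
CAPTURED_HEADERS = {
    "User-Agent": ("Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 "
                   "(KHTML, like Gecko) Chrome/31.0.1650.48"),
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
    "Accept-Language": "en-GB,en;q=0.9",
}


def build_request(url, captured_headers):
    """Return a Request carrying every header seen in the browser capture."""
    req = urllib.request.Request(url)
    for name, value in captured_headers.items():
        req.add_header(name, value)
    return req
```

The resulting object can be passed to `urllib.request.urlopen` or to an opener's `open` method. If the site also depends on cookies or on JavaScript-generated tokens, copying headers alone may not be enough.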

Answer:

I can't open this URL directly in a browser either. Is it blocked by the firewall?

Answer:

I can't open it directly, but it loads when I test through a proxy in Singapore.
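
To tell "the server refuses me" (an HTTP 403) apart from "I can't reach the server at all", a small probe like the following may help. It is a Python 3 sketch using only the standard library; `probe` and `describe_failure` are hypothetical helper names, and the proxy URL you pass in is whatever proxy you have available.

```python
import urllib.error
import urllib.request


def describe_failure(exc):
    """Summarise why a request failed."""
    if isinstance(exc, urllib.error.HTTPError):
        # The server answered; 403 means it refused this client or IP.
        return "http %d" % exc.code
    # No HTTP response at all: DNS failure, connection reset, timeout, etc.
    return "unreachable"


def probe(url, proxy_url=None, timeout=10):
    """Return 'ok', 'http <code>', or 'unreachable' for one access path."""
    # An empty mapping disables proxies, including any set in the environment.
    proxies = {"http": proxy_url, "https": proxy_url} if proxy_url else {}
    opener = urllib.request.build_opener(urllib.request.ProxyHandler(proxies))
    try:
        with opener.open(url, timeout=timeout):
            return "ok"
    except OSError as exc:  # URLError and HTTPError are OSError subclasses
        return describe_failure(exc)
```

Calling `probe(url)` and then `probe(url, proxy_url)` gives a quick comparison: "unreachable" directly but "ok" through the proxy points to a network-level block, while "http 403" on both paths suggests the site itself is rejecting the request.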

Answer:

It's blocked by the firewall, so of course you can't scrape it...

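Answer:

When I was building 观点网 I needed to scrape Zhihu and ran into anti-scraping measures, so I had no choice but to scrape a large batch of proxies first. It's written in Python; here is the code: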

    # coding: utf-8
    # Python 2 script: crawls the free proxy listings on kuaidaili.com, tests each
    # proxy against a Baidu Yun endpoint, and stores the working ones in MySQL.
    import json
    import sys
    import urllib2
    import datetime
    import time

    reload(sys)
    sys.setdefaultencoding('utf-8')

    from bs4 import BeautifulSoup
    import MySQLdb as mdb

    DB_HOST = '127.0.0.1'
    DB_USER = 'root'
    DB_PASS = 'root'

    ID = 0                # row cursor into the `visited` table
    ST = 1000             # row cursor into the `proxy` table
    uk = '3758096603'     # Baidu Yun user id used in the test URL
    classify = "inha"     # kuaidaili listing category currently being crawled
    proxy = {u'https': u'118.99.66.106:8080'}


    class ProxyServer:

        def __init__(self):
            self.dbconn = mdb.connect(DB_HOST, DB_USER, DB_PASS, 'test', charset='utf8')
            self.dbconn.autocommit(False)
            self.next_proxy_set = set()   # stored proxies to fall back on
            self.chance = 0               # set when the listing request fails
            self.fail = 0                 # consecutive failed proxy tests
            self.count_errno = 0          # responses seen for the current uk
            self.dbcurr = self.dbconn.cursor()
            self.dbcurr.execute('SET NAMES utf8')

        def get_prxy(self, num):
            global proxy, ID, uk, classify, ST
            while num > 0:
                count = 0
                for page in range(1, 718):
                    if self.chance > 0:
                        # The last listing request failed: load one stored proxy
                        # from the database as a replacement candidate.
                        if ST % 100 == 0:
                            self.dbcurr.execute("select count(*) from proxy")
                            for r in self.dbcurr:
                                count = r[0]
                            if ST > count:
                                ST = 1000
                        # Query parameters must be passed as a tuple.
                        self.dbcurr.execute("select * from proxy where ID=%s", (ST,))
                        results = self.dbcurr.fetchall()
                        for r in results:
                            protocol = r[1]
                            ip = r[2]
                            port = r[3]
                            pro = (protocol, ip + ":" + str(port))
                            if pro not in self.next_proxy_set:
                                self.next_proxy_set.add(pro)
                        self.chance = 0
                        ST += 1

                    proxy_support = urllib2.ProxyHandler(proxy)
                    # opener = urllib2.build_opener(proxy_support, urllib2.HTTPHandler(debuglevel=1))
                    opener = urllib2.build_opener(proxy_support)
                    urllib2.install_opener(opener)

                    # Add headers that imitate a browser, to avoid 403 Forbidden responses.
                    i_headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/31.0.1650.48'}
                    url = 'http://www.kuaidaili.com/free/' + classify + '/' + str(page)
                    html_doc = ""
                    try:
                        req = urllib2.Request(url, headers=i_headers)
                        response = urllib2.urlopen(req, None, 5)
                        html_doc = response.read()
                    except Exception as ex:
                        print "ex=", ex
                        self.chance += 1
                        if len(self.next_proxy_set) > 0:
                            protocol, address = self.next_proxy_set.pop()
                            # urllib2 matches handler keys against the lower-case
                            # scheme of the requested URL, so register both schemes.
                            proxy = {'http': address, 'https': address}
                            print "proxy", proxy
                            print "change proxy success."
                        continue

                    if html_doc != "":
                        soup = BeautifulSoup(html_doc, 'html.parser')
                        # For xici.net.co the table was: soup.find('table', id='ip_list')
                        trs = ""
                        try:
                            trs = soup.find('table').find_all('tr')   # all rows
                        except AttributeError:
                            # No <table> on the page.
                            print "error"
                            continue

                        for tr in trs[1:]:
                            tds = tr.find_all('td')
                            ip = tds[0].text.strip()
                            port = tds[1].text.strip()
                            protocol = tds[3].text.strip()
                            get_time = tds[6].text.strip()
                            check_time = datetime.datetime.strptime(get_time, '%Y-%m-%d %H:%M:%S')
                            time_now = time.strftime("%Y-%m-%d %H:%M:%S", time.localtime())
                            http_ip = 'http://' + ip + ':' + port

                            if protocol == 'HTTP' or protocol == 'HTTPS':
                                content = ""
                                try:
                                    # Register the candidate for both schemes so the
                                    # http test URL below really goes through it.
                                    proxy_support = urllib2.ProxyHandler({'http': http_ip, 'https': http_ip})
                                    opener = urllib2.build_opener(proxy_support, urllib2.HTTPHandler)
                                    urllib2.install_opener(opener)
                                    if self.count_errno > 50:
                                        # Switch to the next user id after 50 responses.
                                        self.dbcurr.execute("select UID from visited where ID=%s", (ID,))
                                        for uid in self.dbcurr:
                                            uk = str(uid[0])
                                        ID += 1
                                        if ID > 50000:
                                            ID = 0
                                        self.count_errno = 0
                                    test_url = "http://yun.baidu.com/pcloud/friend/getfanslist?start=0&query_uk=" + uk + "&limit=24"
                                    print "download:", http_ip + ">>" + uk
                                    req1 = urllib2.Request(test_url, headers=i_headers)
                                    response1 = urllib2.urlopen(req1, None, 5)
                                    content = response1.read()
                                except Exception as ex:
                                    self.fail += 1
                                    if self.fail > 10:
                                        # Too many dead proxies in a row: skip this page.
                                        self.fail = 0
                                        break
                                    continue

                                if content != "":
                                    json_body = json.loads(content)
                                    errno = json_body['errno']
                                    self.count_errno += 1
                                    # errno -55 is treated as a failed check.
                                    if errno != -55:
                                        print "success."
                                        self.dbcurr.execute('select ID from proxy where IP=%s', (ip,))
                                        y = self.dbcurr.fetchone()
                                        if not y:
                                            print 'add', '%s://%s:%s' % (protocol, ip, port)
                                            self.dbcurr.execute(
                                                'INSERT INTO proxy(PROTOCOL,IP,PORT,CHECK_TIME,ACQ_TIME) '
                                                'VALUES(%s,%s,%s,%s,%s)',
                                                (protocol, ip, port, check_time, time_now))
                                            self.dbconn.commit()

                # Move on to the next listing category for the next pass.
                num -= 1
                if num % 4 == 0:
                    classify = "intr"
                if num % 4 == 1:
                    classify = "outha"
                if num % 4 == 2:
                    classify = "outtr"
                if num % 4 == 3:
                    classify = "inha"


    if __name__ == '__main__':
        proSer = ProxyServer()
        proSer.get_prxy(10000)
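
The core idea in the script above, switching to another proxy when a request fails, can be isolated in a few lines. This is a Python 3 sketch with the standard library rather than a port of the script; `open_via_proxy` and `fetch_with_rotation` are names I chose for illustration, and each proxy is assumed to be a plain `"ip:port"` string for an HTTP proxy.

```python
import urllib.request


def open_via_proxy(address, url, headers, timeout):
    """Fetch url through one HTTP proxy given as 'ip:port'; return the body."""
    proxy_url = "http://" + address
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    opener = urllib.request.build_opener(handler)
    req = urllib.request.Request(url, headers=headers or {})
    with opener.open(req, timeout=timeout) as resp:
        return resp.read()


def fetch_with_rotation(url, proxies, headers=None, timeout=5,
                        open_fn=open_via_proxy):
    """Try each proxy in order; return (address, body) for the first success."""
    last_error = None
    for address in proxies:
        try:
            return address, open_fn(address, url, headers, timeout)
        except OSError as exc:  # URLError and HTTPError are OSError subclasses
            last_error = exc
    raise RuntimeError("all proxies failed: %r" % (last_error,))
```

The `open_fn` parameter exists only so the rotation logic can be exercised without network access. In real use you would leave it at its default and pass in the addresses stored in your `proxy` table.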

Source: utcz.com/a/165416.html