关于scrapy爬虫AJAX页面

问题:

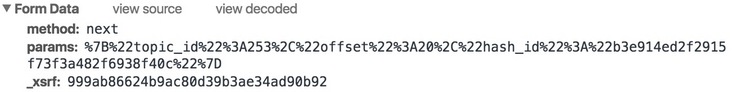

爬取信息页面为:知乎话题广场

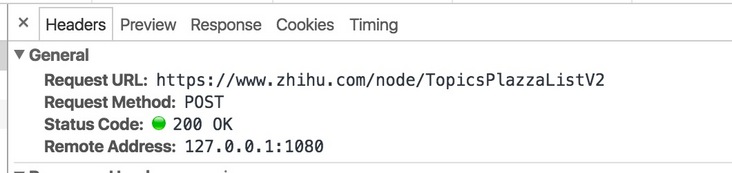

当点击加载的时候,用Chrome 开发者工具,可以看到Network中,实际请求的链接为:

FormData为:

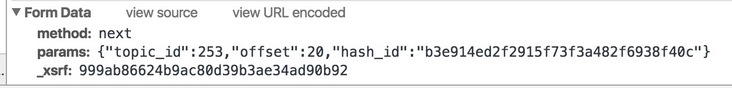

urlencode:

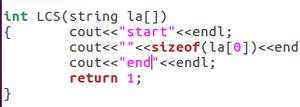

然后我的代码为:

... data = response.css('.zh-general-list::attr(data-init)').extract()

param = json.loads(data[0])

topic_id = param['params']['topic_id']

# hash_id = param['params']['hash_id']

hash_id = ""

for i in range(32):

params = json.dumps({"topic_id": topic_id,"hash_id": hash_id, "offset":i*20})

payload = {"method": "next", "params": params, "_xsrf":_xsrf}

print payload

yield scrapy.Request(

url="https://m.zhihu.com/node/TopicsPlazzaListV2?" + urlencode(payload),

headers=headers,

meta={

"proxy": proxy,

"cookiejar": response.meta["cookiejar"],

},

callback=self.get_topic_url,

)

执行爬虫之后,返回的是:

{'_xsrf': u'161c70f5f7e324b92c2d1a6fd2e80198', 'params': '{"hash_id": "", "offset": 140, "topic_id": 253}', 'method': 'next'}^C^C^C{'_xsrf': u'161c70f5f7e324b92c2d1a6fd2e80198', 'params': '{"hash_id": "", "offset": 160, "topic_id": 253}', 'method': 'next'}

2016-05-09 11:09:36 [scrapy] DEBUG: Retrying <GET https://m.zhihu.com/node/TopicsPlazzaListV2?_xsrf=161c70f5f7e324b92c2d1a6fd2e80198¶ms=%7B%22hash_id%22%3A+%22%22%2C+%22offset%22%3A+80%2C+%22topic_id%22%3A+253%7D&method=next> (failed 1 times): [<twisted.python.failure.Failure OpenSSL.SSL.Error: [('SSL routines', 'SSL3_READ_BYTES', 'ssl handshake failure')]>]

2016-05-09 11:09:36 [scrapy] DEBUG: Retrying <GET https://m.zhihu.com/node/TopicsPlazzaListV2?_xsrf=161c70f5f7e324b92c2d1a6fd2e80198¶ms=%7B%22hash_id%22%3A+%22%22%2C+%22offset%22%3A+40%2C+%22topic_id%22%3A+253%7D&method=next> (failed 1 times): [<twisted.python.failure.Failure OpenSSL.SSL.Error: [('SSL routines', 'SSL3_READ_BYTES', 'ssl handshake failure')]>]

是不是url哪里错了?请指点一二。

回答:

DEBUG: Retrying <GET 这么大个提示看不出来。。。你抓包时的请求是post。scrapy时为什么要用get。。。

回答:

写爬虫应该一步一步来,不能一步到位,不然哪里错了都不知道,一般是先获取到你要的数据,然后再去解析过滤。

你先发送请求看看能不能获取到你想要的数据,如果没有,可能是url不对或者被拦截了

回答:

#coding=utf-8import requests

headers = {'Content-Type':'application/x-www-form-urlencoded; charset=UTF-8'}

url = 'https://www.zhihu.com/node/TopicsPlazzaListV2'

data = 'method=next¶ms=%7B%22topic_id%22%3A833%2C%22offset%22%3A0%2C%22hash_id%22%3A%22%22%7D'

r = requests.post(url, data, headers=headers)

print r.text

回答:

少年教你一个大招。

以上是 关于scrapy爬虫AJAX页面 的全部内容, 来源链接: utcz.com/a/162989.html