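Redis master/slave replication

Introduction

Replication is the master/slave mechanism by which data updated on the master is automatically synchronized to the slaves according to configuration and policy. The master mainly handles writes; the slaves mainly handle reads.

Slave configuration

Configure only the slaves; the master needs no replication-specific configuration.

Format: slaveof <master-ip> <master-port>

Note: a slave that was attached at runtime with the slaveof command loses that setting when it restarts and has to be attached to the master again, unless the slaveof directive is written into its redis.conf.

Configuration details:

Replication 1: one master, multiple slaves (a single Redis installation on one machine, running on three ports)

1. Make three copies of the Redis configuration file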

[root@izm5e2q95pbpe1hh0kkwoiz redis-5.0.3]# mkdir test-master-slave

[root@izm5e2q95pbpe1hh0kkwoiz redis-5.0.3]# cp redis.conf test-master-slave/redis6379.conf

[root@izm5e2q95pbpe1hh0kkwoiz redis-5.0.3]# cp redis.conf test-master-slave/redis6380.conf

[root@izm5e2q95pbpe1hh0kkwoiz redis-5.0.3]# cp redis.conf test-master-slave/redis6381.conf

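Edit the copied configuration files so that each one carries its own port, pid file, log file, and dump file name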

vim redis6379.conf

daemonize yes

port 6379

pidfile /var/run/redis_6379.pid

logfile "6379.log"

dbfilename dump6379.rdb

vim redis6380.conf

daemonize yes

port 6380

pidfile /var/run/redis_6380.pid

logfile "6380.log"

dbfilename dump6380.rdb

vim redis6381.conf

daemonize yes

port 6381

pidfile /var/run/redis_6381.pid

logfile "6381.log"

dbfilename dump6381.rdb
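The three edits above differ only in the port number, so they can be generated instead of typed by hand. The following is a minimal sketch, not the author's method: it writes small files that contain only the five directives shown above, on the assumption that every other setting may fall back to the Redis defaults. The helper names `render_overrides` and `write_configs` are made up for this illustration.

```python
import tempfile
from pathlib import Path

# Ports used in this walkthrough.
PORTS = (6379, 6380, 6381)


def render_overrides(port):
    """Return the per-instance directives from the walkthrough for one port."""
    lines = [
        "daemonize yes",
        f"port {port}",
        f"pidfile /var/run/redis_{port}.pid",
        f'logfile "{port}.log"',
        f"dbfilename dump{port}.rdb",
    ]
    return "\n".join(lines) + "\n"


def write_configs(target_dir, ports=PORTS):
    """Write redis<port>.conf into target_dir for each port; return the paths."""
    target = Path(target_dir)
    target.mkdir(parents=True, exist_ok=True)
    written = []
    for port in ports:
        path = target / f"redis{port}.conf"
        path.write_text(render_overrides(port))
        written.append(path)
    return written


if __name__ == "__main__":
    # Demo in a throwaway directory so nothing on the real system is touched.
    with tempfile.TemporaryDirectory() as tmp:
        for conf in write_configs(tmp):
            print(conf.name)
            print(conf.read_text())
```

Copying the full redis.conf and editing it, as done above, keeps all the stock comments and settings; the generated files are only convenient for a quick local test.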

2. Start the three Redis instances

[root@izm5e2q95pbpe1hh0kkwoiz test-master-slave]# cd /alidata/redis-5.0.3/bin

[root@izm5e2q95pbpe1hh0kkwoiz bin]# pwd

/alidata/redis-5.0.3/bin

[root@izm5e2q95pbpe1hh0kkwoiz bin]# ps aux | grep redis

root 20603 0.1 0.1 156392 2284 ? Ssl 2018 681:54 redis-server *:8686

root 21288 0.0 0.0 112680 984 pts/0 R+ 10:58 0:00 grep --color=auto redis

[root@izm5e2q95pbpe1hh0kkwoiz bin]# redis-server ../test-master-slave/redis6380.conf

[root@izm5e2q95pbpe1hh0kkwoiz bin]# ps aux | grep redis

root 20603 0.1 0.1 156392 2284 ? Ssl 2018 681:54 redis-server *:8686

root 23628 0.0 0.1 153832 2324 ? Ssl 10:58 0:00 redis-server 127.0.0.1:6380

root 24492 0.0 0.0 112680 980 pts/0 R+ 10:58 0:00 grep --color=auto redis

[root@izm5e2q95pbpe1hh0kkwoiz bin]# redis-server ../test-master-slave/redis6381.conf

[root@izm5e2q95pbpe1hh0kkwoiz bin]# ps aux | grep redis

root 20603 0.1 0.1 156392 2284 ? Ssl 2018 681:54 redis-server *:8686

root 23628 0.0 0.1 153832 2324 ? Ssl 10:58 0:00 redis-server 127.0.0.1:6380

root 27142 0.0 0.1 153832 2304 ? Ssl 10:58 0:00 redis-server 127.0.0.1:6381

root 28470 0.0 0.0 112680 984 pts/0 R+ 10:58 0:00 grep --color=auto redis

[root@izm5e2q95pbpe1hh0kkwoiz bin]# redis-server ../test-master-slave/redis6379.conf

[root@izm5e2q95pbpe1hh0kkwoiz redis-5.0.3]# ps aux | grep redis

root 4705 0.1 0.1 153832 2592 ? Ssl 11:11 0:00 redis-server 127.0.0.1:6380

root 20603 0.1 0.1 156392 2296 ? Ssl 2018 681:55 redis-server *:8686

root 22492 0.0 0.1 153832 2296 ? Ssl 11:14 0:00 redis-server 127.0.0.1:6379

root 23990 0.0 0.0 112680 980 pts/0 R+ 11:14 0:00 grep --color=auto redis

root 27142 0.0 0.1 153832 2304 ? Ssl 10:58 0:00 redis-server 127.0.0.1:6381

3. Details (one master and one slave for now)

# Master

127.0.0.1:6379> info replication

# Replication

role:master

connected_slaves:0

master_replid:d9ff48134d9b166cb7f48f4f71a54c9eddc8531c

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:0

second_repl_offset:-1

repl_backlog_active:0

repl_backlog_size:1048576

repl_backlog_first_byte_offset:0

repl_backlog_histlen:0

# The slave before it is configured (it still reports role:master)

127.0.0.1:6380> info replication

# Replication

role:master

connected_slaves:0

master_replid:e06824b502f2bc16c101653a8e36ba954cf35a53

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:0

second_repl_offset:-1

repl_backlog_active:0

repl_backlog_size:1048576

repl_backlog_first_byte_offset:0

repl_backlog_histlen:0

# Configure the slave

127.0.0.1:6380> SLAVEOF 127.0.0.1 6379

OK

127.0.0.1:6380> info replication

# Replication

role:slave

master_host:127.0.0.1

master_port:6379

master_link_status:up

master_last_io_seconds_ago:2

master_sync_in_progress:0

slave_repl_offset:14

slave_priority:100

slave_read_only:1

connected_slaves:0

master_replid:df2697f1f530565d1fb683f209283e319faea59f

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:14

second_repl_offset:-1

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:1

repl_backlog_histlen:14

# State of the master at this point

127.0.0.1:6379> info replication

# Replication

role:master

connected_slaves:1

slave0:ip=127.0.0.1,port=6380,state=online,offset=28,lag=0

master_replid:df2697f1f530565d1fb683f209283e319faea59f

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:28

second_repl_offset:-1

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:1

repl_backlog_histlen:28

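If the master's configuration file sets a password (requirepass) and the slave does not set masterauth, creating the relationship with the slaveof command fails and the slave reports master_link_status:down. The master's bind setting must also include an address the slave can reach (for example bind 0.0.0.0 when the instances run on different hosts); otherwise the link stays at master_link_status:down as well, with or without a password.

Once the master/slave relationship is established, the slave fetches all keys from the master.

If the master goes down, the slave keeps waiting; when the master is running again, the slave reconnects automatically.

If the slave goes down and is restarted, it does not reconnect to the master automatically; the slaveof command has to be run again (unless slaveof is set in the slave's redis.conf).

Keys written on the master cannot be modified on the slave: the slave is read-only (slave_read_only:1).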
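The `info replication` output shown above is a list of `key:value` lines, so the role and link state can be checked programmatically. Below is a minimal sketch that parses such text and decides whether a slave is attached and in sync with its master link; the function names `parse_info` and `slave_is_healthy` are hypothetical, and the sample is abbreviated from the 6380 output above.

```python
def parse_info(text):
    """Parse INFO-style text into a dict, skipping blank and '#' section lines."""
    fields = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition(":")
        fields[key] = value
    return fields


def slave_is_healthy(fields):
    """True when the instance is a slave and its link to the master is up."""
    return (
        fields.get("role") == "slave"
        and fields.get("master_link_status") == "up"
    )


# Abbreviated from the output of 127.0.0.1:6380 after SLAVEOF 127.0.0.1 6379.
SAMPLE = """# Replication
role:slave
master_host:127.0.0.1
master_port:6379
master_link_status:up
slave_read_only:1
"""

if __name__ == "__main__":
    info = parse_info(SAMPLE)
    print(info["master_host"], info["master_port"], slave_is_healthy(info))
```

All values stay strings here; convert fields such as `master_port` with `int()` where a number is needed.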

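Replication 2: chained replication (passing the torch)

Introduction

A slave can itself be the master of the next slave: slaves can also accept connection and synchronization requests from other slaves. Such a slave acts as the master for the next link in the chain, which effectively reduces the replication load on the top-level master.

Re-pointing a slave midway clears its previous data and builds a fresh copy from the new master.

slaveof <new-master-ip> <new-master-port>

Details

# Top-level master

127.0.0.1:6379> info replication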

# Replication

role:master

connected_slaves:1

slave0:ip=127.0.0.1,port=6380,state=online,offset=28,lag=0

master_replid:df2697f1f530565d1fb683f209283e319faea59f

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:28

second_repl_offset:-1

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:1

repl_backlog_histlen:28

# 6380, the slave of master 6379

127.0.0.1:6380> info replication

# Replication

role:slave

master_host:127.0.0.1

master_port:6379

master_link_status:up

master_last_io_seconds_ago:3

master_sync_in_progress:0

slave_repl_offset:2142

slave_priority:100

slave_read_only:1

connected_slaves:1

slave0:ip=127.0.0.1,port=6381,state=online,offset=2142,lag=1

master_replid:df2697f1f530565d1fb683f209283e319faea59f

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:2142

second_repl_offset:-1

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:1

repl_backlog_histlen:2142

# 6381, the slave of slave 6380

127.0.0.1:6381> SLAVEOF 127.0.0.1 6380

OK

127.0.0.1:6381> info replication

# Replication

role:slave

master_host:127.0.0.1

master_port:6380

master_link_status:up

master_last_io_seconds_ago:3

master_sync_in_progress:0

slave_repl_offset:2128

slave_priority:100

slave_read_only:1

connected_slaves:0

master_replid:df2697f1f530565d1fb683f209283e319faea59f

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:2128

second_repl_offset:-1

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:2129

repl_backlog_histlen:0
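In the chain above, 6381 replicates from 6380, which in turn replicates from 6379. As a small illustration of that topology, the sketch below follows the master links from any instance up to the top-level master. The `MASTER_OF` mapping is written by hand from the outputs above and `chain_to_root` is a hypothetical helper, not a Redis API.

```python
def chain_to_root(node, master_of):
    """Follow master links from node up to the instance that has no master."""
    path = [node]
    seen = {node}
    while node in master_of:
        node = master_of[node]
        if node in seen:
            # A loop would mean the instances replicate from each other.
            raise ValueError("replication cycle detected")
        seen.add(node)
        path.append(node)
    return path


# slave port -> master port, as configured in this section.
MASTER_OF = {6380: 6379, 6381: 6380}

if __name__ == "__main__":
    print(chain_to_root(6381, MASTER_OF))
```

Every hop in the returned path is one more link that a write has to travel before it reaches the last slave.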

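Replication 3: promoting a slave to master

Introduction

Running slaveof no one on a slave makes it stop synchronizing with any other instance and turns it into a master. The remaining slaves then run "slaveof <new-master-ip> <new-master-port>" to attach themselves to the new master.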
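The manual promotion described above is a fixed sequence of commands, which the sketch below spells out as a plan: one `SLAVEOF NO ONE` for the promoted instance, then one `SLAVEOF <ip> <port>` for each remaining slave. It only builds the command strings and does not talk to Redis; `promotion_commands` is a hypothetical helper name.

```python
def promotion_commands(new_master, other_slaves):
    """Return (target, command) pairs for manually promoting new_master.

    new_master and each entry of other_slaves are (host, port) tuples.
    """
    host, port = new_master
    # Step 1: the chosen slave stops replicating and becomes a master.
    plan = [(new_master, "SLAVEOF NO ONE")]
    # Step 2: every remaining slave is re-pointed at the new master.
    for slave in other_slaves:
        plan.append((slave, f"SLAVEOF {host} {port}"))
    return plan


if __name__ == "__main__":
    plan = promotion_commands(("127.0.0.1", 6380), [("127.0.0.1", 6381)])
    for (target_host, target_port), command in plan:
        print(f"{target_host}:{target_port}> {command}")
```

Each command would be run in redis-cli against the listed target, in order.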

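How replication works

After a slave starts successfully and connects to the master, it sends a sync command. On receiving it, the master starts a background save process and, at the same time, collects all the data-modifying commands it receives.

When the background process finishes, the master transfers the whole data file to the slave, completing one full synchronization.

Full replication: after receiving the database file, the slave saves it to disk and loads it into memory.

Incremental replication: the master then passes the newly collected modification commands to the slave in order, completing the synchronization.

Whenever a slave connects to a master for the first time, a full synchronization is performed automatically. After a brief disconnection, Redis 2.8 and later first attempt a partial resynchronization (PSYNC) from the replication backlog and fall back to a full synchronization only when that is not possible.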
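The two phases, a full copy followed by a stream of later write commands, can be illustrated with a toy in-memory model. This is only a sketch of the idea and not how Redis is implemented: real Redis ships an RDB file and tracks byte offsets, whereas the hypothetical `ToyMaster` and `ToySlave` classes below use a Python dict and a command count.

```python
class ToyMaster:
    """Holds the data plus an ordered log of every write applied so far."""

    def __init__(self):
        self.data = {}
        self.log = []

    def set(self, key, value):
        self.data[key] = value
        self.log.append((key, value))

    def snapshot(self):
        """Full sync payload: a copy of the data and the log position it covers."""
        return dict(self.data), len(self.log)

    def commands_since(self, offset):
        """Incremental payload: the writes recorded after the given position."""
        return self.log[offset:]


class ToySlave:
    def __init__(self):
        self.data = {}
        self.offset = 0

    def full_sync(self, master):
        # Replace everything with the master's snapshot.
        self.data, self.offset = master.snapshot()

    def incremental_sync(self, master):
        # Replay only the writes that arrived after the snapshot.
        for key, value in master.commands_since(self.offset):
            self.data[key] = value
            self.offset += 1


if __name__ == "__main__":
    master, slave = ToyMaster(), ToySlave()
    master.set("a", 1)
    slave.full_sync(master)
    master.set("b", 2)
    slave.incremental_sync(master)
    print(slave.data, slave.offset)
```

The offset kept by the slave plays the role that slave_repl_offset plays in the info replication output.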

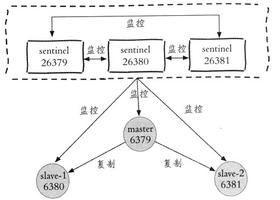

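Sentinel mode (sentinel)

Reference: https://redis.io/topics/sentinel

Introduction

Sentinel is the automated version of promoting a slave to master: it monitors in the background whether the master has failed and, if it has, automatically promotes a slave to master based on a vote.

How to set it up

1. Arrange the topology so that 6379 is the master of 6380 and 6381

2. Create a file named sentinel.conf in the /alidata/redis-5.0.3/test-master-slave directory; spell the name exactly, because the commands below refer to this path

3. Configure the sentinel

sentinel monitor <master-name (your choice)> <master-ip> <master-port> 1

The final number 1 is the quorum: the number of sentinels that must agree that the master is unreachable before it is marked objectively down and a failover is attempted. With a single sentinel, as in this setup, a quorum of 1 is enough.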
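According to the Redis Sentinel documentation, the last argument of `sentinel monitor` is the quorum, meaning how many sentinels must agree that the master is unreachable; actually carrying out the failover additionally needs authorization from a majority of all sentinels. The sketch below expresses those two checks as plain functions. The function names are made up for this illustration and the vote counts are inputs, not values read from a live sentinel.

```python
def objectively_down(down_votes, quorum):
    """The master counts as objectively down once enough sentinels agree."""
    return down_votes >= quorum


def failover_authorized(leader_votes, total_sentinels, quorum):
    """A sentinel may lead the failover with a majority of all sentinels,
    and never with fewer votes than the quorum."""
    majority = total_sentinels // 2 + 1
    return leader_votes >= max(majority, quorum)


if __name__ == "__main__":
    # The single-sentinel setup of this walkthrough: quorum 1, one vote.
    print(objectively_down(1, 1), failover_authorized(1, 1, 1))
    # Five sentinels with quorum 2: two votes mark the master down,
    # but three are needed before a failover can proceed.
    print(objectively_down(2, 2), failover_authorized(2, 5, 2))
```

This is why a single sentinel with quorum 1 can fail over on its own, as the `#quorum 1/1` lines in the sentinel log show.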

4. Start the sentinel

redis-sentinel /alidata/redis-5.0.3/test-master-slave/sentinel.conf

If redis-sentinel is not available, run the following command from the /alidata/redis-5.0.3/bin directory instead:

redis-server ../test-master-slave/sentinel.conf --sentinel

[root@izm5e2q95pbpe1hh0kkwoiz bin]# redis-server ../test-master-slave/sentinel.conf --sentinel

16452:X 10 Jan 2020 15:56:54.511 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

16452:X 10 Jan 2020 15:56:54.511 # Redis version=5.0.3, bits=64, commit=00000000, modified=0, pid=16452, just started

16452:X 10 Jan 2020 15:56:54.511 # Configuration loaded

[Redis ASCII-art startup banner: Redis 5.0.3 (00000000/0) 64 bit, Running in sentinel mode, Port: 26379, PID: 16452, http://redis.io]

16452:X 10 Jan 2020 15:56:54.516 # Sentinel ID is a7d6715b13d229862bd5082e1f0e7734897a1693

16452:X 10 Jan 2020 15:56:54.516 # +monitor master host6379 127.0.0.1 6379 quorum 1

16452:X 10 Jan 2020 15:56:54.517 * +slave slave 127.0.0.1:6380 127.0.0.1 6380 @ host6379 127.0.0.1 6379

16452:X 10 Jan 2020 15:56:54.520 * +slave slave 127.0.0.1:6381 127.0.0.1 6381 @ host6379 127.0.0.1 6379

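5. After the original master goes down, a new master is selected automatically and the remaining slave is re-attached to it. Here 6379 goes down and the failover promotes 6381 to master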

16452:X 10 Jan 2020 15:59:43.676 # +odown master host6379 127.0.0.1 6379 #quorum 1/1

16452:X 10 Jan 2020 15:59:43.676 # +new-epoch 1

16452:X 10 Jan 2020 15:59:43.676 # +try-failover master host6379 127.0.0.1 6379

16452:X 10 Jan 2020 15:59:43.682 # +vote-for-leader a7d6715b13d229862bd5082e1f0e7734897a1693 1

16452:X 10 Jan 2020 15:59:43.682 # +elected-leader master host6379 127.0.0.1 6379

16452:X 10 Jan 2020 15:59:43.682 # +failover-state-select-slave master host6379 127.0.0.1 6379

16452:X 10 Jan 2020 15:59:43.745 # +selected-slave slave 127.0.0.1:6381 127.0.0.1 6381 @ host6379 127.0.0.1 6379

16452:X 10 Jan 2020 15:59:43.745 * +failover-state-send-slaveof-noone slave 127.0.0.1:6381 127.0.0.1 6381 @ host6379 127.0.0.1 6379

16452:X 10 Jan 2020 15:59:43.809 * +failover-state-wait-promotion slave 127.0.0.1:6381 127.0.0.1 6381 @ host6379 127.0.0.1 6379

16452:X 10 Jan 2020 15:59:44.588 # +promoted-slave slave 127.0.0.1:6381 127.0.0.1 6381 @ host6379 127.0.0.1 6379

16452:X 10 Jan 2020 15:59:44.588 # +failover-state-reconf-slaves master host6379 127.0.0.1 6379

16452:X 10 Jan 2020 15:59:44.660 * +slave-reconf-sent slave 127.0.0.1:6380 127.0.0.1 6380 @ host6379 127.0.0.1 6379

16452:X 10 Jan 2020 15:59:45.615 * +slave-reconf-inprog slave 127.0.0.1:6380 127.0.0.1 6380 @ host6379 127.0.0.1 6379

16452:X 10 Jan 2020 15:59:45.615 * +slave-reconf-done slave 127.0.0.1:6380 127.0.0.1 6380 @ host6379 127.0.0.1 6379

16452:X 10 Jan 2020 15:59:45.691 # +failover-end master host6379 127.0.0.1 6379

## Switch to the newly elected master, 6381

16452:X 10 Jan 2020 15:59:45.691 # +switch-master host6379 127.0.0.1 6379 127.0.0.1 6381

16452:X 10 Jan 2020 15:59:45.691 * +slave slave 127.0.0.1:6380 127.0.0.1 6380 @ host6379 127.0.0.1 6381

16452:X 10 Jan 2020 15:59:45.691 * +slave slave 127.0.0.1:6379 127.0.0.1 6379 @ host6379 127.0.0.1 6381

16452:X 10 Jan 2020 16:00:15.768 # +sdown slave 127.0.0.1:6379 127.0.0.1 6379 @ host6379 127.0.0.1 6381

## 6381 is now the master, and 6380 is its only slave

127.0.0.1:6381> info replication

# Replication

role:master

connected_slaves:1

slave0:ip=127.0.0.1,port=6380,state=online,offset=23490630,lag=0

master_replid:9772fad6a5ca29661aeb5e07ca72c013347c5679

master_replid2:df2697f1f530565d1fb683f209283e319faea59f

master_repl_offset:23490630

second_repl_offset:23480570

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:22442055

repl_backlog_histlen:1048576

## 6380 is a slave of 6381

127.0.0.1:6380> info replication

# Replication

role:slave

master_host:127.0.0.1

master_port:6381

master_link_status:up

master_last_io_seconds_ago:1

master_sync_in_progress:0

slave_repl_offset:23491043

slave_priority:100

slave_read_only:1

connected_slaves:0

master_replid:9772fad6a5ca29661aeb5e07ca72c013347c5679

master_replid2:df2697f1f530565d1fb683f209283e319faea59f

master_repl_offset:23491043

second_repl_offset:23480570

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:22442468

repl_backlog_histlen:1048576

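6. When the master that failed first is started again, it is automatically attached to the newly elected master, so the original master becomes a slave

Once the sentinel detects that 6379 is running again, it converts 6379 into a slave of 6381:

16452:X 10 Jan 2020 16:04:28.076 * +convert-to-slave slave 127.0.0.1:6379 127.0.0.1 6379 @ host6379 127.0.0.1 6381

6381 now has 6379 as an additional slave: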

127.0.0.1:6381> info replication

# Replication

role:master

connected_slaves:2

slave0:ip=127.0.0.1,port=6380,state=online,offset=23501237,lag=2

slave1:ip=127.0.0.1,port=6379,state=online,offset=23501237,lag=1

master_replid:9772fad6a5ca29661aeb5e07ca72c013347c5679

master_replid2:df2697f1f530565d1fb683f209283e319faea59f

master_repl_offset:23501237

second_repl_offset:23480570

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:22452662

repl_backlog_histlen:1048576

info replication on 6379:

127.0.0.1:6379> info replication

# Replication

role:slave

master_host:127.0.0.1

master_port:6381

master_link_status:up

master_last_io_seconds_ago:1

master_sync_in_progress:0

slave_repl_offset:23507614

slave_priority:100

slave_read_only:1

connected_slaves:0

master_replid:9772fad6a5ca29661aeb5e07ca72c013347c5679

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:23507614

second_repl_offset:-1

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:23499724

repl_backlog_histlen:7891

7. What happens if the newly elected master goes down as well?

What the sentinel does after detecting that 6381 is down:

16452:X 10 Jan 2020 16:07:53.458 # +sdown master host6379 127.0.0.1 6381

16452:X 10 Jan 2020 16:07:53.458 # +odown master host6379 127.0.0.1 6381 #quorum 1/1

16452:X 10 Jan 2020 16:07:53.458 # +new-epoch 2

16452:X 10 Jan 2020 16:07:53.458 # +try-failover master host6379 127.0.0.1 6381

16452:X 10 Jan 2020 16:07:53.463 # +vote-for-leader a7d6715b13d229862bd5082e1f0e7734897a1693 2

16452:X 10 Jan 2020 16:07:53.463 # +elected-leader master host6379 127.0.0.1 6381

16452:X 10 Jan 2020 16:07:53.463 # +failover-state-select-slave master host6379 127.0.0.1 6381

16452:X 10 Jan 2020 16:07:53.523 # +selected-slave slave 127.0.0.1:6380 127.0.0.1 6380 @ host6379 127.0.0.1 6381

16452:X 10 Jan 2020 16:07:53.523 * +failover-state-send-slaveof-noone slave 127.0.0.1:6380 127.0.0.1 6380 @ host6379 127.0.0.1 6381

16452:X 10 Jan 2020 16:07:53.581 * +failover-state-wait-promotion slave 127.0.0.1:6380 127.0.0.1 6380 @ host6379 127.0.0.1 6381

16452:X 10 Jan 2020 16:07:53.643 # +promoted-slave slave 127.0.0.1:6380 127.0.0.1 6380 @ host6379 127.0.0.1 6381

16452:X 10 Jan 2020 16:07:53.643 # +failover-state-reconf-slaves master host6379 127.0.0.1 6381

16452:X 10 Jan 2020 16:07:53.694 * +slave-reconf-sent slave 127.0.0.1:6379 127.0.0.1 6379 @ host6379 127.0.0.1 6381

16452:X 10 Jan 2020 16:07:54.323 * +slave-reconf-inprog slave 127.0.0.1:6379 127.0.0.1 6379 @ host6379 127.0.0.1 6381

16452:X 10 Jan 2020 16:07:55.333 * +slave-reconf-done slave 127.0.0.1:6379 127.0.0.1 6379 @ host6379 127.0.0.1 6381

16452:X 10 Jan 2020 16:07:55.386 # +failover-end master host6379 127.0.0.1 6381

## Switch the master from 6381 to 6380

16452:X 10 Jan 2020 16:07:55.386 # +switch-master host6379 127.0.0.1 6381 127.0.0.1 6380

16452:X 10 Jan 2020 16:07:55.386 * +slave slave 127.0.0.1:6379 127.0.0.1 6379 @ host6379 127.0.0.1 6380

16452:X 10 Jan 2020 16:07:55.386 * +slave slave 127.0.0.1:6381 127.0.0.1 6381 @ host6379 127.0.0.1 6380

16452:X 10 Jan 2020 16:08:25.418 # +sdown slave 127.0.0.1:6381 127.0.0.1 6381 @ host6379 127.0.0.1 6380

6380 becomes the master, and 6379 is re-pointed to 6380 as its slave:

127.0.0.1:6380> info replication

# Replication

role:master

connected_slaves:1

slave0:ip=127.0.0.1,port=6379,state=online,offset=23512243,lag=0

master_replid:08b69c14d46567b6d0506e84e7c51da49a9661ec

master_replid2:9772fad6a5ca29661aeb5e07ca72c013347c5679

master_repl_offset:23512243

second_repl_offset:23511276

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:22463668

repl_backlog_histlen:1048576

6379 is now a slave of 6380:

127.0.0.1:6379> info replication

# Replication

role:slave

master_host:127.0.0.1

master_port:6380

master_link_status:up

master_last_io_seconds_ago:1

master_sync_in_progress:0

slave_repl_offset:23525130

slave_priority:100

slave_read_only:1

connected_slaves:0

master_replid:08b69c14d46567b6d0506e84e7c51da49a9661ec

master_replid2:9772fad6a5ca29661aeb5e07ca72c013347c5679

master_repl_offset:23525130

second_repl_offset:23511276

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:23499724

repl_backlog_histlen:25407
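Each failover in the sentinel logs above ends with a `+switch-master` line that carries the master name, the old address, and the new address. A client or monitoring script could follow master changes by picking out those lines. The sketch below does that for plain log text; `parse_switch_master` is a hypothetical helper, and the two sample lines are copied from the logs above.

```python
def parse_switch_master(line):
    """Return (name, old_addr, new_addr) for a +switch-master line, else None."""
    marker = "+switch-master "
    idx = line.find(marker)
    if idx == -1:
        return None
    parts = line[idx + len(marker):].split()
    if len(parts) != 5:
        return None
    name, old_ip, old_port, new_ip, new_port = parts
    return name, (old_ip, int(old_port)), (new_ip, int(new_port))


LOG = [
    "16452:X 10 Jan 2020 15:59:45.691 # +switch-master host6379 127.0.0.1 6379 127.0.0.1 6381",
    "16452:X 10 Jan 2020 16:07:53.458 # +sdown master host6379 127.0.0.1 6381",
    "16452:X 10 Jan 2020 16:07:55.386 # +switch-master host6379 127.0.0.1 6381 127.0.0.1 6380",
]

if __name__ == "__main__":
    for entry in LOG:
        event = parse_switch_master(entry)
        if event is not None:
            name, old, new = event
            print(f"{name}: {old[0]}:{old[1]} -> {new[0]}:{new[1]}")
```

Note that the master name stays host6379 throughout: it is the label chosen in sentinel.conf, not the current master's port.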

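Drawbacks of replication

Because all writes are performed on the master first and then propagated to the slaves, synchronization from the master to the slaves lags by some amount. The lag grows when the system is busy, and adding more slaves makes it worse.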
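One way to observe this delay is to compare the master's `master_repl_offset` with the `offset` reported for each slave in the master's `info replication` output. The sketch below computes that difference in bytes from the text output; `slave_lag_bytes` is a hypothetical helper, and the sample is abbreviated from the 6381 output earlier in this article.

```python
def slave_lag_bytes(info_text):
    """Map 'ip:port' of each slave to master_repl_offset minus its offset."""
    master_offset = None
    slave_offsets = {}
    for line in info_text.splitlines():
        key, sep, value = line.strip().partition(":")
        if not sep:
            continue  # blank lines and '# Replication' headers
        if key == "master_repl_offset":
            master_offset = int(value)
        elif key.startswith("slave") and key[5:].isdigit():
            # e.g. slave0:ip=127.0.0.1,port=6380,state=online,offset=28,lag=0
            attrs = dict(item.split("=", 1) for item in value.split(","))
            address = f"{attrs['ip']}:{attrs['port']}"
            slave_offsets[address] = int(attrs["offset"])
    if master_offset is None:
        raise ValueError("master_repl_offset not found")
    return {addr: master_offset - off for addr, off in slave_offsets.items()}


SAMPLE = """# Replication
role:master
connected_slaves:2
slave0:ip=127.0.0.1,port=6380,state=online,offset=23501237,lag=2
slave1:ip=127.0.0.1,port=6379,state=online,offset=23501237,lag=1
master_repl_offset:23501237
"""

if __name__ == "__main__":
    print(slave_lag_bytes(SAMPLE))
```

A value of 0 means the slave has acknowledged everything the master has written so far; a growing value under load is the delay described above.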
