Python Web Scraping: Configuring a Proxy in Scrapy

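When crawling websites, the most common problem is IP-based restriction: many sites run anti-scraping checks and block an IP address that sends too many requests. The best solution is to rotate IP addresses while crawling, i.e. to route requests through proxies.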

Below is how to configure Scrapy to crawl through a proxy.

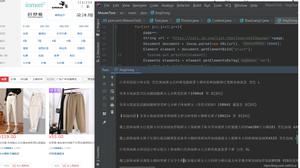

1. Create `middlewares.py` in the Scrapy project package (the same directory as `settings.py`):

```python
# middlewares.py
# base64 is needed ONLY if the proxy you are going to use requires authentication
import base64


# Start your middleware class
class ProxyMiddleware:

    # Override process_request, which Scrapy calls for every outgoing request
    def process_request(self, request, spider):
        # Set the location of the proxy
        request.meta['proxy'] = "http://YOUR_PROXY_IP:PORT"

        # Use the following lines if your proxy requires authentication
        proxy_user_pass = "USERNAME:PASSWORD"

        # Set up HTTP Basic authentication for the proxy.
        # base64.encodestring() was removed in Python 3.9 and appended a trailing
        # newline; b64encode() takes bytes and adds no newline.
        encoded_user_pass = base64.b64encode(proxy_user_pass.encode('utf-8')).decode('ascii')
        request.headers['Proxy-Authorization'] = 'Basic ' + encoded_user_pass
```
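The middleware in step 1 sends every request through one fixed proxy, so on its own it does not rotate IPs. A minimal sketch of rotation, assuming you have a list of proxy URLs: the hypothetical `RotatingProxyMiddleware` below picks a random proxy per request and stores it in `request.meta['proxy']`. Scrapy's `HttpProxyMiddleware` accepts credentials embedded in that URL (`http://username:password@host:port`) and builds the `Proxy-Authorization` header itself, so the manual base64 step is not needed here. `PROXY_POOL` is an assumed custom setting name, not a built-in Scrapy setting.

```python
import random


class RotatingProxyMiddleware:
    """Hypothetical downloader middleware: route each request through a random proxy."""

    def __init__(self, proxies):
        # Proxy URLs such as "http://host:port" or "http://user:pass@host:port"
        self.proxies = list(proxies)

    @classmethod
    def from_crawler(cls, crawler):
        # PROXY_POOL is an assumed custom setting holding a list of proxy URLs
        return cls(crawler.settings.getlist("PROXY_POOL"))

    def process_request(self, request, spider):
        if self.proxies:
            # HttpProxyMiddleware (which must run after this one) reads meta['proxy']
            # and converts any user:pass in the URL into a Proxy-Authorization header
            request.meta["proxy"] = random.choice(self.proxies)
        # Returning None lets Scrapy keep processing the request normally
        return None
```

To try it, register it in `DOWNLOADER_MIDDLEWARES` in place of `ProxyMiddleware` (for example at priority 100) and define `PROXY_POOL` in `settings.py`. Because the choice is made per request, a retried request may go out through a different proxy, which is usually what you want when an IP has been blocked.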

2. Register the middleware in the project settings file (`./pythontab/settings.py`):

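```python
DOWNLOADER_MIDDLEWARES = {
    # Scrapy's built-in proxy middleware (replaces the deprecated scrapy.contrib path)
    'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware': 110,
    # Lower number: process_request runs first, so meta['proxy'] is set in time
    'pythontab.middlewares.ProxyMiddleware': 100,
}
```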
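Why 100 and 110? Scrapy merges `DOWNLOADER_MIDDLEWARES` with its built-in `DOWNLOADER_MIDDLEWARES_BASE` (where `HttpProxyMiddleware` already sits at 750), sorts the entries by their number, and calls `process_request` in ascending order and `process_response` in the reverse order; a value of `None` disables a middleware. The sketch below only models that sort for the two entries of step 2, written with the current module path `scrapy.downloadermiddlewares.httpproxy`; it ignores the base dict and is an illustration, not Scrapy's own code.

```python
# Two entries mirroring step 2; the numbers are priorities
DOWNLOADER_MIDDLEWARES = {
    "scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware": 110,
    "pythontab.middlewares.ProxyMiddleware": 100,
}


def process_request_order(middlewares):
    """Enabled middlewares in the order their process_request hooks run."""
    enabled = [(prio, path) for path, prio in middlewares.items() if prio is not None]
    return [path for prio, path in sorted(enabled)]


def process_response_order(middlewares):
    """process_response hooks run in the opposite direction."""
    return process_request_order(middlewares)[::-1]


if __name__ == "__main__":
    for path in process_request_order(DOWNLOADER_MIDDLEWARES):
        print(path)
```

So `ProxyMiddleware` (100) sets `meta['proxy']` before `HttpProxyMiddleware` (110) reads it. Since the default entry at 750 would also run after 100, listing `HttpProxyMiddleware` explicitly is optional.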

That covers proxy configuration in Scrapy. Source: utcz.com/z/520871.html