Kubernetes Service in Detail: Using Services

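Preparing the lab environment

Before working with Services, use a Deployment to create three pods. Note that the pods must carry the label app=nginx-pod.

Create deployment.yaml with the following content: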

apiVersion: apps/v1
kind: Deployment
metadata:
  name: pc-deployment
  namespace: dev
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx-pod
  template:
    metadata:
      labels:
        app: nginx-pod
    spec:
      containers:
      - name: nginx
        image: nginx:1.17.1
        ports:
        - containerPort: 80
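The labels matter twice: the Deployment's selector must be satisfied by the pod template's labels, and the Services created later find the pods through the same app=nginx-pod label. The following is a minimal Python sketch of that matching rule. The `selector_matches` helper and the inline dictionary are illustrative assumptions, not part of kubectl or of any Kubernetes client library.

```python
def selector_matches(match_labels: dict, labels: dict) -> bool:
    """Return True when every key/value pair in match_labels appears in labels."""
    return all(labels.get(key) == value for key, value in match_labels.items())


# The label-related parts of deployment.yaml, written as a plain dictionary.
deployment = {
    "spec": {
        "selector": {"matchLabels": {"app": "nginx-pod"}},
        "template": {"metadata": {"labels": {"app": "nginx-pod"}}},
    }
}

selector = deployment["spec"]["selector"]["matchLabels"]
pod_labels = deployment["spec"]["template"]["metadata"]["labels"]

# The selector must be satisfied by the template labels.
print(selector_matches(selector, pod_labels))
```

Extra labels on the pod do not break the match; a missing or different value for any selector key does.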

Apply the configuration file

[root@master ~]# vim deployment.yaml
[root@master ~]# kubectl create -f deployment.yaml
deployment.apps/pc-deployment created
[root@master ~]# kubectl get pod -n dev -o wide
NAME                             READY   STATUS    RESTARTS   AGE   IP            NODE    NOMINATED NODE   READINESS GATES
pc-deployment-6696798b78-5d7rh   1/1     Running   0          4s    10.244.2.4    node2   <none>           <none>
pc-deployment-6696798b78-5jbcr   1/1     Running   0          4s    10.244.1.26   node1   <none>           <none>
pc-deployment-6696798b78-wbrfh   1/1     Running   0          4s    10.244.2.3    node2   <none>           <none>

Access nginx through a pod's IP plus container port 80; the request succeeds.

[root@master ~]# curl 10.244.2.4:80
<!DOCTYPE html>
<html>
<head>
<title>Welcome to nginx!</title>

To make the later tests easier to read, overwrite index.html in each of the three nginx pods with that pod's own IP address, so that every response identifies the pod that served it.

# Modify the first pod
[root@master ~]# kubectl exec -it pc-deployment-6696798b78-5d7rh -n dev -- /bin/sh
# echo "10.244.2.4" > /usr/share/nginx/html/index.html
# exit

# Test access
[root@master ~]# curl 10.244.2.4:80
10.244.2.4

# Modify the second pod
[root@master ~]# kubectl exec -it pc-deployment-6696798b78-5jbcr -n dev -- /bin/sh
# echo "10.244.1.26" > /usr/share/nginx/html/index.html
# exit

# Modify the third pod
[root@master ~]# kubectl exec -it pc-deployment-6696798b78-wbrfh -n dev -- /bin/sh
# echo "10.244.2.3" > /usr/share/nginx/html/index.html
# exit

ClusterIP-type Service

Create the file service-clusterip.yaml:

apiVersion: v1
kind: Service
metadata:
  name: service-clusterip
  namespace: dev
spec:
  selector:
    app: nginx-pod
  clusterIP: 10.97.97.97 # Service IP address; if omitted, one is allocated automatically
  type: ClusterIP
  ports:
  - port: 80 # Service port
    targetPort: 80 # pod port

Apply the configuration file

[root@master ~]# vim service-clusterip.yaml
[root@master ~]# kubectl create -f service-clusterip.yaml
service/service-clusterip created
[root@master ~]# kubectl get svc service-clusterip -n dev
NAME                TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)   AGE
service-clusterip   ClusterIP   10.97.97.97   <none>        80/TCP    44s

# View the Service details
[root@master ~]# kubectl describe svc service-clusterip -n dev
Name:              service-clusterip
Namespace:         dev
Labels:            <none>
Annotations:       <none>
Selector:          app=nginx-pod
Type:              ClusterIP
IP:                10.97.97.97
Port:              <unset>  80/TCP
TargetPort:        80/TCP
Endpoints:         10.244.1.26:80,10.244.2.3:80,10.244.2.4:80
Session Affinity:  None
Events:            <none>

# View the ipvs mapping rules
[root@master ~]# ipvsadm -Ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  10.97.97.97:80 rr
  -> 10.244.1.27:80               Masq    1      0          0
  -> 10.244.2.5:80                Masq    1      0          0
  -> 10.244.2.6:80                Masq    1      0          0

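Endpoints

Endpoints is a Kubernetes resource object, stored in etcd, that records the access addresses of all pods backing a Service. It is generated from the selector in the Service's configuration file.

A Service is backed by a group of pods, and those pods are exposed through Endpoints, the set of endpoints that actually implement the service. In other words, the link between a Service and its pods is made through Endpoints.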

[root@master ~]# kubectl get endpoints -n dev -o wide
NAME                ENDPOINTS                                    AGE
service-clusterip   10.244.1.26:80,10.244.2.3:80,10.244.2.4:80   18m
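How the selector turns into the endpoint list above can be modelled in a few lines of Python. This is only a toy model of the relationship, not the controller's actual code: the `Pod` class, the `build_endpoints` function, and the extra `other-app` pod are hypothetical, added to show that non-matching pods are left out.

```python
from dataclasses import dataclass, field


@dataclass
class Pod:
    name: str
    ip: str
    labels: dict = field(default_factory=dict)


def build_endpoints(selector: dict, pods: list, target_port: int) -> list:
    """Return 'ip:port' for every pod whose labels satisfy the selector."""
    matched = [
        pod for pod in pods
        if all(pod.labels.get(key) == value for key, value in selector.items())
    ]
    return sorted(f"{pod.ip}:{target_port}" for pod in matched)


pods = [
    Pod("pc-deployment-6696798b78-5d7rh", "10.244.2.4", {"app": "nginx-pod"}),
    Pod("pc-deployment-6696798b78-5jbcr", "10.244.1.26", {"app": "nginx-pod"}),
    Pod("pc-deployment-6696798b78-wbrfh", "10.244.2.3", {"app": "nginx-pod"}),
    Pod("other-app", "10.244.1.99", {"app": "redis"}),  # hypothetical, not selected
]

print(",".join(build_endpoints({"app": "nginx-pod"}, pods, 80)))
```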

Load distribution policy

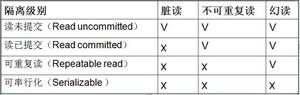

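Requests to a Service are distributed across the backend pods. Kubernetes currently provides two load distribution policies:

- If none is defined, kube-proxy's default policy is used, for example random or round-robin.

- Session persistence based on the client address: all requests from the same client are forwarded to one fixed pod. Enable this mode by adding sessionAffinity: ClientIP to spec.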

# View the ipvs mapping rules
[root@master ~]# ipvsadm -Ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  10.97.97.97:80 rr
  -> 10.244.1.27:80               Masq    1      0          0
  -> 10.244.2.5:80                Masq    1      0          0
  -> 10.244.2.6:80                Masq    1      0          0

# Loop access test
[root@master ~]# while true; do curl 10.97.97.97:80; sleep 5; done;
10.244.1.26
10.244.2.3
10.244.2.4
10.244.1.26
10.244.2.3
10.244.2.4
10.244.1.26
10.244.2.3
10.244.2.4
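The rr scheduler shown by ipvsadm is round-robin, which is why the responses repeat in a fixed order. A toy Python sketch of that rotation follows. It is not ipvs or kube-proxy code, and `round_robin` is an illustrative name.

```python
from itertools import cycle, islice


def round_robin(backends):
    """Return an endless iterator that hands out backends in rotating order."""
    return cycle(backends)


backends = ["10.244.1.26", "10.244.2.3", "10.244.2.4"]

# Six consecutive requests walk the list twice, in the same order each time.
for ip in islice(round_robin(backends), 6):
    print(ip)
```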

Change the distribution policy to sessionAffinity: ClientIP

# Delete the existing Service
[root@master ~]# kubectl delete -f service-clusterip.yaml
service "service-clusterip" deleted

# Edit service-clusterip.yaml
[root@master ~]# vim service-clusterip.yaml
apiVersion: v1
kind: Service
metadata:
  name: service-clusterip
  namespace: dev
spec:
  sessionAffinity: ClientIP
  selector:
    app: nginx-pod
  clusterIP: 10.97.97.97 # Service IP address; if omitted, one is allocated automatically
  type: ClusterIP
  ports:
  - port: 80 # Service port
    targetPort: 80 # pod port

# Recreate the Service
[root@master ~]# kubectl create -f service-clusterip.yaml
service/service-clusterip created

# View the Service; Session Affinity now shows ClientIP
[root@master ~]# kubectl describe svc service-clusterip -n dev
Name:              service-clusterip
Namespace:         dev
Labels:            <none>
Annotations:       <none>
Selector:          app=nginx-pod
Type:              ClusterIP
IP:                10.97.97.97
Port:              <unset>  80/TCP
TargetPort:        80/TCP
Endpoints:         10.244.1.27:80,10.244.2.5:80,10.244.2.6:80
Session Affinity:  ClientIP
Events:            <none>

Check the ipvs mapping rules again. The entry now shows persistent 10800: requests from one client stick to the same backend for 10800 seconds (180 minutes).

[root@master ~]# ipvsadm -Ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  10.97.97.97:80 rr persistent 10800
  -> 10.244.1.27:80               Masq    1      0          0
  -> 10.244.2.5:80                Masq    1      0          0
  -> 10.244.2.6:80                Masq    1      0          0

Run the loop test again; this time every request reaches the same pod.

[root@master ~]# while true; do curl 10.97.97.97:80; sleep 5; done;
10.244.2.4
10.244.2.4
10.244.2.4
10.244.2.4
10.244.2.4
10.244.2.4
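The sticky behaviour can be pictured as a table from client IP to backend with an expiry time. The sketch below is a simplified Python model under stated assumptions: the `ClientIPAffinity` class is hypothetical, new clients are assigned round-robin, and the expiry is refreshed on every request. It does not reproduce the real ipvs persistence logic; only the 10800-second default is taken from the output above.

```python
from itertools import cycle


class ClientIPAffinity:
    """Toy model: remember which backend each client IP was sent to."""

    def __init__(self, backends, timeout_seconds=10800):
        self._next_backend = cycle(backends)  # used only for new or expired clients
        self._timeout = timeout_seconds
        self._sessions = {}  # client IP -> (backend, expiry timestamp)

    def pick(self, client_ip, now):
        entry = self._sessions.get(client_ip)
        if entry is not None and now < entry[1]:
            backend = entry[0]  # session still valid: reuse the same backend
        else:
            backend = next(self._next_backend)  # no session or expired: choose anew
        # Assumption of this model: each request refreshes the expiry.
        self._sessions[client_ip] = (backend, now + self._timeout)
        return backend


lb = ClientIPAffinity(["10.244.1.27", "10.244.2.5", "10.244.2.6"])
# One client, six requests five seconds apart: all served by one backend.
print({lb.pick("192.0.2.10", now=second) for second in range(0, 30, 5)})
```

The client address 192.0.2.10 is a placeholder from the documentation address range, not a value from the lab above.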

Headless-type Service

In some scenarios, developers may not want the load balancing that a Service provides and prefer to control the load-balancing policy themselves. For this case Kubernetes offers the headless Service. Such a Service is not assigned a ClusterIP; to reach it, clients must look up the Service's domain name.

Create service-headliness.yaml:

apiVersion: v1
kind: Service
metadata:
  name: service-headliness
  namespace: dev
spec:
  selector:
    app: nginx-pod
  clusterIP: None # setting clusterIP to None creates a headless Service
  type: ClusterIP
  ports:
  - port: 80
    targetPort: 80

Apply the configuration file

[root@master ~]# vim service-headliness.yaml
[root@master ~]# kubectl create -f service-headliness.yaml
service/service-headliness created
[root@master ~]# kubectl get svc service-headliness -n dev
NAME                 TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
service-headliness   ClusterIP   None         <none>        80/TCP    31s
[root@master ~]# kubectl describe svc service-headliness -n dev
Name:              service-headliness
Namespace:         dev
Labels:            <none>
Annotations:       <none>
Selector:          app=nginx-pod
Type:              ClusterIP
IP:                None
Port:              <unset>  80/TCP
TargetPort:        80/TCP
Endpoints:         10.244.1.27:80,10.244.2.5:80,10.244.2.6:80
Session Affinity:  None
Events:            <none>

Check the DNS resolution settings inside a pod

[root@master ~]# kubectl exec -it pc-deployment-6696798b78-5d7rh -n dev -- /bin/sh
# cat /etc/resolv.conf
nameserver 10.96.0.10
search dev.svc.cluster.local svc.cluster.local cluster.local
options ndots:5
# exit
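The search line and ndots:5 decide which full names a pod tries when it looks up a short name such as service-headliness. The Python sketch below is a simplified model of the usual resolver search-list rule (names with fewer dots than ndots go through the search domains first). The `candidate_names` function is illustrative; real resolvers have more options than this model covers.

```python
def candidate_names(name, search, ndots):
    """Return the fully qualified names a resolver would try, in order."""
    if name.endswith("."):
        return [name]  # already absolute: no search-list expansion
    expanded = [f"{name}.{domain}." for domain in search]
    absolute = name + "."
    if name.count(".") >= ndots:
        return [absolute] + expanded  # enough dots: try the name as-is first
    return expanded + [absolute]  # too few dots: walk the search list first


search = ["dev.svc.cluster.local", "svc.cluster.local", "cluster.local"]

for candidate in candidate_names("service-headliness", search, ndots=5):
    print(candidate)
```

With these settings the first candidate for the short name is service-headliness.dev.svc.cluster.local., which is why pods in the dev namespace can use the bare Service name.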

# Query by domain name
[root@master ~]# dig @10.96.0.10 service-headliness.dev.svc.cluster.local

; <<>> DiG 9.11.4-P2-RedHat-9.11.4-26.P2.el7_9.5 <<>> @10.96.0.10 service-headliness.dev.svc.cluster.local
; (1 server found)
;; global options: +cmd
;; Got answer:
;; WARNING: .local is reserved for Multicast DNS
;; You are currently testing what happens when an mDNS query is leaked to DNS
;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 40115
;; flags: qr aa rd; QUERY: 1, ANSWER: 3, AUTHORITY: 0, ADDITIONAL: 1
;; WARNING: recursion requested but not available

;; OPT PSEUDOSECTION:
; EDNS: version: 0, flags:; udp: 4096
;; QUESTION SECTION:
;service-headliness.dev.svc.cluster.local. IN A

;; ANSWER SECTION:
service-headliness.dev.svc.cluster.local. 30 IN A 10.244.2.6
service-headliness.dev.svc.cluster.local. 30 IN A 10.244.1.27
service-headliness.dev.svc.cluster.local. 30 IN A 10.244.2.5

;; Query time: 334 msec
;; SERVER: 10.96.0.10#53(10.96.0.10)
;; WHEN: Thu Aug 12 11:26:01 CST 2021
;; MSG SIZE  rcvd: 237
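Because the answer contains the pod IPs themselves, the client decides which one to use. One possible client-side policy, sketched in Python below, hashes a request key so that the same key always lands on the same pod. The policy, the `pick_backend` function, and the key "user-42" are assumptions chosen for illustration; the source does not prescribe any particular strategy.

```python
import hashlib


def pick_backend(records, key):
    """Choose one pod IP for a key, independent of the order DNS returned them."""
    if not records:
        raise ValueError("no A records returned for the headless Service")
    ordered = sorted(records)  # DNS may rotate the answer order between queries
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(ordered)
    return ordered[index]


# A records taken from the dig answer section.
records = ["10.244.2.6", "10.244.1.27", "10.244.2.5"]

print(pick_backend(records, "user-42"))
```

The mapping changes whenever pods are added or removed, so this fits cases where occasional reshuffling is acceptable.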

NodePort-type Service

In the previous examples, the Service IP address was reachable only from inside the cluster. To expose a Service outside the cluster, use another Service type, NodePort. NodePort works by mapping the Service port to a port on the node, so the Service can then be reached at NodeIP:NodePort.

Create service-nodeport.yaml with the following content:

apiVersion: v1
kind: Service
metadata:
  name: service-nodeport
  namespace: dev
spec:
  selector:
    app: nginx-pod
  type: NodePort # Service type
  ports:
  - port: 80
    nodePort: 30002 # node port to bind (default range: 30000-32767); if omitted, one is allocated automatically
    targetPort: 80

Apply the configuration file

[root@master ~]# vim service-nodeport.yaml
[root@master ~]# kubectl create -f service-nodeport.yaml
service/service-nodeport created
[root@master ~]# kubectl get svc service-nodeport -n dev
NAME               TYPE       CLUSTER-IP     EXTERNAL-IP   PORT(S)        AGE
service-nodeport   NodePort   10.103.29.82   <none>        80:30002/TCP   14s

Open a browser on your computer and visit the node, using the master host's IP address plus port 30002.

The page loads successfully.
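The NodeIP:NodePort address and the default port range can be captured in a small helper. This Python sketch is only illustrative: `node_port_url` is a hypothetical function, and 192.0.2.10 is a placeholder from the documentation address range, since the master's real IP is not given here.

```python
# Default NodePort range, 30000-32767 inclusive.
NODE_PORT_RANGE = range(30000, 32768)


def node_port_url(node_ip, node_port, scheme="http"):
    """Build the external URL for a NodePort Service, rejecting out-of-range ports."""
    if node_port not in NODE_PORT_RANGE:
        raise ValueError(
            f"nodePort {node_port} is outside the default range 30000-32767"
        )
    return f"{scheme}://{node_ip}:{node_port}"


# Placeholder node IP; substitute the address of any cluster node.
print(node_port_url("192.0.2.10", 30002))  # prints http://192.0.2.10:30002
```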

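LoadBalancer-type Service

LoadBalancer is very similar to NodePort: both aim to expose a port to the outside. The difference is that LoadBalancer adds a load-balancing device outside the cluster, which requires support from the external environment. Requests that external clients send to this device are load-balanced and then forwarded into the cluster.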

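ExternalName-type Service

An ExternalName-type Service brings a service from outside the cluster into it. The externalName attribute specifies the address of the external service, and accessing this Service from inside the cluster then reaches that external service.

Create service-externalname.yaml with the following content:

apiVersion: v1
kind: Service
metadata:
  name: service-externalname
  namespace: dev
spec:
  type: ExternalName # Service type
  externalName: www.baidu.com # external DNS name; use a host name here, because an IP-like string is treated as a DNS name, not as an address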

Apply the configuration file

[root@master ~]# vim service-externalname.yaml
[root@master ~]# kubectl create -f service-externalname.yaml
service/service-externalname created
[root@master ~]# kubectl get svc service-externalname -n dev
NAME                   TYPE           CLUSTER-IP   EXTERNAL-IP     PORT(S)   AGE
service-externalname   ExternalName   <none>       www.baidu.com   <none>    17s

DNS resolution

[root@master ~]# dig @10.96.0.10 service-externalname.dev.svc.cluster.local

; <<>> DiG 9.11.4-P2-RedHat-9.11.4-26.P2.el7_9.5 <<>> @10.96.0.10 service-externalname.dev.svc.cluster.local
; (1 server found)
;; global options: +cmd
;; Got answer:
;; WARNING: .local is reserved for Multicast DNS
;; You are currently testing what happens when an mDNS query is leaked to DNS
;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 27636
;; flags: qr aa rd; QUERY: 1, ANSWER: 4, AUTHORITY: 0, ADDITIONAL: 1
;; WARNING: recursion requested but not available

;; OPT PSEUDOSECTION:
; EDNS: version: 0, flags:; udp: 4096
;; QUESTION SECTION:
;service-externalname.dev.svc.cluster.local. IN A

;; ANSWER SECTION:
service-externalname.dev.svc.cluster.local. 30 IN CNAME www.baidu.com.
www.baidu.com.          30 IN CNAME www.a.shifen.com.
www.a.shifen.com.       30 IN A 39.156.66.14
www.a.shifen.com.       30 IN A 39.156.66.18

;; Query time: 31 msec
;; SERVER: 10.96.0.10#53(10.96.0.10)
;; WHEN: Thu Aug 12 11:58:31 CST 2021
;; MSG SIZE  rcvd: 247
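The answer section shows that an ExternalName Service is just a CNAME: the Service name points at www.baidu.com, which points at another alias, which finally has A records. The Python sketch below walks that chain over the records copied from the answer. The `resolve` function and its `max_hops` guard are illustrative and are not how a real DNS resolver is implemented.

```python
def resolve(name, records, max_hops=8):
    """Follow CNAME records from name until A records are found."""
    current = name
    for _ in range(max_hops):
        entries = records.get(current, [])
        addresses = [value for rtype, value in entries if rtype == "A"]
        if addresses:
            return addresses
        aliases = [value for rtype, value in entries if rtype == "CNAME"]
        if not aliases:
            return []  # dead end: neither an address nor an alias
        current = aliases[0]
    raise RuntimeError("CNAME chain too long or circular")


# Records copied from the dig answer section.
records = {
    "service-externalname.dev.svc.cluster.local.": [("CNAME", "www.baidu.com.")],
    "www.baidu.com.": [("CNAME", "www.a.shifen.com.")],
    "www.a.shifen.com.": [("A", "39.156.66.14"), ("A", "39.156.66.18")],
}

print(resolve("service-externalname.dev.svc.cluster.local.", records))
```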
