伙伴系统分配内存

内核中常用的分配物理内存页面的接口函数是alloc_pages(),用于分配一个或者多个连续的物理页面,分配页面个数只能是2个整数次幂。相比于多次分配离散的物理页面,分配连续的物理页面有利于提高系统内存的碎片化,内存碎片化是一个很让人头疼的问题。alloc_pages()函数有两个,一个是分配gfp_mask,另一个是分配阶数order。

[include/linux/gfp.h]#define alloc_pages(gfp_mask, order)

alloc_pages_node(numa_node_id(), gfp_mask, order)

分配掩码是非常重要的参数,它同样定义在gfp.h头文件中。

/* Plain integer GFP bitmasks. Do not use this directly. */#define ___GFP_DMA 0x01u

#define ___GFP_HIGHMEM 0x02u

#define ___GFP_DMA32 0x04u

#define ___GFP_MOVABLE 0x08u

#define ___GFP_WAIT 0x10u

#define ___GFP_HIGH 0x20u

#define ___GFP_IO 0x40u

#define ___GFP_FS 0x80u

#define ___GFP_COLD 0x100u

#define ___GFP_NOWARN 0x200u

#define ___GFP_REPEAT 0x400u

#define ___GFP_NOFAIL 0x800u

#define ___GFP_NORETRY 0x1000u

#define ___GFP_MEMALLOC 0x2000u

#define ___GFP_COMP 0x4000u

#define ___GFP_ZERO 0x8000u

#define ___GFP_NOMEMALLOC 0x10000u

#define ___GFP_HARDWALL 0x20000u

#define ___GFP_THISNODE 0x40000u

#define ___GFP_RECLAIMABLE 0x80000u

#define ___GFP_NOTRACK 0x200000u

#define ___GFP_NO_KSWAPD 0x400000u

#define ___GFP_OTHER_NODE 0x800000u

#define ___GFP_WRITE 0x1000000u

分配掩码是在内核代码中分成两类,一类叫zone modifiers,另一类是action modifiers。zone modifiers指定从哪一个zone中分配所需的页面。zone modifiers由分配掩码的最低4位来定义,分别是___GFP_DMA、___GFP_HIGHMEM、___GFP_DMA32和___GFP_MOVABLE。

/* If the above are modified, __GFP_BITS_SHIFT may need updating *//*

* GFP bitmasks..

*

* Zone modifiers (see linux/mmzone.h - low three bits)

*

* Do not put any conditional on these. If necessary modify the definitions

* without the underscores and use them consistently. The definitions here may

* be used in bit comparisons.

*/

#define __GFP_DMA ((__force gfp_t)___GFP_DMA)

#define __GFP_HIGHMEM ((__force gfp_t)___GFP_HIGHMEM)

#define __GFP_DMA32 ((__force gfp_t)___GFP_DMA32)

#define __GFP_MOVABLE ((__force gfp_t)___GFP_MOVABLE) /* Page is movable */

#define GFP_ZONEMASK (__GFP_DMA|__GFP_HIGHMEM|__GFP_DMA32|__GFP_MOVABLE)

action modifiers并不限制从哪个内存域中分配内存,但会改变分配行为,其定义如下:

/* * Action modifiers - doesn"t change the zoning

*

* __GFP_REPEAT: Try hard to allocate the memory, but the allocation attempt

* _might_ fail. This depends upon the particular VM implementation.

*

* __GFP_NOFAIL: The VM implementation _must_ retry infinitely: the caller

* cannot handle allocation failures. This modifier is deprecated and no new

* users should be added.

*

* __GFP_NORETRY: The VM implementation must not retry indefinitely.

*

* __GFP_MOVABLE: Flag that this page will be movable by the page migration

* mechanism or reclaimed

*/

#define __GFP_WAIT ((__force gfp_t)___GFP_WAIT) /* Can wait and reschedule? */

#define __GFP_HIGH ((__force gfp_t)___GFP_HIGH) /* Should access emergency pools? */

#define __GFP_IO ((__force gfp_t)___GFP_IO) /* Can start physical IO? */

#define __GFP_FS ((__force gfp_t)___GFP_FS) /* Can call down to low-level FS? */

#define __GFP_COLD ((__force gfp_t)___GFP_COLD) /* Cache-cold page required */

#define __GFP_NOWARN ((__force gfp_t)___GFP_NOWARN) /* Suppress page allocation failure warning */

#define __GFP_REPEAT ((__force gfp_t)___GFP_REPEAT) /* See above */

#define __GFP_NOFAIL ((__force gfp_t)___GFP_NOFAIL) /* See above */

#define __GFP_NORETRY ((__force gfp_t)___GFP_NORETRY) /* See above */

#define __GFP_MEMALLOC ((__force gfp_t)___GFP_MEMALLOC)/* Allow access to emergency reserves */

#define __GFP_COMP ((__force gfp_t)___GFP_COMP) /* Add compound page metadata */

#define __GFP_ZERO ((__force gfp_t)___GFP_ZERO) /* Return zeroed page on success */

#define __GFP_NOMEMALLOC ((__force gfp_t)___GFP_NOMEMALLOC) /* Don"t use emergency reserves.

* This takes precedence over the

* __GFP_MEMALLOC flag if both are

* set

*/

#define __GFP_HARDWALL ((__force gfp_t)___GFP_HARDWALL) /* Enforce hardwall cpuset memory allocs */

#define __GFP_THISNODE ((__force gfp_t)___GFP_THISNODE)/* No fallback, no policies */

#define __GFP_RECLAIMABLE ((__force gfp_t)___GFP_RECLAIMABLE) /* Page is reclaimable */

#define __GFP_NOTRACK ((__force gfp_t)___GFP_NOTRACK) /* Don"t track with kmemcheck */

#define __GFP_NO_KSWAPD ((__force gfp_t)___GFP_NO_KSWAPD)

#define __GFP_OTHER_NODE ((__force gfp_t)___GFP_OTHER_NODE) /* On behalf of other node */

#define __GFP_WRITE ((__force gfp_t)___GFP_WRITE) /* Allocator intends to dirty page */

上述这些标志位,我们在后续代码中遇到时再详细介绍。

下面是GFP_KERNEL为例,为看理想情况下alloc_pages()函数是如何分配出物理内存的。

[分配物理内存的例子]page = alloc_pages(GFP_KERNEL, order);

GFP_KERNEL分配掩码定义在gfp.h头文件上,是一个分配掩码的组合。常用的分配掩码组合如下:

/* This equals 0, but use constants in case they ever change */#define GFP_NOWAIT (GFP_ATOMIC & ~__GFP_HIGH)

/* GFP_ATOMIC means both !wait (__GFP_WAIT not set) and use emergency pool */

#define GFP_ATOMIC (__GFP_HIGH)

#define GFP_NOIO (__GFP_WAIT)

#define GFP_NOFS (__GFP_WAIT | __GFP_IO)

#define GFP_KERNEL (__GFP_WAIT | __GFP_IO | __GFP_FS)

#define GFP_TEMPORARY (__GFP_WAIT | __GFP_IO | __GFP_FS |

__GFP_RECLAIMABLE)

#define GFP_USER (__GFP_WAIT | __GFP_IO | __GFP_FS | __GFP_HARDWALL)

#define GFP_HIGHUSER (GFP_USER | __GFP_HIGHMEM)

#define GFP_HIGHUSER_MOVABLE (GFP_HIGHUSER | __GFP_MOVABLE)

#define GFP_IOFS (__GFP_IO | __GFP_FS)

#define GFP_TRANSHUGE (GFP_HIGHUSER_MOVABLE | __GFP_COMP |

__GFP_NOMEMALLOC | __GFP_NORETRY | __GFP_NOWARN |

__GFP_NO_KSWAPD)

所以GFP_KERNEL分配掩码包含了__GFP_WAIT、__GFP_IO、__GFP_FS这三个标志位,换算成十六进制0xd0;

alloc_pages()最终调用__alloc_pages_nodemask()函数,它是伙伴系统的核心函数;

/* * This is the "heart" of the zoned buddy allocator.

*/

struct page *

__alloc_pages_nodemask(gfp_t gfp_mask, unsigned int order,

struct zonelist *zonelist, nodemask_t *nodemask)

{

struct zoneref *preferred_zoneref;

struct page *page = NULL;

unsigned int cpuset_mems_cookie;

int alloc_flags = ALLOC_WMARK_LOW|ALLOC_CPUSET|ALLOC_FAIR;

gfp_t alloc_mask; /* The gfp_t that was actually used for allocation */

struct alloc_context ac = {

.high_zoneidx = gfp_zone(gfp_mask),

.nodemask = nodemask,

.migratetype = gfpflags_to_migratetype(gfp_mask),

};

struct alloc_context数据结构是伙伴系统分配函数中用于保存相关参数的数据结构。gfp_zone()函数从分配掩码中计算出zone的zoneidx,并存放high_zoneidx成员中。

static inline enum zone_type gfp_zone(gfp_t flags){

enum zone_type z;

int bit = (__force int) (flags & GFP_ZONEMASK);

z = (GFP_ZONE_TABLE >> (bit * ZONES_SHIFT)) &

((1 << ZONES_SHIFT) - 1);

VM_BUG_ON((GFP_ZONE_BAD >> bit) & 1);

return z;

}

gfp_zone()函数会用到GFP_ZONEMASK、GFP_ZONE_TABLE和ZONES_SHIFT等宏。它们的定义如下:

#define GFP_ZONEMASK (__GFP_DMA|__GFP_HIGHMEM|__GFP_DMA32|__GFP_MOVABLE)#define GFP_ZONE_TABLE (

(ZONE_NORMAL << 0 * ZONES_SHIFT)

| (OPT_ZONE_DMA << ___GFP_DMA * ZONES_SHIFT)

| (OPT_ZONE_HIGHMEM << ___GFP_HIGHMEM * ZONES_SHIFT)

| (OPT_ZONE_DMA32 << ___GFP_DMA32 * ZONES_SHIFT)

| (ZONE_NORMAL << ___GFP_MOVABLE * ZONES_SHIFT)

| (OPT_ZONE_DMA << (___GFP_MOVABLE | ___GFP_DMA) * ZONES_SHIFT)

| (ZONE_MOVABLE << (___GFP_MOVABLE | ___GFP_HIGHMEM) * ZONES_SHIFT)

| (OPT_ZONE_DMA32 << (___GFP_MOVABLE | ___GFP_DMA32) * ZONES_SHIFT)

)

#if MAX_NR_ZONES < 2

#define ZONES_SHIFT 0

#elif MAX_NR_ZONES <= 2

#define ZONES_SHIFT 1

#elif MAX_NR_ZONES <= 4

#define ZONES_SHIFT 2

GFP_ZONEMASK是分配掩码的低4位,在ARM Vexprss平台上,只有ZONE_NORMAL和ZONE_HIGHMEM这两个zone,但是计算__MAX_NR_ZONES需要加上ZONE_MOVABLE,所以MAX_NR_ZONES等于3,这里ZONE_SHIFT等于2,那么GFP_ZONE_TABLE计算结果等于0x200010。

在上述例子中,以GFP_KERNEL分配掩码(0xd0)为参数代入gfp_zone()函数中,最终结果为0,即high_zoneidx为0。

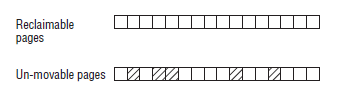

另外__alloc_pages_nodemask()第15行代码中的gfpflags_to_migratetype()函数把gfp_mask分配掩码转换成MIGRATE_TYPES类型是MIGRATE_UNMOVABLE;如果分配掩码为GFP_HIGHUSER_MOVABLE,那么MIGRATE_TYPES类型是MIGRATE_MOVABLE。

/* Convert GFP flags to their corresponding migrate type */static inline int gfpflags_to_migratetype(const gfp_t gfp_flags)

{

WARN_ON((gfp_flags & GFP_MOVABLE_MASK) == GFP_MOVABLE_MASK);

if (unlikely(page_group_by_mobility_disabled))

return MIGRATE_UNMOVABLE;

/* Group based on mobility */

return (((gfp_flags & __GFP_MOVABLE) != 0) << 1) |

((gfp_flags & __GFP_RECLAIMABLE) != 0);

}

继续回到__alloc_pages_nodemask()函数中。

[__alloc_pages_nodemask]retry_cpuset:

cpuset_mems_cookie = read_mems_allowed_begin();

/* We set it here, as __alloc_pages_slowpath might have changed it */

ac.zonelist = zonelist;

/* The preferred zone is used for statistics later */

preferred_zoneref = first_zones_zonelist(ac.zonelist, ac.high_zoneidx,

ac.nodemask ? : &cpuset_current_mems_allowed,

&ac.preferred_zone);

if (!ac.preferred_zone)

goto out;

ac.classzone_idx = zonelist_zone_idx(preferred_zoneref);

/* First allocation attempt */

alloc_mask = gfp_mask|__GFP_HARDWALL;

page = get_page_from_freelist(alloc_mask, order, alloc_flags, &ac);

if (unlikely(!page)) {

/*

* Runtime PM, block IO and its error handling path

* can deadlock because I/O on the device might not

* complete.

*/

alloc_mask = memalloc_noio_flags(gfp_mask);

page = __alloc_pages_slowpath(alloc_mask, order, &ac);

}

if (kmemcheck_enabled && page)

kmemcheck_pagealloc_alloc(page, order, gfp_mask);

trace_mm_page_alloc(page, order, alloc_mask, ac.migratetype);

out:

/*

* When updating a task"s mems_allowed, it is possible to race with

* parallel threads in such a way that an allocation can fail while

* the mask is being updated. If a page allocation is about to fail,

* check if the cpuset changed during allocation and if so, retry.

*/

if (unlikely(!page && read_mems_allowed_retry(cpuset_mems_cookie)))

goto retry_cpuset;

return page;

首先get_page_from_freelist()会去尝试分配物理页面,如果这里分配失败,就会调用到__alloc_pages_slowpath()函数,这个函数会处理许多特殊的场景。这里假设在理想情况下,get_page_from_freelist()能分配成功;

/* * get_page_from_freelist goes through the zonelist trying to allocate

* a page.

*/

static struct page *

get_page_from_freelist(gfp_t gfp_mask, unsigned int order, int alloc_flags,

const struct alloc_context *ac)

{

struct zonelist *zonelist = ac->zonelist;

struct zoneref *z;

struct page *page = NULL;

struct zone *zone;

nodemask_t *allowednodes = NULL;/* zonelist_cache approximation */

int zlc_active = 0; /* set if using zonelist_cache */

int did_zlc_setup = 0; /* just call zlc_setup() one time */

bool consider_zone_dirty = (alloc_flags & ALLOC_WMARK_LOW) &&

(gfp_mask & __GFP_WRITE);

int nr_fair_skipped = 0;

bool zonelist_rescan;

zonelist_scan:

zonelist_rescan = false;

/*

* Scan zonelist, looking for a zone with enough free.

* See also __cpuset_node_allowed() comment in kernel/cpuset.c.

*/

for_each_zone_zonelist_nodemask(zone, z, zonelist, ac->high_zoneidx,

ac->nodemask) {

get_page_from_freelist()函数首先需要先判断可以从哪一个zone来分配内存。for_each_zone_zonelist_nodemask宏扫描内存节点中的zonelist去查找合适分配内存的zone。

/** * for_each_zone_zonelist_nodemask - helper macro to iterate over valid zones in a zonelist at or below a given zone index and within a nodemask

* @zone - The current zone in the iterator

* @z - The current pointer within zonelist->zones being iterated

* @zlist - The zonelist being iterated

* @highidx - The zone index of the highest zone to return

* @nodemask - Nodemask allowed by the allocator

*

* This iterator iterates though all zones at or below a given zone index and

* within a given nodemask

*/

#define for_each_zone_zonelist_nodemask(zone, z, zlist, highidx, nodemask)

for (z = first_zones_zonelist(zlist, highidx, nodemask, &zone);

zone;

z = next_zones_zonelist(++z, highidx, nodemask),

zone = zonelist_zone(z))

for_each_zone_zonelist_nodemask首先通过first_zones_zonelist()从给定的zoneidx开始查找,这个给定的zoneidx就是highidx,之前通过gfp_zone()函数转换得来的。

/** * first_zones_zonelist - Returns the first zone at or below highest_zoneidx within the allowed nodemask in a zonelist

* @zonelist - The zonelist to search for a suitable zone

* @highest_zoneidx - The zone index of the highest zone to return

* @nodes - An optional nodemask to filter the zonelist with

* @zone - The first suitable zone found is returned via this parameter

*

* This function returns the first zone at or below a given zone index that is

* within the allowed nodemask. The zoneref returned is a cursor that can be

* used to iterate the zonelist with next_zones_zonelist by advancing it by

* one before calling.

*/

static inline struct zoneref *first_zones_zonelist(struct zonelist *zonelist,

enum zone_type highest_zoneidx,

nodemask_t *nodes,

struct zone **zone)

{

struct zoneref *z = next_zones_zonelist(zonelist->_zonerefs,

highest_zoneidx, nodes);

*zone = zonelist_zone(z);

return z;

}

first_zones_zonelist()函数会调用next_zones_zonelist()函数来计算zoneref,最后返回zone数据结构;

struct zoneref *next_zones_zonelist(struct zoneref *z, enum zone_type highest_zoneidx,

nodemask_t *nodes)

{

/*

* Find the next suitable zone to use for the allocation.

* Only filter based on nodemask if it"s set

*/

if (likely(nodes == NULL))

while (zonelist_zone_idx(z) > highest_zoneidx)

z++;

else

while (zonelist_zone_idx(z) > highest_zoneidx ||

(z->zone && !zref_in_nodemask(z, nodes)))

z++;

return z;

}

计算zone的核心函数在next_zones_zonelist()函数中,这里highest_zoneidx是gfp_zone()函数计算分配掩码得来。zonelist有一个zoneref数组,zoneref数据结构里有一个成员zone指针会指向zone数据结构,还有一个zone_index成员指向zone的编号。zone在系统处理时会初始化这个数组,具体函数在build_zonelists_node()中。在ARM Vexpress平台中,zone类型、zoneref[]数组和zoneidx的关系如下:

ZONE_HIGHMEM __zonerefs[0]->zone_index=1ZONE_NORMAL __zonerefs[1]->zone_index=0

zonerefs[0]表示ZONE_HIGHMEM,其zone编号zone_index的值为1;zonerefs[1]表示ZONE_NORMAL,其zone的编号zone_index为0。也就是说,基于zone的设计思想是:分配物理页面时会优先考虑ZONE_HIGHMEM,因为ZONE_HIGHMEM在zonelist中排在ZONE_NORMAL前面;

回到我们之前的例子,gfp_zone(GFP_KERNEL)函数返回0,即highest_zoneidx为0,而这个内存节点的第一个zone是ZONE_HIGHMEM,其zone编号zone_index的值为1.因此在next_zones_zonelist()中,z++,最终first_zones_zonelist()函数会返回ZONE_NORMAL。在for_each_zone_zonelist_nodemask()遍历过程中也只能遍历ZONE_NORMAL这一个zone了。

再举一个例子,分配掩码GFP_HIGHUSER_MOVABLE,GFP_HIGHUSER_MOVEABLE包含了__GFP_HIGHMEM,那么next_zones_zonelist()函数返回哪个zone呢?

GFP_HIGHUSER_MOVABLE的值为0x200da,那么gfp_zone(GFP_HIGHUSER_MOVABLE)函数等于2,即highest_zoneidx为2,而这个内存节点的第一个ZONE_HIGHME,其zone编号zone_index的值为1;

在

first_zones_zonelist()函数中,由于第一个zone的zone_index值小于highest_zoneidx,因此会返回ZONE_HIGHMEM。在

for_each_zone_zonelist_nodemask()函数中,next_zones_zonelist(++z, highidx, nodemask)依然会返回ZONE_NORMAL;因此这里会遍历ZONE_HIGHMEM和ZONE_NORMAL,这两个zone,但是会先遍历ZONE_HIGHMEM,然后才是ZONE_NORMAL。

要正确理解

for_each_zone_zonelist_nodemask()这个宏的行为,需要理解如下两个方面:- highest_zoneidx是怎么计算得来的,即如何解析分配掩码,这是gfp_zone()函数的职责。

- 每个内存节点都有一个struct pglist_data数据结构,其成员node_zonelists是一个struct zonelist数据结构,zonelist中包含了struct zoneref __zonerefs[]数组来描述这些zone。其中ZONE_HIGHMEM排在前面,并且

_zonerefs[0]->zone_index=1,ZONE_NORMAL排在后面,且 _zonerefs[1]->zone_index=0;

上述这些设计让人感觉复杂,但是这是正确理解以zone为基础的物理页面分配机制的基石。(说实话zone的分配实在是奇妙~)

在

__alloc_page_nodemask()的第24行代码调用first_zones_zonelist(),计算出preferred_zoneref并且保存到ac.classzone_idx变量中,该变量在kswapd内核线程中还会用到。例如以GFP_KERNEL为分配掩码,preferred_zone指的是ZONE_NORMAL,ac.classzone_idx的值为0;回到get_page_from_freelist()函数中,for_each_zone_zonelist_nodemask()找到了接下来可以从哪些zone中分配内存,下面做一些必要的检查;

[get_page_from_freelist()]....

if (cpusets_enabled() &&

(alloc_flags & ALLOC_CPUSET) &&

!cpuset_zone_allowed(zone, gfp_mask))

continue;

/*

* Distribute pages in proportion to the individual

* zone size to ensure fair page aging. The zone a

* page was allocated in should have no effect on the

* time the page has in memory before being reclaimed.

*/

if (alloc_flags & ALLOC_FAIR) {

if (!zone_local(ac->preferred_zone, zone))

break;

if (test_bit(ZONE_FAIR_DEPLETED, &zone->flags)) {

nr_fair_skipped++;

continue;

}

}

/*

* When allocating a page cache page for writing, we

* want to get it from a zone that is within its dirty

* limit, such that no single zone holds more than its

* proportional share of globally allowed dirty pages.

* The dirty limits take into account the zone"s

* lowmem reserves and high watermark so that kswapd

* should be able to balance it without having to

* write pages from its LRU list.

*

* This may look like it could increase pressure on

* lower zones by failing allocations in higher zones

* before they are full. But the pages that do spill

* over are limited as the lower zones are protected

* by this very same mechanism. It should not become

* a practical burden to them.

*

* XXX: For now, allow allocations to potentially

* exceed the per-zone dirty limit in the slowpath

* (ALLOC_WMARK_LOW unset) before going into reclaim,

* which is important when on a NUMA setup the allowed

* zones are together not big enough to reach the

* global limit. The proper fix for these situations

* will require awareness of zones in the

* dirty-throttling and the flusher threads.

*/

if (consider_zone_dirty && !zone_dirty_ok(zone))

continue;

.....

下面代码用于检测当前zone的watermark水位是否充足。

[get_page_from_freelist()]...

mark = zone->watermark[alloc_flags & ALLOC_WMARK_MASK];

if (!zone_watermark_ok(zone, order, mark,

ac->classzone_idx, alloc_flags)) {

...

ret = zone_reclaim(zone, gfp_mask, order);

switch (ret) {

case ZONE_RECLAIM_NOSCAN:

/* did not scan */

continue;

case ZONE_RECLAIM_FULL:

/* scanned but unreclaimable */

continue;

default:

/* did we reclaim enough */

if (zone_watermark_ok(zone, order, mark,

ac->classzone_idx, alloc_flags))

goto try_this_zone;

/*

* Failed to reclaim enough to meet watermark.

* Only mark the zone full if checking the min

* watermark or if we failed to reclaim just

* 1<<order pages or else the page allocator

* fastpath will prematurely mark zones full

* when the watermark is between the low and

* min watermarks.

*/

if (((alloc_flags & ALLOC_WMARK_MASK) == ALLOC_WMARK_MIN) ||

ret == ZONE_RECLAIM_SOME)

goto this_zone_full;

continue;

}

}

try_this_zone:

page = buffered_rmqueue(ac->preferred_zone, zone, order,

gfp_mask, ac->migratetype);

if (page) {

if (prep_new_page(page, order, gfp_mask, alloc_flags))

goto try_this_zone;

return page;

}

...

zone数据结构中有一个成员watermark记录各种水位的情况。系统定义了3种水位,分别是

WMARK_MIN、WMARK_LOW和WMARK_HIGH。watermark水位的计算在__setup_per_zone_wmarks()函数中。[mm/page_alloc.c]static void __setup_per_zone_wmarks(void)

{

unsigned long pages_min = min_free_kbytes >> (PAGE_SHIFT - 10);

unsigned long lowmem_pages = 0;

struct zone *zone;

unsigned long flags;

/* Calculate total number of !ZONE_HIGHMEM pages */

for_each_zone(zone) {

if (!is_highmem(zone))

lowmem_pages += zone->managed_pages;

}

for_each_zone(zone) {

u64 tmp;

spin_lock_irqsave(&zone->lock, flags);

tmp = (u64)pages_min * zone->managed_pages;

do_div(tmp, lowmem_pages);

if (is_highmem(zone)) {

/*

* __GFP_HIGH and PF_MEMALLOC allocations usually don"t

* need highmem pages, so cap pages_min to a small

* value here.

*

* The WMARK_HIGH-WMARK_LOW and (WMARK_LOW-WMARK_MIN)

* deltas controls asynch page reclaim, and so should

* not be capped for highmem.

*/

unsigned long min_pages;

min_pages = zone->managed_pages / 1024;

min_pages = clamp(min_pages, SWAP_CLUSTER_MAX, 128UL);

zone->watermark[WMARK_MIN] = min_pages;

} else {

/*

* If it"s a lowmem zone, reserve a number of pages

* proportionate to the zone"s size.

*/

zone->watermark[WMARK_MIN] = tmp;

}

zone->watermark[WMARK_LOW] = min_wmark_pages(zone) + (tmp >> 2);

zone->watermark[WMARK_HIGH] = min_wmark_pages(zone) + (tmp >> 1);

__mod_zone_page_state(zone, NR_ALLOC_BATCH,

high_wmark_pages(zone) - low_wmark_pages(zone) -

atomic_long_read(&zone->vm_stat[NR_ALLOC_BATCH]));

setup_zone_migrate_reserve(zone);

spin_unlock_irqrestore(&zone->lock, flags);

}

/* update totalreserve_pages */

calculate_totalreserve_pages();

}

计算watermark水位用到min_free_kbytes这个值,它是在系统启动时通过系统空闲页面的数量计算的,具体计算在

init_per_zone_wmark_min()这个函数中。另外系统起来之后也可以通过sysfs来设置,节点在/proc/sys/vm/min_free_kbytes。计算watermark水位的公式不算复杂,最后结果保存在每个zone的watermark数组中,后续伙伴系统和kswapd内核线程中用到;回到get_page_from_freelist()函数,这里会读取WMARK_LOW水位的值到变量mark中,这里zone_watermark_ok()函数判断当前zone的空闲页面是否满足WMARK_LOW水位。

[get_page_from_freelist->zone_watermark_ok->__zone_watermark_ok]/*

* Return true if free pages are above "mark". This takes into account the order

* of the allocation.

*/

static bool __zone_watermark_ok(struct zone *z, unsigned int order,

unsigned long mark, int classzone_idx, int alloc_flags,

long free_pages)

{

/* free_pages may go negative - that"s OK */

long min = mark;

int o;

long free_cma = 0;

free_pages -= (1 << order) - 1;

if (alloc_flags & ALLOC_HIGH)

min -= min / 2;

if (alloc_flags & ALLOC_HARDER)

min -= min / 4;

#ifdef CONFIG_CMA

/* If allocation can"t use CMA areas don"t use free CMA pages */

if (!(alloc_flags & ALLOC_CMA))

free_cma = zone_page_state(z, NR_FREE_CMA_PAGES);

#endif

if (free_pages - free_cma <= min + z->lowmem_reserve[classzone_idx])

return false;

for (o = 0; o < order; o++) {

/* At the next order, this order"s pages become unavailable */

free_pages -= z->free_area[o].nr_free << o;

/* Require fewer higher order pages to be free */

min >>= 1;

if (free_pages <= min)

return false;

}

return true;

}

参数z表示要判断的zone,order是要分配的内存的阶数,mark是要检查的水位。通常分配物理内存页面的内核路径是检查WMARK_LOW水位,而页面回收kswapd内核线程则是检查WMARK_HIGH水位,这会导致一个内存节点各个zone的页面老化速度不一致的问题,为了解决这个问题,内核提出了许多的诡异的补丁,这个问题可以参见之后的内容。

__zone_watermark_ok()函数首先判断zone的空闲页面是否小于某个水位值和zone的最低保留值(lowmem_reserve)之和。返回true表示空闲页面在某个水位在上,否则返回false;回到get_page_from_freelist()函数中,当判断当前zone的空闲页面低于WMARK_LOW水位,会调用zone_reclaim()函数来回收页面。我们这里假设zone_watermark_ok()判断空闲页面充沛,接下来就会调用buffered_rmqueue()函数从伙伴系统中分配物理页面。

[__alloc_pages_nodemask()->get_page_from_freelist()->buffered_rmqueue()]/*

* Allocate a page from the given zone. Use pcplists for order-0 allocations.

*/

static inline

struct page *buffered_rmqueue(struct zone *preferred_zone,

struct zone *zone, unsigned int order,

gfp_t gfp_flags, int migratetype)

{

unsigned long flags;

struct page *page;

bool cold = ((gfp_flags & __GFP_COLD) != 0);

if (likely(order == 0)) {

struct per_cpu_pages *pcp;

struct list_head *list;

local_irq_save(flags);

pcp = &this_cpu_ptr(zone->pageset)->pcp;

list = &pcp->lists[migratetype];

if (list_empty(list)) {

pcp->count += rmqueue_bulk(zone, 0,

pcp->batch, list,

migratetype, cold);

if (unlikely(list_empty(list)))

goto failed;

}

if (cold)

page = list_entry(list->prev, struct page, lru);

else

page = list_entry(list->next, struct page, lru);

list_del(&page->lru);

pcp->count--;

} else {

if (unlikely(gfp_flags & __GFP_NOFAIL)) {

/*

* __GFP_NOFAIL is not to be used in new code.

*

* All __GFP_NOFAIL callers should be fixed so that they

* properly detect and handle allocation failures.

*

* We most definitely don"t want callers attempting to

* allocate greater than order-1 page units with

* __GFP_NOFAIL.

*/

WARN_ON_ONCE(order > 1);

}

spin_lock_irqsave(&zone->lock, flags);

page = __rmqueue(zone, order, migratetype);

spin_unlock(&zone->lock);

if (!page)

goto failed;

__mod_zone_freepage_state(zone, -(1 << order),

get_freepage_migratetype(page));

}

__mod_zone_page_state(zone, NR_ALLOC_BATCH, -(1 << order));

if (atomic_long_read(&zone->vm_stat[NR_ALLOC_BATCH]) <= 0 &&

!test_bit(ZONE_FAIR_DEPLETED, &zone->flags))

set_bit(ZONE_FAIR_DEPLETED, &zone->flags);

__count_zone_vm_events(PGALLOC, zone, 1 << order);

zone_statistics(preferred_zone, zone, gfp_flags);

local_irq_restore(flags);

VM_BUG_ON_PAGE(bad_range(zone, page), page);

return page;

failed:

local_irq_restore(flags);

return NULL;

}

这里根据order数值兵分两路:一路是order等于0 的情况,也就是分配一个物理页面时,从zone->per_cpu_pageset列表中分配;另一路order大于0的情况,就从伙伴系统中分配。我们只关注order大于0 的情况,它最终会调用到__rmqueue_smallest()函数。

[get_page_from_freelist()->buffered_rmqueue()->buffered_rmqueue->__rmqueue()->__rmqueue_smallest()]/*

* Go through the free lists for the given migratetype and remove

* the smallest available page from the freelists

*/

static inline

struct page *__rmqueue_smallest(struct zone *zone, unsigned int order,

int migratetype)

{

unsigned int current_order;

struct free_area *area;

struct page *page;

/* Find a page of the appropriate size in the preferred list */

for (current_order = order; current_order < MAX_ORDER; ++current_order) {

area = &(zone->free_area[current_order]);

if (list_empty(&area->free_list[migratetype]))

continue;

page = list_entry(area->free_list[migratetype].next,

struct page, lru);

list_del(&page->lru);

rmv_page_order(page);

area->nr_free--;

expand(zone, page, order, current_order, area, migratetype);

set_freepage_migratetype(page, migratetype);

return page;

}

return NULL;

}

在__rmqueue_smallest()函数中,首先从order开始查找zone中的空闲链表。如果zone的当前order对应的空闲区free_area中相应的migratetype类型的链表里没有空闲链表,那么就会查找下一级order。

为什么会这样?因为在系统启动时,空闲页面会尽可能分配到MAX_ORDER-1的链表中,这个可以在系统刚起来之后,通过"cat /proc/pagetypeinfo"命令可以看出端倪。当找到某个order的空闲区中对应的mirgratetype类型的空闲链表中有空闲内存块时,就会从一个内存块摘下来,然后摘用expand()函数来切“蛋糕”。因为通常摘下来的内存块会比需要的内存大,切完之后需要把剩下来的内存块重新放回伙伴系统中。

expand()函数就是实现“切蛋糕”的功能。这里的参数high就是current_order,通常是current_order要比需求的order要大。每比较一次,area减一,相当于退了一级order,最后通过list_add把剩下的内存块添加到低一级的空闲链表中。

[get_page_from_freelist()->buffered_rmqueue()->buffered_rmqueue->__rmqueue()->__rmqueue_smallest()->expand()]/*

* The order of subdivision here is critical for the IO subsystem.

* Please do not alter this order without good reasons and regression

* testing. Specifically, as large blocks of memory are subdivided,

* the order in which smaller blocks are delivered depends on the order

* they"re subdivided in this function. This is the primary factor

* influencing the order in which pages are delivered to the IO

* subsystem according to empirical testing, and this is also justified

* by considering the behavior of a buddy system containing a single

* large block of memory acted on by a series of small allocations.

* This behavior is a critical factor in sglist merging"s success.

*

* -- nyc

*/

static inline void expand(struct zone *zone, struct page *page,

int low, int high, struct free_area *area,

int migratetype)

{

unsigned long size = 1 << high;

while (high > low) {

area--;

high--;

size >>= 1;

VM_BUG_ON_PAGE(bad_range(zone, &page[size]), &page[size]);

if (IS_ENABLED(CONFIG_DEBUG_PAGEALLOC) &&

debug_guardpage_enabled() &&

high < debug_guardpage_minorder()) {

/*

* Mark as guard pages (or page), that will allow to

* merge back to allocator when buddy will be freed.

* Corresponding page table entries will not be touched,

* pages will stay not present in virtual address space

*/

set_page_guard(zone, &page[size], high, migratetype);

continue;

}

list_add(&page[size].lru, &area->free_list[migratetype]);

area->nr_free++;

set_page_order(&page[size], high);

}

}

所需要的页面分配成功之后,__rmqueue()函数返回到这个内存块的起始页面struct page数据结构。回到buffered_rmqueue()函数,最后还需要利用zone_statistics()函数做一些统计数据的计算。

回到get_page_from_freelist()函数,最后还要通过prep_new_page()函数做一些有趣的检查,才能最终出厂。

[__alloc_page_nodemask()->get_page_from_freelist()->prep_new_page()->check_new_page()]/*

* This page is about to be returned from the page allocator

*/

static inline int check_new_page(struct page *page)

{

const char *bad_reason = NULL;

unsigned long bad_flags = 0;

if (unlikely(page_mapcount(page)))

bad_reason = "nonzero mapcount";

if (unlikely(page->mapping != NULL))

bad_reason = "non-NULL mapping";

if (unlikely(atomic_read(&page->_count) != 0))

bad_reason = "nonzero _count";

if (unlikely(page->flags & PAGE_FLAGS_CHECK_AT_PREP)) {

bad_reason = "PAGE_FLAGS_CHECK_AT_PREP flag set";

bad_flags = PAGE_FLAGS_CHECK_AT_PREP;

}

#ifdef CONFIG_MEMCG

if (unlikely(page->mem_cgroup))

bad_reason = "page still charged to cgroup";

#endif

if (unlikely(bad_reason)) {

bad_page(page, bad_reason, bad_flags);

return 1;

}

return 0;

}

check_new_page()函数主要做如下的检查:

- 刚分配的页面struct page的_macpcount计数应该为0。

- 这是page->mapping为NULL。

- 判断这是page的_count是否为0。注意alloc_page()分配的 _count为1,但这里为0,因为这个函数之后还调用set_page_refcounted()->set_page_count(),把 _count设置为1;

- 检查PAGE_FLAGS_CHECK_AT_PREP标志位,这个flag在free_page时已经清除了,而这时该flag被设置,说明分配过程中有问题。

上述检查都通过后,我们分配的页面就合格了,可以出厂了,页面page便开启了属于它的精彩生命周期。

以上是 伙伴系统分配内存 的全部内容, 来源链接: utcz.com/z/516494.html