[Original] (13) Linux Memory Management: vma/malloc/mmap

Background

Read the fucking source code! --By Lu Xun

A picture is worth a thousand words. --By Gorky

Notes:

- Kernel version: 4.14

- ARM64 processor, Cortex-A53, dual-core

- Tools: Source Insight 3.5, Visio

1. Overview

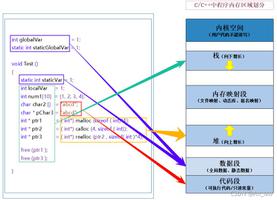

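This article looks at the address space of a user-space process, mainly covering:

- vma

- malloc

- mmap

The code segment, data segment, bss segment and so on that we commonly see in a process address space are each just a region of that address space. Linux calls such a region a Virtual Memory Area (VMA) and describes it with struct vm_area_struct.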
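
To look at these areas in a live process, one option is to read /proc/self/maps, where each line describes one mapping. The following is a minimal user-space sketch, assuming a Linux system with /proc mounted; the helper name count_mappings is made up for this illustration.

```c
#include <stdio.h>
#include <string.h>

/*
 * Count the mappings listed in /proc/self/maps (one per line).
 * If print is non-zero, echo each range with its permissions and size.
 * Returns -1 if the file cannot be opened.
 */
int count_mappings(int print)
{
    FILE *fp = fopen("/proc/self/maps", "r");
    char line[512];
    int at_line_start = 1;
    int count = 0;

    if (!fp)
        return -1;

    while (fgets(line, sizeof(line), fp)) {
        size_t len = strlen(line);
        int is_start = at_line_start;
        unsigned long start, end;
        char perms[5];

        /* A very long line arrives in several chunks; parse only the first. */
        at_line_start = (len > 0 && line[len - 1] == '\n');
        if (!is_start)
            continue;

        /* Line format: start-end perms offset dev inode [path] */
        if (sscanf(line, "%lx-%lx %4s", &start, &end, perms) != 3)
            continue;

        if (print)
            printf("%016lx-%016lx %s (%lu KiB)\n",
                   start, end, perms, (end - start) / 1024);
        count++;
    }

    fclose(fp);
    return count;
}
```

Calling count_mappings(1) from a small test program prints the ranges for the code, data, heap, library and stack regions of that program.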

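Memory allocation and mapping both start by reserving a range of virtual addresses in the address space, an operation that is closely tied to VMAs, so this article analyzes vma, malloc and mmap together.

Let's start exploring.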

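2. Data structures

Two structures are mainly involved: struct mm_struct and struct vm_area_struct.

struct mm_struct describes everything related to a process's address space and is referenced from the process descriptor (task_struct->mm). The key fields are annotated in the comments below.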

struct mm_struct {

struct vm_area_struct *mmap; /* list of VMAs */ // head of the linked list of VMA objects

struct rb_root mm_rb; // root of the red-black tree of VMA objects

u64 vmacache_seqnum; /* per-thread vmacache */

#ifdef CONFIG_MMU

unsigned long (*get_unmapped_area) (struct file *filp,

unsigned long addr, unsigned long len,

unsigned long pgoff, unsigned long flags); // method that searches the process address space for a free linear address range

#endif

unsigned long mmap_base; /* base of mmap area */

unsigned long mmap_legacy_base; /* base of mmap area in bottom-up allocations */

#ifdef CONFIG_HAVE_ARCH_COMPAT_MMAP_BASES

/* Base adresses for compatible mmap() */

unsigned long mmap_compat_base;

unsigned long mmap_compat_legacy_base;

#endif

unsigned long task_size; /* size of task vm space */

unsigned long highest_vm_end; /* highest vma end address */

pgd_t * pgd; // points to the page global directory

/**

* @mm_users: The number of users including userspace.

*

* Use mmget()/mmget_not_zero()/mmput() to modify. When this drops

* to 0 (i.e. when the task exits and there are no other temporary

* reference holders), we also release a reference on @mm_count

* (which may then free the &struct mm_struct if @mm_count also

* drops to 0).

*/

atomic_t mm_users; // user count (users sharing this address space)

/**

* @mm_count: The number of references to &struct mm_struct

* (@mm_users count as 1).

*

* Use mmgrab()/mmdrop() to modify. When this drops to 0, the

* &struct mm_struct is freed.

*/

atomic_t mm_count; // reference count on the mm_struct itself

atomic_long_t nr_ptes; /* PTE page table pages */ // number of page-table pages used by the process

#if CONFIG_PGTABLE_LEVELS > 2

atomic_long_t nr_pmds; /* PMD page table pages */

#endif

int map_count; /* number of VMAs */

spinlock_t page_table_lock; /* Protects page tables and some counters */

struct rw_semaphore mmap_sem;

struct list_head mmlist; /* List of maybe swapped mm's. These are globally strung

* together off init_mm.mmlist, and are protected

* by mmlist_lock

*/

unsigned long hiwater_rss; /* High-watermark of RSS usage */

unsigned long hiwater_vm; /* High-water virtual memory usage */

unsigned long total_vm; /* Total pages mapped */ // number of pages in the process address space

unsigned long locked_vm; /* Pages that have PG_mlocked set */ // locked pages, which cannot be swapped out

unsigned long pinned_vm; /* Refcount permanently increased */

unsigned long data_vm; /* VM_WRITE & ~VM_SHARED & ~VM_STACK */ // number of pages in data mappings

unsigned long exec_vm; /* VM_EXEC & ~VM_WRITE & ~VM_STACK */ // number of pages in executable mappings

unsigned long stack_vm; /* VM_STACK */ // number of pages in the user-mode stack

unsigned long def_flags;

unsigned long start_code, end_code, start_data, end_data; // addresses of the code segment, data segment, etc.

unsigned long start_brk, brk, start_stack; // heap and stack addresses: start_stack is the start of the user-mode stack, brk is the current end of the heap

unsigned long arg_start, arg_end, env_start, env_end; // addresses of the command-line arguments and environment variables

unsigned long saved_auxv[AT_VECTOR_SIZE]; /* for /proc/PID/auxv */

/*

* Special counters, in some configurations protected by the

* page_table_lock, in other configurations by being atomic.

*/

struct mm_rss_stat rss_stat;

struct linux_binfmt *binfmt;

cpumask_var_t cpu_vm_mask_var;

/* Architecture-specific MM context */

mm_context_t context;

unsigned long flags; /* Must use atomic bitops to access the bits */

struct core_state *core_state; /* coredumping support */

#ifdef CONFIG_MEMBARRIER

atomic_t membarrier_state;

#endif

#ifdef CONFIG_AIO

spinlock_t ioctx_lock;

struct kioctx_table __rcu *ioctx_table;

#endif

#ifdef CONFIG_MEMCG

/*

* "owner" points to a task that is regarded as the canonical

* user/owner of this mm. All of the following must be true in

* order for it to be changed:

*

* current == mm->owner

* current->mm != mm

* new_owner->mm == mm

* new_owner->alloc_lock is held

*/

struct task_struct __rcu *owner;

#endif

struct user_namespace *user_ns;

/* store ref to file /proc/<pid>/exe symlink points to */

struct file __rcu *exe_file;

#ifdef CONFIG_MMU_NOTIFIER

struct mmu_notifier_mm *mmu_notifier_mm;

#endif

#if defined(CONFIG_TRANSPARENT_HUGEPAGE) && !USE_SPLIT_PMD_PTLOCKS

pgtable_t pmd_huge_pte; /* protected by page_table_lock */

#endif

#ifdef CONFIG_CPUMASK_OFFSTACK

struct cpumask cpumask_allocation;

#endif

#ifdef CONFIG_NUMA_BALANCING

/*

* numa_next_scan is the next time that the PTEs will be marked

* pte_numa. NUMA hinting faults will gather statistics and migrate

* pages to new nodes if necessary.

*/

unsigned long numa_next_scan;

/* Restart point for scanning and setting pte_numa */

unsigned long numa_scan_offset;

/* numa_scan_seq prevents two threads setting pte_numa */

int numa_scan_seq;

#endif

/*

* An operation with batched TLB flushing is going on. Anything that

* can move process memory needs to flush the TLB when moving a

* PROT_NONE or PROT_NUMA mapped page.

*/

atomic_t tlb_flush_pending;

#ifdef CONFIG_ARCH_WANT_BATCHED_UNMAP_TLB_FLUSH

/* See flush_tlb_batched_pending() */

bool tlb_flush_batched;

#endif

struct uprobes_state uprobes_state;

#ifdef CONFIG_HUGETLB_PAGE

atomic_long_t hugetlb_usage;

#endif

struct work_struct async_put_work;

#if IS_ENABLED(CONFIG_HMM)

/* HMM needs to track a few things per mm */

struct hmm *hmm;

#endif

} __randomize_layout;
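
Several of these counters can be observed from user space. As far as I can tell from the 4.14 sources, /proc/<pid>/status reports total_vm as VmSize, hiwater_vm as VmPeak, locked_vm as VmLck, data_vm as VmData and stack_vm as VmStk, each converted to kB. The sketch below reads one such line; the helper name proc_status_kb is hypothetical, and a Linux system with /proc mounted is assumed.

```c
#include <stdio.h>
#include <string.h>

/*
 * Return the numeric value (in kB) of a "Key:   <n> kB" line in
 * /proc/self/status, or -1 if the key is missing or the file is unreadable.
 */
long proc_status_kb(const char *key)
{
    FILE *fp = fopen("/proc/self/status", "r");
    char line[256];
    size_t klen = strlen(key);
    long value = -1;

    if (!fp)
        return -1;

    while (fgets(line, sizeof(line), fp)) {
        /* Match "<key>:" at the start of the line. */
        if (strncmp(line, key, klen) == 0 && line[klen] == ':') {
            if (sscanf(line + klen + 1, "%ld", &value) != 1)
                value = -1;
            break;
        }
    }

    fclose(fp);
    return value;
}
```

For example, proc_status_kb("VmSize") gives the size of the calling process's address space in kB, and proc_status_kb("VmStk") the size of its stack mappings.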

struct vm_area_struct describes one virtual region in the process address space; each VMA corresponds to one struct vm_area_struct.

/*

* This struct defines a memory VMM memory area. There is one of these

* per VM-area/task. A VM area is any part of the process virtual memory

* space that has a special rule for the page-fault handlers (ie a shared

* library, the executable area etc).

*/

struct vm_area_struct {

/* The first cache line has the info for VMA tree walking. */

unsigned long vm_start; /* Our start address within vm_mm. */ // start address

unsigned long vm_end; /* The first byte after our end address

within vm_mm. */ // end address; the range does not include it

/* linked list of VM areas per task, sorted by address */ // linked list sorted by start address

struct vm_area_struct *vm_next, *vm_prev;

struct rb_node vm_rb; // red-black tree node

/*

* Largest free memory gap in bytes to the left of this VMA.

* Either between this VMA and vma->vm_prev, or between one of the

* VMAs below us in the VMA rbtree and its ->vm_prev. This helps

* get_unmapped_area find a free area of the right size.

*/

unsigned long rb_subtree_gap;

/* Second cache line starts here. */

struct mm_struct *vm_mm; /* The address space we belong to. */

pgprot_t vm_page_prot; /* Access permissions of this VMA. */

unsigned long vm_flags; /* Flags, see mm.h. */

/*

* For areas with an address space and backing store,

* linkage into the address_space->i_mmap interval tree.

*/

struct {

struct rb_node rb;

unsigned long rb_subtree_last;

} shared;

/*

* A file's MAP_PRIVATE vma can be in both i_mmap tree and anon_vma

* list, after a COW of one of the file pages. A MAP_SHARED vma

* can only be in the i_mmap tree. An anonymous MAP_PRIVATE, stack

* or brk vma (with NULL file) can only be in an anon_vma list.

*/

struct list_head anon_vma_chain; /* Serialized by mmap_sem &

* page_table_lock */

struct anon_vma *anon_vma; /* Serialized by page_table_lock */

/* Function pointers to deal with this struct. */

const struct vm_operations_struct *vm_ops;

/* Information about our backing store: */

unsigned long vm_pgoff; /* Offset (within vm_file) in PAGE_SIZE

units */

struct file * vm_file; /* File we map to (can be NULL). */ // points to an open instance of the file

void * vm_private_data; /* was vm_pte (shared mem) */

atomic_long_t swap_readahead_info;

#ifndef CONFIG_MMU

struct vm_region *vm_region; /* NOMMU mapping region */

#endif

#ifdef CONFIG_NUMA

struct mempolicy *vm_policy; /* NUMA policy for the VMA */

#endif

struct vm_userfaultfd_ctx vm_userfaultfd_ctx;

} __randomize_layout;

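Here is the relationship diagram:

Look familiar? This is very similar to the vmap mechanism in the kernel.

Here is a high-level view of the VMAs in a process address space:

The following interfaces operate on VMAs: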

/* VMA lookup */

/* Look up the first VMA which satisfies addr < vm_end, NULL if none. */

extern struct vm_area_struct * find_vma(struct mm_struct * mm, unsigned long addr); // find the first VMA that satisfies addr < vm_end

extern struct vm_area_struct * find_vma_prev(struct mm_struct * mm, unsigned long addr,

struct vm_area_struct **pprev); // like find_vma, but also returns the preceding VMA in the list

static inline struct vm_area_struct * find_vma_intersection(struct mm_struct * mm, unsigned long start_addr, unsigned long end_addr); // find a VMA that overlaps the range start_addr ~ end_addr

/* VMA insertion */

extern int insert_vm_struct(struct mm_struct *, struct vm_area_struct *); // insert the VMA into the red-black tree and the linked list

/* VMA merging */

extern struct vm_area_struct *vma_merge(struct mm_struct *,

struct vm_area_struct *prev, unsigned long addr, unsigned long end,

unsigned long vm_flags, struct anon_vma *, struct file *, pgoff_t,

struct mempolicy *, struct vm_userfaultfd_ctx); // merge the VMA with neighbouring VMAs

/* VMA splitting */

extern int split_vma(struct mm_struct *, struct vm_area_struct *,

unsigned long addr, int new_below); // split the VMA into two at addr

The operations above essentially boil down to operations on the red-black tree.
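
The lookup semantics ("first VMA with addr < vm_end", with vm_end exclusive) are easy to get wrong, so here is a small user-space sketch of them. It is not the kernel implementation: it assumes the areas sit in a sorted, non-overlapping array and uses a binary search in place of the red-black tree, and the names toy_vma, toy_find_vma and toy_find_vma_intersection are invented for this example.

```c
#include <stddef.h>

/* Simplified stand-in for struct vm_area_struct: only the range is kept. */
struct toy_vma {
    unsigned long vm_start;   /* first address inside the area */
    unsigned long vm_end;     /* first address after the area  */
};

/*
 * Return the first area with addr < vm_end, or NULL if there is none.
 * The array must be sorted by address and must not contain overlaps.
 * The result need not contain addr: addr may lie in the gap below it,
 * so a caller that needs containment also checks vm_start <= addr.
 */
const struct toy_vma *toy_find_vma(const struct toy_vma *areas, size_t n,
                                   unsigned long addr)
{
    size_t lo = 0, hi = n;

    /* Binary search for the lowest index whose vm_end is above addr. */
    while (lo < hi) {
        size_t mid = lo + (hi - lo) / 2;

        if (addr < areas[mid].vm_end)
            hi = mid;
        else
            lo = mid + 1;
    }
    return lo < n ? &areas[lo] : NULL;
}

/* Return an area overlapping [start, end), or NULL if the range is free. */
const struct toy_vma *toy_find_vma_intersection(const struct toy_vma *areas,
                                                size_t n,
                                                unsigned long start,
                                                unsigned long end)
{
    const struct toy_vma *vma = toy_find_vma(areas, n, start);

    /* The candidate starts at or after end: no overlap. */
    if (vma && end <= vma->vm_start)
        vma = NULL;
    return vma;
}
```

With areas [0x1000, 0x3000) and [0x5000, 0x6000), a lookup of 0x4000 returns the second area even though 0x4000 lies in the gap between the two, which is why the intersection helper adds the extra vm_start check.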

3. malloc

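Everyone is familiar with malloc, but how does it interact with the lower layers to obtain memory?

Here is the diagram:

As the diagram shows, malloc ends up in the kernel's sys_brk and sys_mmap functions. Small allocations go through sys_brk, which dynamically adjusts the brk position in the process address space; large allocations go through sys_mmap, which finds a region between the heap and the stack and maps it.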
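
One way to observe this split from user space is to compare the program break before and after a malloc call. The sketch below assumes a Unix-like C library that still provides sbrk(); the helper name brk_delta_for_malloc is made up, and the size at which an allocator switches from brk to mmap is an allocator detail (glibc exposes it as the M_MMAP_THRESHOLD tunable), so results will vary.

```c
#define _DEFAULT_SOURCE   /* expose sbrk() in glibc headers */
#include <stddef.h>
#include <stdlib.h>
#include <unistd.h>

/*
 * Allocate size bytes with malloc and report how far the program break
 * moved during the call. The block is returned through out; the caller
 * is responsible for freeing it.
 */
long brk_delta_for_malloc(size_t size, void **out)
{
    char *before = sbrk(0);      /* current program break */
    void *p = malloc(size);
    char *after = sbrk(0);

    *out = p;
    return (long)(after - before);
}
```

With glibc's default settings I would expect a multi-megabyte request to leave the break unchanged, because the block comes from mmap, while a series of small requests eventually moves it. Other allocators may never use brk at all.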

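Let's look at sys_brk first. It is defined with SYSCALL_DEFINE1, and the overall call flow is as follows:

As the call flow shows, many of the operations act on VMAs. Putting it all together gives the following picture:

The whole process now looks fairly clear and simple. Every process describes its address space with struct mm_struct; the space consists of VMA regions, managed through a red-black tree and a linked list. Handling a malloc request therefore means dynamically adjusting the brk position, with the actual extent given by vm_start ~ vm_end in struct vm_area_struct. In practice, the kernel acts according to whether the requested region overlaps an existing VMA, or allocates a new VMA to describe the region, and finally inserts it into the red-black tree and the linked list.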
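
The relationship between brk and the heap VMA can be sketched with a toy model. This is not the kernel code: it assumes 4 KiB pages, keeps a single heap area, and leaves out rlimit checks, locking, VMA merging and the stack guard gap. The names toy_heap, toy_brk and the TOY_ macros are invented for the illustration.

```c
#define TOY_PAGE_SIZE 4096UL
#define TOY_PAGE_ALIGN(x) (((x) + TOY_PAGE_SIZE - 1) & ~(TOY_PAGE_SIZE - 1))

/* Toy model of the heap: the brk value plus the VMA that backs it. */
struct toy_heap {
    unsigned long start_brk;   /* fixed start of the heap */
    unsigned long brk;         /* current end of the heap, byte-granular */
    unsigned long vm_start;    /* heap VMA start (page aligned) */
    unsigned long vm_end;      /* heap VMA end (page aligned, exclusive) */
    unsigned long next_start;  /* vm_start of the next mapping above the heap */
};

/*
 * Move the break to brk. Returns the resulting break, which equals the
 * old value when the request is refused.
 */
unsigned long toy_brk(struct toy_heap *h, unsigned long brk)
{
    unsigned long newbrk, oldbrk;

    if (brk < h->start_brk)
        return h->brk;                 /* below the heap start: refuse */

    newbrk = TOY_PAGE_ALIGN(brk);
    oldbrk = TOY_PAGE_ALIGN(h->brk);

    if (newbrk != oldbrk) {
        /* Growing: refuse if the heap would run into the next mapping. */
        if (brk > h->brk && newbrk + TOY_PAGE_SIZE > h->next_start)
            return h->brk;

        /* Grow or shrink the VMA; only page boundaries matter here. */
        h->vm_end = newbrk;
    }

    h->brk = brk;
    return h->brk;
}
```

The point of the model is that the VMA only changes when the break crosses a page boundary: moving brk from 0x10010 to 0x10800 updates brk but leaves vm_end at 0x11000.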

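Once this request completes, only a range of addresses has been set aside; physical memory is usually not allocated right away. It is allocated when an access triggers a page fault, which a later article will analyze further.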
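
This lazy behaviour can be made visible from user space with mincore(), which reports which pages of a range are resident. The following is a sketch under the assumption of a Linux system where mincore() works on anonymous mappings; DEMO_PAGES, resident_pages and lazy_alloc_demo are names chosen for this example.

```c
#define _DEFAULT_SOURCE   /* expose mincore() and MAP_ANONYMOUS in glibc */
#include <stddef.h>
#include <sys/mman.h>
#include <unistd.h>

#define DEMO_PAGES 16

/* Count how many of the DEMO_PAGES pages starting at addr are resident. */
static int resident_pages(void *addr, size_t page_size)
{
    unsigned char vec[DEMO_PAGES];
    int i, count = 0;

    if (mincore(addr, DEMO_PAGES * page_size, vec) != 0)
        return -1;
    for (i = 0; i < DEMO_PAGES; i++)
        count += vec[i] & 1;           /* bit 0 set: page is resident */
    return count;
}

/*
 * Map DEMO_PAGES anonymous pages, write to the first touch of them, and
 * store the resident page counts seen before and after the writes.
 * Returns 0 on success, -1 on failure.
 */
int lazy_alloc_demo(int touch, int *before, int *after)
{
    size_t page_size = (size_t)sysconf(_SC_PAGESIZE);
    size_t len = DEMO_PAGES * page_size;
    volatile char *p;
    void *map = mmap(NULL, len, PROT_READ | PROT_WRITE,
                     MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);
    int i;

    if (map == MAP_FAILED)
        return -1;
    if (touch > DEMO_PAGES)
        touch = DEMO_PAGES;

    p = map;
    *before = resident_pages(map, page_size);
    for (i = 0; i < touch; i++)
        p[(size_t)i * page_size] = 1;  /* first write faults the page in */
    *after = resident_pages(map, page_size);

    munmap(map, len);
    return (*before < 0 || *after < 0) ? -1 : 0;
}
```

On a typical Linux system I would expect before to be 0 and after to equal touch, since the mapping itself only creates a VMA and each first write takes a page fault.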

4. mmap

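mmap performs memory mapping, that is, it maps a region into the process's own address space. There are two kinds:

- File mapping: maps a region of a file into the process address space; the file lives on a storage device;

- Anonymous mapping: a mapping with no backing file; the contents live in physical memory;

In addition, depending on whether the mapping is visible to other processes, there are two kinds:

- Private mapping: works on a copy of the data source and does not affect other processes;

- Shared mapping: visible to every process that shares it;

Combining these gives the following cases:

- Private anonymous mapping: typically used when allocating large blocks of memory, and for the heap, stack, bss segment, etc.;

- Shared anonymous mapping: commonly used for communication between parent and child processes; the kernel backs it with a /dev/zero file created in the in-memory file system;

- Private file mapping: commonly used for loading dynamic libraries, code segments, data segments, etc.;

- Shared file mapping: commonly used for inter-process communication, file reads and writes, etc.;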
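
The difference between shared and private anonymous mappings shows up clearly across fork(). The sketch below, for a POSIX system with MAP_ANONYMOUS support, lets a child write into the mapping and checks what the parent sees afterwards; the helper name child_write_visible is hypothetical.

```c
#define _DEFAULT_SOURCE   /* expose MAP_ANONYMOUS in glibc headers */
#include <stddef.h>
#include <sys/mman.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/*
 * Create an anonymous mapping with the given sharing flag (MAP_SHARED or
 * MAP_PRIVATE), let a child process write to it, and report whether the
 * parent observes the write.
 * Returns 1 if the write is visible, 0 if not, -1 on error.
 */
int child_write_visible(int sharing_flag)
{
    int *cell = mmap(NULL, sizeof(*cell), PROT_READ | PROT_WRITE,
                     sharing_flag | MAP_ANONYMOUS, -1, 0);
    pid_t pid;
    int status, visible;

    if (cell == MAP_FAILED)
        return -1;
    *cell = 0;

    pid = fork();
    if (pid < 0) {
        munmap(cell, sizeof(*cell));
        return -1;
    }
    if (pid == 0) {
        *cell = 42;                    /* child writes through the mapping */
        _exit(0);
    }

    if (waitpid(pid, &status, 0) < 0) {
        munmap(cell, sizeof(*cell));
        return -1;
    }

    visible = (*cell == 42);           /* did the parent see the write? */
    munmap(cell, sizeof(*cell));
    return visible;
}
```

With MAP_SHARED the parent sees the child's value, which is what makes shared anonymous mappings usable for parent/child communication. With MAP_PRIVATE the child's write lands in its own copy-on-write page and the parent still reads 0.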

Common prot permissions and flags are as follows:

#define PROT_READ 0x1 /* page can be read */

#define PROT_WRITE 0x2 /* page can be written */

#define PROT_EXEC 0x4 /* page can be executed */

#define PROT_SEM 0x8 /* page may be used for atomic ops */

#define PROT_NONE 0x0 /* page can not be accessed */

#define PROT_GROWSDOWN 0x01000000 /* mprotect flag: extend change to start of growsdown vma */

#define PROT_GROWSUP 0x02000000 /* mprotect flag: extend change to end of growsup vma */

#define MAP_SHARED 0x01 /* Share changes */

#define MAP_PRIVATE 0x02 /* Changes are private */

#define MAP_TYPE 0x0f /* Mask for type of mapping */

#define MAP_FIXED 0x10 /* Interpret addr exactly */

#define MAP_ANONYMOUS 0x20 /* don't use a file */

#define MAP_GROWSDOWN 0x0100 /* stack-like segment */

#define MAP_DENYWRITE 0x0800 /* ETXTBSY */

#define MAP_EXECUTABLE 0x1000 /* mark it as an executable */

#define MAP_LOCKED 0x2000 /* pages are locked */

#define MAP_NORESERVE 0x4000 /* don't check for reservations */

#define MAP_POPULATE 0x8000 /* populate (prefault) pagetables */

#define MAP_NONBLOCK 0x10000 /* do not block on IO */

#define MAP_STACK 0x20000 /* give out an address that is best suited for process/thread stacks */

#define MAP_HUGETLB 0x40000 /* create a huge page mapping */
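
As a usage sketch for these constants, the function below maps a small temporary file with PROT_READ | PROT_WRITE and either MAP_PRIVATE or MAP_SHARED, stores through the mapping, and then reads the file back. It assumes a Linux system with a writable /tmp; the function name and the file name template are made up for this example.

```c
#define _DEFAULT_SOURCE   /* expose mkstemp() and pread() in glibc headers */
#include <stdlib.h>
#include <sys/mman.h>
#include <unistd.h>

/*
 * Write "hello" to a temporary file, map it with the given sharing flag
 * (MAP_PRIVATE or MAP_SHARED), overwrite the first byte through the
 * mapping, then read the file back with pread().
 * Returns the first byte of the file afterwards, or -1 on error.
 */
int first_byte_after_mapped_write(int sharing_flag)
{
    char path[] = "/tmp/mmap_demo_XXXXXX";
    char byte;
    char *map;
    int result = -1;
    int fd = mkstemp(path);

    if (fd < 0)
        return -1;
    unlink(path);                      /* the file lives until fd is closed */

    if (write(fd, "hello", 5) != 5)
        goto out;

    map = mmap(NULL, 5, PROT_READ | PROT_WRITE, sharing_flag, fd, 0);
    if (map == MAP_FAILED)
        goto out;

    map[0] = 'J';                      /* store through the mapping */
    munmap(map, 5);

    if (pread(fd, &byte, 1, 0) == 1)
        result = (unsigned char)byte;
out:
    close(fd);
    return result;
}
```

With MAP_PRIVATE the file still starts with 'h', because the store went to a private copy of the page. With MAP_SHARED on Linux the store reaches the page cache, so the file now starts with 'J'.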

An mmap operation eventually calls the do_mmap function. To finish, here is a call graph:
