Recommending good-value Taobao products with Python

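Goals:

Take a search term as input, together with thresholds for the number of reviews on Taobao and for the shop's ratings (description accuracy, service attitude, and delivery, each scored out of 5.0).

Filter out the requested number of results that meet these thresholds, then sort them with the "good-value algorithm" and output them.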

Approach:

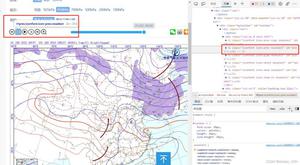

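1. Use the price filter of Taobao search ('https://s.taobao.com/search?') to narrow the results by price first, which gives the URL of the result page.

2. Open the result page with urllib and build a regular expression that extracts each product's price, number of reviews, the shop's description-accuracy rating, and so on; store the results in a two-dimensional list.

3. Score each product with the "good-value algorithm", using the product and shop information.

4. Sort by score and write all the information, in sorted order, to a newly created txt file. Also download each product image and create a txt file whose name starts with the same rank number and contains a short summary of the product, so that images and product details appear side by side when the folder is viewed with large icons.

5. The input parameters (price range and other requirements) can be passed in through a function, and the whole .py program can be packaged into an EXE with pyinstaller for distribution.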
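Step 1 can be sketched as a small URL builder. This is a minimal sketch written in Python 3 syntax (the listing further down is Python 2); the function name `build_search_url` is hypothetical, and the query parameters `q`, `filter=reserve_price[min,max]` and `s` are simply the ones the script uses, so whether the site still honours them is an assumption.

```python
from urllib.parse import quote

SEARCH_BASE = "https://s.taobao.com/search?"  # endpoint named in step 1
PAGE_SIZE = 44  # offset step per result page, as used by the script


def build_search_url(query, price_min=0, price_max=0, page=0):
    """Return a search URL with an optional price filter and a page offset."""
    url = SEARCH_BASE + "q=" + quote(query)
    if price_min < price_max:
        # reserve_price[min,max], with "[", "," and "]" percent-encoded
        url += "&filter=reserve_price%5B{}%2C{}%5D".format(price_min, price_max)
    return url + "&s={}".format(PAGE_SIZE * page)


print(build_search_url("ssd120g", 22, 400, page=1))
```

Keeping the URL construction in one pure function makes it easy to check the filter syntax without sending any request.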

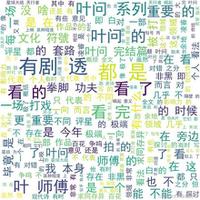

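About the "good-value algorithm":

The shop's customer ratings are the main filter and stand in for quality; how far the price falls below the average identifies cheap products; and popularity is measured by the number of existing reviews. The exact formula is in the source code, and its parameters and functions were tuned by hand. Because the scraped ratings, on a 5-point scale, are tightly clustered, the algorithm raises the weight of the ratings and lowers the weight of price and sales volume. (The higher the sales volume, the less each further order of magnitude adds to the statistical significance of the ratings, both because of the 80/20 rule and because many shoppers simply follow the sales figures, which compounds the effect.)

The advantage is that the recommended products rarely have bad reviews; nearly all of them are highly rated.

The drawback is that the quality of products from low-volume or newly opened shops may be overestimated. When few people have rated a shop, its score is less stable and a high score is more likely to be a fluke. However, candidates are taken from the top of Taobao's default composite ranking, so their sales are still fairly high, unless the product sits in a niche market with few sellers. Another issue is that Taobao and Tmall listings are not distinguished, even though Taobao's vetting is less strict than Tmall's.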
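To make the weighting concrete, here is a minimal sketch of the scoring formula from `recommend_rate` in the source code, rewritten as a pure function in Python 3 syntax. The averages are passed in as arguments instead of being read from the global counter, and the three sample products are made-up numbers for illustration only.

```python
from math import log


def recommend_rate(price, description, delivery, service, comments,
                   avg_price, avg_description):
    """Score one product with the formula used in the script."""
    quality = (description / avg_description) ** 20   # ratings dominate
    price_factor = (avg_price / price) ** 0.1         # price is strongly damped
    popularity = log(comments + 5, 1000)              # review count, log scale
    return quality * (description + delivery + service) * price_factor + popularity


# Hypothetical products, all compared against the same averages.
averages = dict(avg_price=100.0, avg_description=4.8)
base = recommend_rate(100.0, 4.8, 4.8, 4.8, 100, **averages)
better_rated = recommend_rate(100.0, 4.9, 4.8, 4.8, 100, **averages)
half_price = recommend_rate(50.0, 4.8, 4.8, 4.8, 100, **averages)

print(base, better_rated, half_price)
```

With these inputs, raising the description rating by 0.1 lifts the score more than halving the price does, which is the intended bias toward ratings.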
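The overestimation of low-volume shops can be seen in the review-count term of the formula, `log(comments + 5, 1000)`. The sketch below (Python 3 syntax, with a hypothetical helper name `popularity_term`) evaluates that term for a few review counts; it only illustrates the term taken from the source code and is not a correction of it.

```python
from math import log


def popularity_term(comments):
    """Review-count term of the score: log base 1000 of (comments + 5)."""
    return log(comments + 5, 1000)


for n in (6, 100, 1000, 10000):
    print(n, round(popularity_term(n), 3))

# Gap between a shop with 6 reviews and one with 10000 reviews
gap = popularity_term(10000) - popularity_term(6)
print("gap:", round(gap, 3))
```

The gap stays below one point, while the rating part of the score is on the order of the sum of three 5-point ratings, so a shop with only a handful of lucky high ratings is barely penalised for its small sample.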

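For example, with the following requirements:

    reserch_goods = 'ssd120g'  # Taobao search term
    price_min = 22             # price range
    price_max = 400
    descripHrequ = 0           # %, defaults to above average; results must exceed this value
    servHrequ = 0              # %, defaults to above average; results must exceed this value
    descripNrequ = 6           # minimum number of reviews
    counts = 10                # how many products to select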

the results are written to the results folder.

A trial build packaged as an EXE is shared at: http://pan.baidu.com/s/1boXwjTH (password: m32i)

The source code is as follows:
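How these thresholds are applied can be sketched as follows (Python 3 syntax). The script computes "percent above average" as `int(x[10]) * float(x[11]) / 100` from each rating triple; reading the second element as a direction (+1 or -1) and the third as a magnitude in hundredths of a percent is an interpretation of that expression, and the helper names and sample items are hypothetical.

```python
def percent_above_average(triple):
    """triple = (score * 100, direction, magnitude * 100), as captured by the regex."""
    _score, direction, magnitude = triple
    return int(direction) * float(magnitude) / 100


def meets_requirements(item, descripHrequ=0, servHrequ=0, descripNrequ=6):
    """Apply the same three conditions the script checks for each product."""
    return (percent_above_average(item["description"]) >= descripHrequ
            and percent_above_average(item["service"]) >= servHrequ
            and item["comments"] >= descripNrequ)


# Made-up sample items
items = [
    {"title": "A", "comments": 120, "description": (485, 1, 1250), "service": (484, 1, 800)},
    {"title": "B", "comments": 3, "description": (490, 1, 2000), "service": (489, 1, 1500)},
    {"title": "C", "comments": 500, "description": (470, -1, 900), "service": (480, 1, 100)},
]
selected = [item["title"] for item in items if meets_requirements(item)]
print(selected)
```

Item B is dropped for having too few reviews and item C for a description rating below average, so only A survives the default thresholds.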

    # -*- coding: utf-8 -*-
    '''
    author : willowj
    http://www.cnblogs.com/willowj
    Mind the robots protocol and do not put heavy load on the site.
    '''

    import urllib
    import urllib2
    import re
    import time
    import random
    import os
    from math import log
    import sys

    # Comment out the next two lines when running inside Python's own IDE.
    reload(sys)
    sys.setdefaultencoding('utf8')  # the default encoding in Python 2.x is ascii

    class counter(object):
        # Running totals shared by the whole crawl
        def __init__(self):
            self.count = 0         # products accepted so far
            self.try_time = 0
            self.fail_time = 0
            self.url_list = []     # product URLs already seen
            self.new_flag = True   # set to False once a page repeats a known product
            self.results = []
            self.p = 0             # running sum of prices
            self.d = 0             # running sum of description scores

        def print_counter(self):
            print 'try_time:', self.try_time, " get_count:", self.count, " fail_time:", self.fail_time

    counter1 = counter()

    def post_request(url):
        '''Build a GET request for url with a randomly chosen User-Agent header.'''
        # Optional proxy support, disabled by default:
        # proxy = {'http': '27.24.158.155:84'}
        # proxy_support = urllib2.ProxyHandler(proxy)
        # # opener = urllib2.build_opener(proxy_support, urllib2.HTTPHandler(debuglevel=1))
        # opener = urllib2.build_opener(proxy_support)
        # urllib2.install_opener(opener)

        # Pick a random browser User-Agent for each request
        User_Agents = ["Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:47.0) Gecko/20100101 Firefox/47.0",
                       "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/50.0.2661.102 Safari/537.36",
                       "Mozilla/5.0 (Macintosh; U; Intel Mac OS X 10_6_8; en-us) AppleWebKit/534.50 (KHTML, like Gecko) Version/5.1 Safari/534.50",
                       "Opera/9.80 (Windows NT 6.1; U; en) Presto/2.8.131 Version/11.11",
                       "Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; en) Presto/2.8.131 Version/11.11O"
                       ]
        random_User_Agent = random.choice(User_Agents)
        #print random_User_Agent

        # A Request without data is sent as GET, so no extra "GET" header is needed
        req = urllib2.Request(url)
        req.add_header("User-Agent", random_User_Agent)
        req.add_header("Referer", url)
        return req

    def recommend_rate(price, description, delivery, service, comments):
        # description is an absolute score, not a percentage
        av_p = counter1.p / counter1.count  # average price of the accepted products
        av_d = counter1.d / counter1.count  # average description score
        rate = (description/av_d)**20 * (description+delivery+service) * (av_p/price)**0.1 + log(comments+5, 1000)
        #print 'all count=',counter1.count
        #print "avrage price=",av_p,';',av_p/(price),';price',price,';comments=',comments,';descrip=',description
        #print 'rate=',rate,'(price)yinzi',(av_p/(price))**0.1,'descrip_yinzi',(description/av_d)**20,'comments_factor=',log((comments+50),100)
        return rate

    def product_rank(items):
        for x in items:
            # x[0] title, x[1] image URL, x[2] product URL, x[3] price, x[4] review count,
            # x[5] shop name, x[6] delivery score, x[7] description score, x[8] service score
            rate = recommend_rate(x[3], x[7], x[6], x[8], x[4])
            x.append(rate)

    def get_user_rate(item_url):
        '''Fetch the seller's credit rating. Not used at the moment: the page cannot
        be accessed without logging in, unless a cookie is added to the request headers.'''
        html = urllib2.urlopen(item_url)
        # e.g. "//rate.taobao.com/user-rate-282f910f3b70f2128abd0ee9170e6428.htm"
        regrex_rate = r'"(//.*?user-rate.*?)"'
        codes = re.findall(regrex_rate, html.read())
        html.close()

        user_rate_url = 'http:' + codes[0]
        print 'uu', user_rate_url

        user_rate_html = urllib2.urlopen(user_rate_url)
        page = user_rate_html.read()  # read once; a second read() would return an empty string
        user_rate_html.close()
        print page
        # e.g. title="4.78589分"
        desc_regex = r'title="(4\.[0-9]{5}).*?'
        de_pat = re.compile(desc_regex)

        descs = re.findall(de_pat, page)
        print len(descs)

    item_url = 'https://item.taobao.com/item.htm?id=530635294653&ns=1&abbucket=0#detail'
    #get_user_rate(item_url)
    # Seller credit lookup is unavailable without logging in, so it is unused for now.

    def makeNewdir(savePath):
        '''Create a fresh results directory and return its path (None on failure).'''
        while os.path.exists(savePath):
            # The path already exists, so append a random digit and try again
            savePath = savePath + '%s' % random.randrange(1, 10)
        try:
            os.makedirs(savePath)
            print 'ok,file_path we reserve results: %s' % savePath
            return savePath
        except OSError:
            print "failed to make file path\nplease restart program"
            return None

    def get_praised_good(url, file_open, keyword, counts, descripHrequ, servHrequ, descripNrequ):
        # Collect the products on one Taobao result page that meet the requirements
        html = urllib2.urlopen(post_request(url))
        code = html.read()
        html.close()

        regrex2 = ur'raw_title":"(.*?)","pic_url":"(.*?)","detail_url":"(.*?)","view_price":"(.*?)".*?"comment_count":"(.*?)".*?"nick":"(.*?)".*?"delivery":\[(.*?),(.*?),(.*?)\],"description":\[(.*?),(.*?),(.*?)\],"service":\[(.*?),(.*?),(.*?)\]'
        # Each match yields 15 strings:
        # x[0] title, x[1] image URL, x[2] product URL, x[3] price, x[4] review count, x[5] shop name,
        # x[6:9] delivery triple, x[9:12] description triple, x[12:15] service triple
        pat = re.compile(regrex2)
        meet_code = pat.findall(code)

        for x in meet_code:
            if counter1.count >= counts:
                print "have got enough products"
                break
            description_higher = int(x[10]) * float(x[11]) / 100
            service_higher = int(x[13]) * float(x[14]) / 100
            try:
                x4 = int(x[4])  # review count
            except ValueError:
                x4 = 0
            if (description_higher >= descripHrequ) and (service_higher >= servHrequ) and x4 >= descripNrequ:
                # Matching a Chinese keyword against the title is not solved yet;
                # for now, put such words into the search term instead
                if re.findall(keyword, x[0]):
                    x0 = x[0].replace(' ', '').replace('/', '')
                    detail_url = 'http:' + x[2].decode('unicode-escape').encode('utf-8')
                    x1 = 'http:' + x[1].decode('unicode-escape').encode('utf-8')
                    if detail_url in counter1.url_list:
                        counter1.new_flag = False
                        print 'no more new met products'
                        print counter1.url_list
                        print detail_url
                        break
                    counter1.url_list.append(detail_url)
                    counter1.try_time += 1
                    counter1.count += 1

                    x9 = float(x[9]) / 100    # description score
                    x12 = float(x[12]) / 100  # service score
                    x6 = float(x[6]) / 100    # delivery score
                    x3 = float(x[3])          # price
                    counter1.p += x3
                    counter1.d += x9
                    x5 = unicode(x[5], 'utf-8')

                    # Stored order: title, image URL, product URL, price, review count,
                    # shop name, delivery score, description score, service score
                    counter1.results.append([x0, x1, detail_url, x3, x4, x5, x6, x9, x12])

    def save_downpic(lis, file_open, savePath):
        '''Download the image of every product in lis into savePath and write its details to file_open.'''
        # x[0] title, x[1] image URL, x[2] product URL, x[3] price, x[4] review count,
        # x[5] shop name, x[6] delivery score, x[7] description score, x[8] service score, x[9] rate
        len_list = len(lis)
        print len_list
        cc = 0
        for x in lis:
            try:
                urllib.urlretrieve(x[1], os.path.join(savePath, '%s___' % cc + unicode(x[0], 'utf-8') + '.jpg'))

                # The txt file name carries the rank number and the key details
                txt_name = os.path.join(savePath, '%s__' % cc + 'custome_description_%s __' % x[7] + '__comments_%s_' % x[4] + '___price_%srmb___' % x[3] + x[5] + '.txt')

                file_o = open(txt_name, 'a')
                file_o.write(x[2])
                file_o.close()

                print '\nget_one_possible_fine_goods:\n', 'good_name:', x[0].decode('utf-8')
                print 'rate=', x[9]
                print 'price:', x[3], x[5].decode('utf-8')
                print 'custome_description:', x[7], '--', 'described_number:', x[4], ' service:', x[8]
                print x[2].decode('utf-8'), '\ngood_pic_url:', x[1].decode('utf-8')

                print txt_name
                print cc+1, "th"

                file_open.write(u'%s__' % cc + u'%s' % x[0] + '\nprice:' + str(x[3]) + '¥,' + '\n' + str(x[2]) + ' \n' + str(x[5]) + '\ncustomer_description:' + str(x[7]) + 'described_number:' + str(x[4]) + '\n\n\n')

                print 'get one -^-'
            except Exception:
                print "failed to download picture or create txt"
                counter1.fail_time += 1
            cc += 1
            time.sleep(0.5)

    def get_all_praised_goods(serchProd, counts, savePath, keyword, price_min=0, price_max=0, descripHrequ=0, servHrequ=0, descripNrequ=0):
        # Walk through the search result pages one by one
        # Build the initial URL; the page offset is appended in the loop
        initial_url = 'https://s.taobao.com/search?q=' + urllib.quote(serchProd)
        if price_min < price_max:
            # reserve_price[min,max], with "[", "," and "]" percent-encoded
            initial_url = initial_url + '&filter=reserve_price%5B' + '%s' % price_min + '%2C' + '%s' % price_max + '%5D'
        initial_url = initial_url + '&s='

        #tian_mall = 'https://list.tmall.com/search_product.htm?q='

        print "initial_url", initial_url
        page_n = 0
        reserve_file = os.path.join(savePath, 'found_goods.txt')
        file_open = open(reserve_file, 'a')

        file_open.write('****************************\n')
        file_open.write(time.ctime())
        file_open.write('\n****************************\n')

        while counter1.new_flag and counter1.count < counts:
            url_1 = initial_url + '%s' % (44 * page_n)  # 44 results per page
            print 'url_1:', url_1
            page_n += 1

            get_praised_good(url_1, file_open, keyword, counts, descripHrequ, servHrequ, descripNrequ)
            print "let web network rest for 2s lest make traffic jams "
            time.sleep(2)
            print "%s" % page_n, "pages have been searched"
            if page_n >= 11:
                print "check keyword,maybe too restrict"
                break

        product_rank(counter1.results)

        # Highest score first
        counter1.results.sort(key=lambda x: x[9], reverse=True)

        save_downpic(counter1.results, file_open, savePath)

        # Append the raw, tab-separated records
        for a in counter1.results:
            for b in a:
                file_open.write(unicode(str(b), 'utf-8'))
                file_open.write('\t')
            file_open.write('\n\n')

        file_open.close()
        counter1.print_counter()

    def main(serchProd):
        '''Ask whether to customise the requirements, fetch matching products, and output them in recommended order.'''
        if serchProd:
            print ("Customise the price range, rating requirements, etc.? Input Y/N\n"
                   "Default: no price limit; description, delivery and service ratings above average; "
                   "get 30 products.\nEnter 'Y' (case-sensitive) to customise.")
            if raw_input() == 'Y':
                print "\nplease input minimal and maximal price, separated by a comma; default 0,10000"
                print "press Enter to confirm; an empty input means the default value, same below"
                try:
                    # Python 2 input() evaluates what is typed, so "22,400" becomes a tuple
                    price_min, price_max = input()
                except Exception:
                    print 'not input or wrong number,use default range'
                    price_min, price_max = 0, 10000

                print "please input a keyword that product names must include:\n(more than one keyword must use a regular expression); default: no keyword"
                try:
                    # Personal filter word (a regular expression) that the title must contain, so that
                    # related items suggested by Taobao are skipped; "." matches anything, i.e. no restriction
                    keyword = raw_input().decode("gbk").encode("utf-8")
                except Exception:
                    keyword = ''

                print "\nplease input how many percent above average the description and service ratings must be,\nseparated by a comma; range (-100,100); default 0,0, i.e. better than average"
                try:
                    descripHrequ, servHrequ = input()
                except Exception:
                    print 'not input or wrong number,use default range'
                    descripHrequ = 0  # %, defaults to above average; results must exceed this value
                    servHrequ = 0

                print "\nplease input the minimum number of reviews, default more than 5\n"
                try:
                    descripNrequ = input()
                except Exception:
                    print 'not input or wrong number,use default range'
                    descripNrequ = 5

                # print "\nIF customise file reserve path, Y or N \ndefault/sample as: C:\\Users\\Administrator\\Desktop\\find_worthy_goods\\results "
                # if raw_input()=='Y':
                #     print "please input path that you want to reserve; \n "
                #     savePath = raw_input()
                # else:
                #     #savePath=r"C:\Users\Administrator\Desktop\find_worthy_goods\results"  # where results are saved

                print "\nplease input how many results you want, default by 30\n"
                try:
                    counts = input()
                except Exception:
                    counts = 30
            else:
                counts = 30
                keyword = ''
                price_min, price_max, descripHrequ, servHrequ, descripNrequ = 0, 0, 0, 0, 0

            savePath = 'results'
            # Use the directory that was actually created, if makeNewdir reports one
            savePath = makeNewdir(savePath) or savePath

            get_all_praised_goods(serchProd, counts, savePath, keyword, price_min, price_max, descripHrequ, servHrequ, descripNrequ)

            print '\n'
            counter1.print_counter()
            print "finished,please look up in %s" % savePath

            raw_input()  # keep the console window open until Enter is pressed
        else:
            print "no search goods,please restart"

    def input_para_inner():
        serchProd = 'ssd120g'  # Taobao search term
        # Personal filter word (a regular expression) that the title must contain, so that
        # related items suggested by Taobao are skipped; "." matches anything, i.e. no restriction
        keyword = '非240'
        price_min = 22    # price range
        price_max = 400
        descripHrequ = 0  # %, defaults to above average; results must exceed this value
        servHrequ = 0     # %, defaults to above average; results must exceed this value
        descripNrequ = 6
        counts = 10       # how many products to select
        #savePath=r"C:\Users\Administrator\Desktop\Python scrapy\find_worthy_goods\results"  # where results are saved
        savePath = r"results"  # where results are saved
        # Use the directory that was actually created, if makeNewdir reports one
        savePath = makeNewdir(savePath) or savePath

        get_all_praised_goods(serchProd, counts, savePath, keyword, price_min, price_max, descripHrequ, servHrequ, descripNrequ)

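    if __name__ == "__main__":
        x = 2
        if x == 1:
            input_para_inner()  # filter requirements are set in the source code
        else:
            print ('About:\nThis program searches Taobao and filters products by price range, '
                   'description rating, service rating, and number of reviews.\n'
                   'Images of the selected products are downloaded to disk (by default a new '
                   'find_worty_goods folder on the desktop) together with txt files that start with '
                   'the same rank number. The image shows the product, the name of the txt file beside it '
                   'shows the price and other key details, and the txt file contains the Taobao link.')
            print "please input reserch _goods_name"
            serchProd = raw_input().replace(' ', '')  # Taobao search term, with stray spaces removed
            main(serchProd)

    # Images are saved under the product title, prefixed with a rank number.
    # Price, shop name, description and service ratings, etc. are written to txt files.
    # Once an image catches your eye, look up its details by rank number.
    # With description and service ratings above average, the shopping experience should be decent.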

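The extraction step in `get_praised_good` can be tried offline, without touching the site. The sketch below (Python 3 syntax) reuses the regular expression from the listing and runs it on a hand-written sample string; the sample is made up to fit the pattern and is only an assumption about what the embedded page data looked like, and `parse_items` is a hypothetical helper name.

```python
import re

# Same pattern as regrex2 in the listing: 15 capture groups per product
ITEM_PATTERN = re.compile(
    r'raw_title":"(.*?)","pic_url":"(.*?)","detail_url":"(.*?)","view_price":"(.*?)"'
    r'.*?"comment_count":"(.*?)".*?"nick":"(.*?)"'
    r'.*?"delivery":\[(.*?),(.*?),(.*?)\],"description":\[(.*?),(.*?),(.*?)\],'
    r'"service":\[(.*?),(.*?),(.*?)\]'
)

# Made-up fragment shaped to match the pattern
SAMPLE = ('{"raw_title":"Example SSD 120G","pic_url":"//img.example.com/a.jpg",'
          '"detail_url":"//item.example.com/item.htm?id=1","view_price":"199.00",'
          '"view_fee":"0.00","comment_count":"321","user_id":"1","nick":"example_shop",'
          '"shopcard":{"levelClasses":[],"delivery":[484,1,1200],'
          '"description":[486,1,1500],"service":[485,1,900]}}')


def parse_items(page_text):
    """Turn every regex match into a small dict with numeric fields."""
    items = []
    for m in ITEM_PATTERN.findall(page_text):
        items.append({
            "title": m[0],
            "price": float(m[3]),
            "comments": int(m[4]) if m[4].isdigit() else 0,
            "shop": m[5],
            "delivery": float(m[6]) / 100,
            "description": float(m[9]) / 100,
            "service": float(m[12]) / 100,
        })
    return items


parsed = parse_items(SAMPLE)
print(parsed)
```

Testing the pattern against a saved fragment like this makes it easier to notice when the page format changes, since the regex then simply returns no matches.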

Features that may be added later:

An interactive interface

Data storage in MySQL, to compare price changes over time
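As a rough idea of what the price-history storage could look like, here is a minimal sketch that uses Python's built-in `sqlite3` module as a stand-in for MySQL, so that it runs without a database server. The table layout and the function names are hypothetical and not part of the original program.

```python
import sqlite3
import time


def open_store(path=":memory:"):
    """Open (or create) the price-history database."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS price_history ("
        "detail_url TEXT NOT NULL, price REAL NOT NULL, seen_at REAL NOT NULL)"
    )
    return conn


def record_price(conn, detail_url, price, seen_at=None):
    """Store one observed price for a product URL."""
    stamp = time.time() if seen_at is None else seen_at
    conn.execute("INSERT INTO price_history VALUES (?, ?, ?)",
                 (detail_url, price, stamp))
    conn.commit()


def price_change(conn, detail_url):
    """Return latest price minus first price, or None with fewer than two records."""
    rows = conn.execute(
        "SELECT price FROM price_history WHERE detail_url = ? ORDER BY seen_at",
        (detail_url,),
    ).fetchall()
    if len(rows) < 2:
        return None
    return rows[-1][0] - rows[0][0]


conn = open_store()
record_price(conn, "http://item.example.com/item.htm?id=1", 199.0, seen_at=1)
record_price(conn, "http://item.example.com/item.htm?id=1", 179.0, seen_at=2)
print(price_change(conn, "http://item.example.com/item.htm?id=1"))
```

Keying the history on the product URL matches how the script already detects duplicates through `counter1.url_list`.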
