[Python] A Python crawler that fetches 3000+ WeChat articles in 30 seconds

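Introduction

For work, I built a small tool for our company's front-end team. It is written in Python and crawls the WeChat articles listed on Sogou Weixin (official site: https://weixin.sogou.com).

It covers every column from 热门 (Hot) to 时尚圈 (Fashion), including the content behind each column's "load more" option.

In total that comes to 3000+ articles.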
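
To make that scope concrete, the script later in this post walks column indexes 0 to 19 and pages 1 to 9 using the URL pattern `https://weixin.sogou.com/pcindex/pc/pc_%d/%d.html`. The sketch below only enumerates those URLs and sends no requests. `build_urls` is a hypothetical helper name, not part of the original script, and whether every one of these pages still exists on the site is not verified here.

```python
# URL pattern taken from the script in this post
URL_PATTERN = "https://weixin.sogou.com/pcindex/pc/pc_%d/%d.html"


def build_urls(columns=range(0, 20), pages=range(1, 10)):
    """Return every column/page URL the script would request (hypothetical helper)."""
    return [URL_PATTERN % (column, page) for column in columns for page in pages]


urls = build_urls()
print(len(urls))   # 20 columns x 9 pages = 180
print(urls[0])     # https://weixin.sogou.com/pcindex/pc/pc_0/1.html
print(urls[-1])    # https://weixin.sogou.com/pcindex/pc/pc_19/9.html
```

Building the list up front makes it easy to check how many pages a run will touch before any network traffic happens.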

Requirements

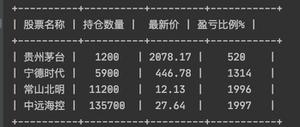

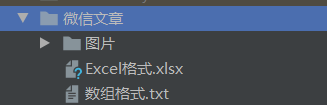

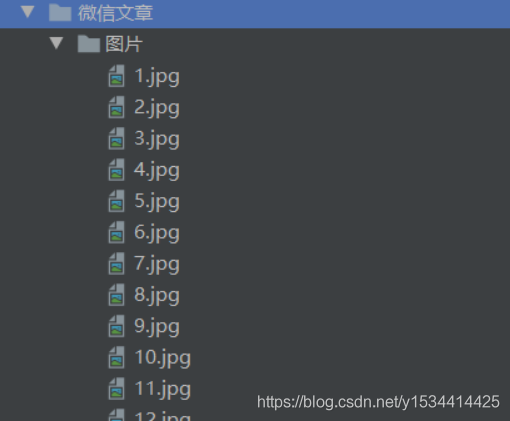

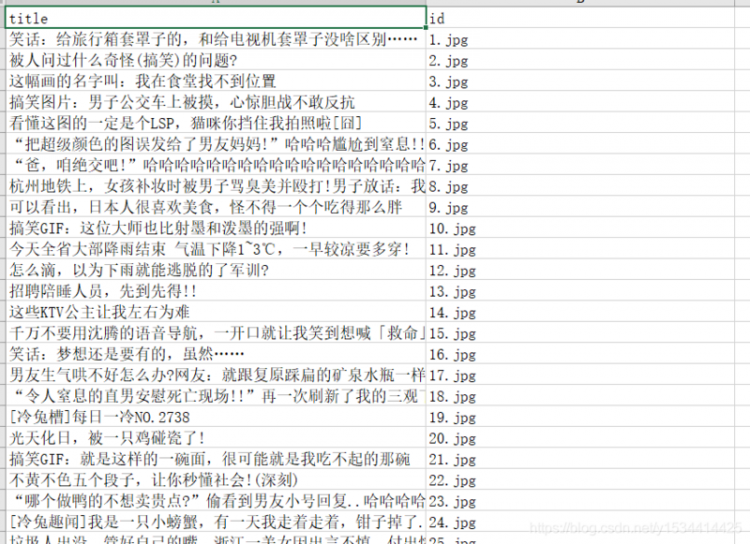

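Crawl these articles and extract each article's title and the image on its right. Save the images to a specified folder using a specified naming scheme, and write each article title together with its image file name to an Excel file and a txt file.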

Result


The complete code is below, preceded by the package versions installed in the environment.

| Package | Version |
| --- | --- |
| altgraph | 0.17 |
| certifi | 2020.6.20 |
| chardet | 3.0.4 |
| future | 0.18.2 |
| idna | 2.10 |
| lxml | 4.5.2 |
| pefile | 2019.4.18 |
| pip | 19.0.3 |
| pyinstaller | 4.0 |
| pyinstaller-hooks-contrib | 2020.8 |
| pywin32-ctypes | 0.2.0 |
| requests | 2.24.0 |
| setuptools | 40.8.0 |
| urllib3 | 1.25.10 |
| XlsxWriter | 1.3.3 |
| xlwt | 1.3.0 |

#!/usr/bin/python
# -*- coding: UTF-8 -*-
import os
import requests
import xlsxwriter
from lxml import etree

# Request headers for the Sogou Weixin article pages
headers = {
    'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9',
    'Accept-Encoding': 'gzip, deflate, br',
    'Accept-Language': 'zh-CN,zh;q=0.9',
    'Host': 'weixin.sogou.com',
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/85.0.4183.102 Safari/537.36'
}
# Request headers for downloading images
headers_images = {
    'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9',
    'Accept-Encoding': 'gzip, deflate',
    'Accept-Language': 'zh-CN,zh;q=0.9',
    'Host': 'img01.sogoucdn.com',
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/85.0.4183.102 Safari/537.36'
}

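# Running count of articles, also used to name the image files
a = 0
# Collected article records
all = []
# Create the root directory ('微信文章' means "WeChat articles")
save_path = './微信文章'
folder = os.path.exists(save_path)
if not folder:
    os.makedirs(save_path)
# Create the image folder ('图片' means "images")
images_path = '%s/图片' % save_path
folder = os.path.exists(images_path)
if not folder:
    os.makedirs(images_path)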

for i in range(0, 20):
    for j in range(1, 10):
        url = "https://weixin.sogou.com/pcindex/pc/pc_%d/%d.html" % (i, j)
        # Request the Sogou article list page, then re-encode the text as
        # ISO-8859-1 and decode it as UTF-8 to recover the Chinese characters
        response = requests.get(url=url, headers=headers).text.encode('iso-8859-1').decode('utf-8')
        # Parse the HTML; lxml repairs malformed markup automatically
        html = etree.HTML(response)
        # Use an XPath expression to select each article's <li> node
        xpath = html.xpath('/html/body/li')
        for content in xpath:
            # Count the article
            a = a + 1
            # Article title
            title = content.xpath('./div[@class="txt-box"]/h3//text()')[0]
            author_ = content.xpath('./div[@class="txt-box"]/div/a//text()')
            article = {}
            # Author exists
            if author_:
                article['title'] = title
                article['id'] = '%d.jpg' % a
                article['author'] = author_
                all.append(article)
                # Image URL
                path = 'http:' + content.xpath('./div[@class="img-box"]//img/@src')[0]
                try:
                    # Download the article image (inside the try block so that
                    # a failed request is reported instead of stopping the run)
                    images = requests.get(url=path, headers=headers_images).content
                    with open('%s/%d.jpg' % (images_path, a), "wb") as f:
                        print('Downloading image for article %d' % a)
                        f.write(images)
                except Exception as e:
                    print('Failed to download article image: %s' % e)

# Store the information in Excel
# Create a workbook ('Excel格式' means "Excel format")
workbook = xlsxwriter.Workbook('%s/Excel格式.xlsx' % save_path)
# Create a worksheet
worksheet = workbook.add_worksheet()
print('Generating Excel...')
try:
    for i in range(0, len(all) + 1):
        # The first row holds the header
        if i == 0:
            worksheet.write(i, 0, 'title')
            worksheet.write(i, 1, 'id')
            worksheet.write(i, 2, 'author')
            continue
        author_ = all[i - 1]['author']
        # Author exists
        if author_:
            worksheet.write(i, 2, author_[0])
        worksheet.write(i, 0, all[i - 1]['title'])
        worksheet.write(i, 1, all[i - 1]['id'])
    workbook.close()
    print("Excel generated successfully")
except Exception as e:
    print('Failed to generate Excel: %s' % e)

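print('Generating txt...')
try:
    # '数组格式' means "array format"; UTF-8 is set explicitly so the
    # Chinese text does not depend on the platform's default encoding
    with open('%s/数组格式.txt' % save_path, "w", encoding='utf-8') as f:
        f.write(str(all))
    print('txt generated successfully')
except Exception as e:
    print('Failed to generate txt: %s' % e)
print('Crawled %d articles in total' % a)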
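
The `.text.encode('iso-8859-1').decode('utf-8')` chain in the request line can look odd at first. A likely explanation, stated here as an assumption rather than something this post confirms, is that the server returns UTF-8 bytes without declaring a charset, so requests falls back to ISO-8859-1 when building `.text` and the Chinese characters come out garbled. The sketch below reproduces that situation without any network access. `fix_mojibake` is a hypothetical helper name used only for illustration.

```python
def fix_mojibake(text):
    """Undo a wrong ISO-8859-1 decode of UTF-8 bytes (hypothetical helper)."""
    return text.encode("iso-8859-1").decode("utf-8")


# Bytes as a server would send them for a UTF-8 page
raw_bytes = "微信文章".encode("utf-8")

# What the text looks like if those bytes are decoded with the wrong codec
garbled = raw_bytes.decode("iso-8859-1")

# The same round trip the script applies to the response text
recovered = fix_mojibake(garbled)

print(recovered == "微信文章")  # True
```

If that assumption holds, setting `response.encoding = 'utf-8'` before reading `.text`, or decoding `response.content` directly, should give the same result without the round trip.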

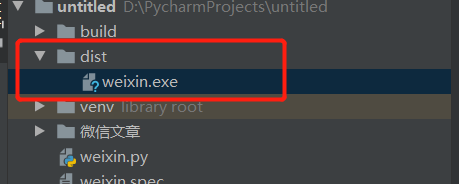
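
One note on the txt output: the script writes `str(all)`, which is a Python repr with single-quoted strings rather than JSON. If the front end needs to parse the file, a JSON dump may be easier to consume. The following is a minimal sketch of that alternative, not what the original tool does. `dump_articles` is a hypothetical helper, and the sample record is made up to match the shape the script builds (`title`, `id`, `author`).

```python
import json
import os
import tempfile


def dump_articles(articles, path):
    """Write article records as UTF-8 JSON (hypothetical helper)."""
    with open(path, "w", encoding="utf-8") as f:
        # ensure_ascii=False keeps Chinese text readable in the file
        json.dump(articles, f, ensure_ascii=False, indent=2)


# Made-up sample record in the same shape as the script's records
sample = [
    {"title": "示例标题", "id": "1.jpg", "author": ["示例作者"]},
]

# Write to a temporary directory so the sketch leaves the working folder untouched
out_path = os.path.join(tempfile.mkdtemp(), "articles.json")
dump_articles(sample, out_path)

# Read the file back to confirm it round-trips
with open(out_path, encoding="utf-8") as f:
    loaded = json.load(f)

print(loaded == sample)  # True
```

On the front-end side such a file can be read with a standard JSON parser instead of hand-converting the Python repr.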

Finally, package the program as an exe file so that it can be run directly on Windows.

I used pyinstaller.

// Install pyinstaller

pip install pyinstaller

// Package as an exe file

pyinstaller -F weixin.py

Then run the exe file.


Source link: utcz.com/a/89641.html