Kubernetes K8S: Fixing Failed Access to ClusterIP Services in IPVS Proxy Mode

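When Kubernetes (K8S) runs kube-proxy in IPVS proxy mode and a Service is of type ClusterIP, requests to the Service can fail to reach the backend Pods. This post shows how to diagnose and fix it.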

Background and symptoms

With Kubernetes (K8S) in IPVS proxy mode and a Service of type ClusterIP, the Service could be accessed, but the requests never reached the backend Pods.

Host configuration plan

| Hostname | OS version | Specs (CPU/RAM/disk) | Internal IP | External IP (simulated) |
|---|---|---|---|---|
| k8s-master | CentOS 7.7 | 2C/4G/20G | 172.16.1.110 | 10.0.0.110 |
| k8s-node01 | CentOS 7.7 | 2C/4G/20G | 172.16.1.111 | 10.0.0.111 |
| k8s-node02 | CentOS 7.7 | 2C/4G/20G | 172.16.1.112 | 10.0.0.112 |

Reproducing the issue

Deployment YAML

YAML file:

```
[root@k8s-master service]# pwd
/root/k8s_practice/service
[root@k8s-master service]# cat myapp-deploy.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: myapp-deploy
  namespace: default
spec:
  replicas: 3
  selector:
    matchLabels:
      app: myapp
      release: v1
  template:
    metadata:
      labels:
        app: myapp
        release: v1
        env: test
    spec:
      containers:
      - name: myapp
        image: registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1
        imagePullPolicy: IfNotPresent
        ports:
        - name: http
          containerPort: 80
```

Apply the Deployment and check its status:

```
[root@k8s-master service]# kubectl apply -f myapp-deploy.yaml
deployment.apps/myapp-deploy created
[root@k8s-master service]#
[root@k8s-master service]# kubectl get deploy -o wide
NAME           READY   UP-TO-DATE   AVAILABLE   AGE   CONTAINERS   IMAGES                                                      SELECTOR
myapp-deploy   3/3     3            3           14s   myapp        registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1   app=myapp,release=v1
[root@k8s-master service]# kubectl get rs -o wide
NAME                      DESIRED   CURRENT   READY   AGE   CONTAINERS   IMAGES                                                      SELECTOR
myapp-deploy-5695bb5658   3         3         3       21s   myapp        registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1   app=myapp,pod-template-hash=5695bb5658,release=v1
[root@k8s-master service]#
[root@k8s-master service]# kubectl get pod -o wide --show-labels
NAME                            READY   STATUS    RESTARTS   AGE   IP             NODE         NOMINATED NODE   READINESS GATES   LABELS
myapp-deploy-5695bb5658-7tgfx   1/1     Running   0          39s   10.244.2.111   k8s-node02   <none>           <none>            app=myapp,env=test,pod-template-hash=5695bb5658,release=v1
myapp-deploy-5695bb5658-95zxm   1/1     Running   0          39s   10.244.3.165   k8s-node01   <none>           <none>            app=myapp,env=test,pod-template-hash=5695bb5658,release=v1
myapp-deploy-5695bb5658-xtxbp   1/1     Running   0          39s   10.244.3.164   k8s-node01   <none>           <none>            app=myapp,env=test,pod-template-hash=5695bb5658,release=v1
```

Access the Pods with curl:

```
[root@k8s-master service]# curl 10.244.2.111/hostname.html
myapp-deploy-5695bb5658-7tgfx
[root@k8s-master service]#
[root@k8s-master service]# curl 10.244.3.165/hostname.html
myapp-deploy-5695bb5658-95zxm
[root@k8s-master service]#
[root@k8s-master service]# curl 10.244.3.164/hostname.html
myapp-deploy-5695bb5658-xtxbp
```
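Instead of copying each Pod IP by hand, the IPs can be pulled out of the `kubectl get pod -o wide` listing and curled in a loop. The sketch below is a minimal helper that assumes the default column order shown above, with the IP in the sixth column. `pod_ips` is a hypothetical name and is not part of kubectl.

```sh
# pod_ips: hypothetical helper. Reads `kubectl get pod -o wide` output on stdin
# and prints the IP (6th column) of every Pod whose STATUS (3rd column) is Running.
# Assumes the default column order: NAME READY STATUS RESTARTS AGE IP NODE ...
pod_ips() {
    # NR > 1 skips the header line.
    awk 'NR > 1 && $3 == "Running" { print $6 }'
}
```

Usage: `kubectl get pod -o wide -l app=myapp,release=v1 | pod_ips | while read -r ip; do curl -s "$ip/hostname.html"; done`. Column parsing is fragile. Newer kubectl releases can print RESTARTS as something like `1 (3h ago)`, which shifts the columns. For scripts, `kubectl get pod -l app=myapp,release=v1 -o jsonpath='{.items[*].status.podIP}'` is the more robust choice.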

ClusterIP Service

YAML file:

```
[root@k8s-master service]# pwd
/root/k8s_practice/service
[root@k8s-master service]# cat myapp-svc-ClusterIP.yaml
apiVersion: v1
kind: Service
metadata:
  name: myapp-clusterip
  namespace: default
spec:
  type: ClusterIP  # optional; ClusterIP is the default type
  selector:
    app: myapp
    release: v1
  ports:
  - name: http
    port: 8080       # port exposed by the Service
    targetPort: 80   # port on the backend Pods that traffic is forwarded to
```
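The Service finds its backends through its label selector. A Pod is selected when its labels contain every `key=value` pair in the selector. Extra labels on the Pods, such as `env=test` and `pod-template-hash`, do not affect the match. The POSIX sh sketch below implements that equality-based matching. It assumes labels in the comma-separated `key=value` form that `--show-labels` prints. `selector_matches` is a hypothetical name, and set-based selectors (`In`, `NotIn`, ...) are deliberately not handled.

```sh
# selector_matches: hypothetical helper illustrating equality-based label selection.
# $1: selector, e.g. "app=myapp,release=v1"
# $2: Pod labels, e.g. "app=myapp,env=test,release=v1"
# Returns 0 if every key=value pair of the selector is present in the labels.
# Note: uses global variables (selector, labels, old_ifs, pair) to stay POSIX.
selector_matches() {
    selector=$1
    # Surround with commas so each pair can be matched as a whole token.
    labels=",$2,"
    # A Service without a selector does not select Pods automatically.
    [ -n "$selector" ] || return 1
    old_ifs=$IFS
    IFS=,
    # Label keys/values cannot contain glob characters, so unquoted splitting is safe.
    for pair in $selector; do
        case "$labels" in
            *",$pair,"*) ;;                  # pair present, keep checking
            *) IFS=$old_ifs; return 1 ;;     # pair missing: no match
        esac
    done
    IFS=$old_ifs
    return 0
}
```

For example, `selector_matches "app=myapp,release=v1" "app=myapp,env=test,pod-template-hash=5695bb5658,release=v1"` succeeds. That is why all three Pods above become endpoints of `myapp-clusterip`.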

Apply the Service and check its status:

```
[root@k8s-master service]# kubectl apply -f myapp-svc-ClusterIP.yaml
service/myapp-clusterip created
[root@k8s-master service]#
[root@k8s-master service]# kubectl get svc -o wide
NAME              TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)    AGE   SELECTOR
kubernetes        ClusterIP   10.96.0.1        <none>        443/TCP    16d   <none>
myapp-clusterip   ClusterIP   10.102.246.104   <none>        8080/TCP   6s    app=myapp,release=v1
```

Check the IPVS rules:

```
[root@k8s-master service]# ipvsadm -Ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
………………
TCP  10.102.246.104:8080 rr
  -> 10.244.2.111:80              Masq    1      0          0
  -> 10.244.3.164:80              Masq    1      0          0
  -> 10.244.3.165:80              Masq    1      0          0
```

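The IPVS rules show that the forwarding path is in place. The virtual server 10.102.246.104:8080 uses the rr scheduler and forwards to port 80 of all three Pods in NAT (Masq) mode. Under normal conditions, a request sent to the Service should therefore reach one of the backend Pods and return a response.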
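On a cluster with many Services, the `ipvsadm -Ln` listing gets long. A quick way to compare the IPVS backends with the Pod IPs is to print only the real servers behind one virtual service. `ipvsadm` can already narrow its own listing with `-t`, for example `ipvsadm -Ln -t 10.102.246.104:8080`. The awk filter below is a sketch for saved output or scripts. It assumes the `ipvsadm -Ln` text layout shown above. `ipvs_backends` is a hypothetical name.

```sh
# ipvs_backends: hypothetical helper. Reads `ipvsadm -Ln` output on stdin and
# prints the real servers (RemoteAddress:Port) of the virtual service in $1,
# e.g. "10.102.246.104:8080".
ipvs_backends() {
    awk -v vs="$1" '
        # A virtual-service line starts with the protocol or FWM,
        # e.g. "TCP  10.102.246.104:8080 rr"
        $1 == "TCP" || $1 == "UDP" || $1 == "SCTP" || $1 == "FWM" { in_vs = ($2 == vs); next }
        # Real-server lines start with "->"; the column header line appears
        # before any virtual service, so in_vs is still unset there.
        in_vs && $1 == "->" { print $2 }
    '
}
```

For example, `ipvsadm -Ln | ipvs_backends 10.102.246.104:8080` should list the same three Pod IPs on port 80 as the transcript above.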

Curl results

Accessing the Pods directly works, as shown below.

```
[root@k8s-master service]# curl 10.244.2.111/hostname.html
myapp-deploy-5695bb5658-7tgfx
[root@k8s-master service]#
[root@k8s-master service]# curl 10.244.3.165/hostname.html
myapp-deploy-5695bb5658-95zxm
[root@k8s-master service]#
[root@k8s-master service]# curl 10.244.3.164/hostname.html
myapp-deploy-5695bb5658-xtxbp
```

Access through the Service, however, fails:

```
[root@k8s-master service]# curl 10.102.246.104:8080
curl: (7) Failed connect to 10.102.246.104:8080; Connection timed out
```

Troubleshooting

Verifying with a packet capture

Capture the traffic with the following command and analyze the capture file in Wireshark:

```
tcpdump -i any -n -nn port 80 -w ./$(date +%Y%m%d%H%M%S).pcap
```

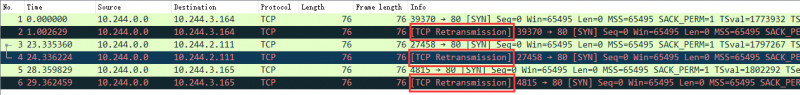

结果如下图:

The capture shows that the request was sent to the Pod, but no reply ever came back, so TCP kept retransmitting it (shown as [TCP Retransmission]).

Checking the kube-proxy logs

```
[root@k8s-master service]# kubectl get pod -A | grep 'kube-proxy'
kube-system   kube-proxy-6bfh7   1/1   Running   1   3h52m
kube-system   kube-proxy-6vfkf   1/1   Running   1   3h52m
kube-system   kube-proxy-bvl9n   1/1   Running   1   3h52m
[root@k8s-master service]#
[root@k8s-master service]# kubectl logs -n kube-system kube-proxy-6bfh7
W0601 13:01:13.170506       1 feature_gate.go:235] Setting GA feature gate SupportIPVSProxyMode=true. It will be removed in a future release.
I0601 13:01:13.338922       1 node.go:135] Successfully retrieved node IP: 172.16.1.112
I0601 13:01:13.338960       1 server_others.go:172] Using ipvs Proxier.   ##### ipvs mode is in use
W0601 13:01:13.339400       1 proxier.go:420] IPVS scheduler not specified, use rr by default
I0601 13:01:13.339638       1 server.go:571] Version: v1.17.4
I0601 13:01:13.340126       1 conntrack.go:100] Set sysctl 'net/netfilter/nf_conntrack_max' to 131072
I0601 13:01:13.340159       1 conntrack.go:52] Setting nf_conntrack_max to 131072
I0601 13:01:13.340500       1 conntrack.go:83] Setting conntrack hashsize to 32768
I0601 13:01:13.346991       1 conntrack.go:100] Set sysctl 'net/netfilter/nf_conntrack_tcp_timeout_established' to 86400
I0601 13:01:13.347035       1 conntrack.go:100] Set sysctl 'net/netfilter/nf_conntrack_tcp_timeout_close_wait' to 3600
I0601 13:01:13.347703       1 config.go:313] Starting service config controller
I0601 13:01:13.347718       1 shared_informer.go:197] Waiting for caches to sync for service config
I0601 13:01:13.347736       1 config.go:131] Starting endpoints config controller
I0601 13:01:13.347743       1 shared_informer.go:197] Waiting for caches to sync for endpoints config
I0601 13:01:13.448223       1 shared_informer.go:204] Caches are synced for endpoints config
I0601 13:01:13.448236       1 shared_informer.go:204] Caches are synced for service config
```

The kube-proxy logs show nothing abnormal.
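Each node runs its own kube-proxy Pod, so it can help to script the check for which proxier each one chose. The sketch below extracts the mode from the startup line `Using ipvs Proxier.` seen in the log above. `proxy_mode` is a hypothetical name. It assumes the startup lines are still present in the log, which may not hold for a long-running Pod whose logs have rotated.

```sh
# proxy_mode: hypothetical helper. Reads kube-proxy log lines on stdin and prints
# the proxier reported at startup ("ipvs", "iptables", ...), taken from the
# "Using <mode> Proxier." line. Prints nothing if no such line is found.
proxy_mode() {
    sed -n 's/.*Using \([a-z]*\) Proxier.*/\1/p' | head -n 1
}
```

Usage: `kubectl logs -n kube-system kube-proxy-6bfh7 | proxy_mode`. Repeat this for each kube-proxy Pod listed by `kubectl get pod -A | grep kube-proxy`.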

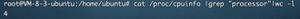

Checking and changing the NIC settings

Note: the following was done on the k8s-master node.

Further searching suggested that the problem may be caused by checksum offloading. The current settings of the flannel interface are:

```
[root@k8s-master service]# ethtool -k flannel.1 | grep checksum
rx-checksumming: on
tx-checksumming: on                    ##### currently on
	tx-checksum-ipv4: off [fixed]
	tx-checksum-ip-generic: on     ##### currently on
	tx-checksum-ipv6: off [fixed]
	tx-checksum-fcoe-crc: off [fixed]
	tx-checksum-sctp: off [fixed]
```

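flannel leaves transmit checksum offloading enabled on its virtual interface. It should be turned off so that the checksum is left to the physical NIC. To turn it off: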

```
# Disable temporarily
[root@k8s-master service]# ethtool -K flannel.1 tx-checksum-ip-generic off
Actual changes:
tx-checksumming: off
	tx-checksum-ip-generic: off
tcp-segmentation-offload: off
	tx-tcp-segmentation: off [requested on]
	tx-tcp-ecn-segmentation: off [requested on]
	tx-tcp6-segmentation: off [requested on]
	tx-tcp-mangleid-segmentation: off [requested on]
udp-fragmentation-offload: off [requested on]
[root@k8s-master service]#
# Query again
[root@k8s-master service]# ethtool -k flannel.1 | grep checksum
rx-checksumming: on
tx-checksumming: off                   ##### now off
	tx-checksum-ipv4: off [fixed]
	tx-checksum-ip-generic: off    ##### now off
	tx-checksum-ipv6: off [fixed]
	tx-checksum-fcoe-crc: off [fixed]
	tx-checksum-sctp: off [fixed]
```

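Note that this change only lasts until the next reboot. After the machine restarts, checksum offloading is enabled again on the flannel virtual interface.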
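Before the change is made permanent, an idempotent wrapper makes the manual step safe to repeat. It only calls `ethtool -K` when `ethtool -k` reports `tx-checksum-ip-generic: on`. This is a sketch. `flannel_csum_off` is a hypothetical name, and the `ETHTOOL` override variable is an assumption added only so the logic can be tried without root. Only the `ethtool -k` / `ethtool -K ... tx-checksum-ip-generic off` calls come from the steps above.

```sh
# flannel_csum_off: hypothetical helper. Turns off tx-checksum-ip-generic on an
# interface (default flannel.1) only if it is currently "on".
# ETHTOOL (assumption) may name another command, e.g. a test stub; default: ethtool.
# Note: uses global variables (iface, ethtool_cmd, state) to stay POSIX.
flannel_csum_off() {
    iface=${1:-flannel.1}
    ethtool_cmd=${ETHTOOL:-ethtool}
    # "ethtool -k" prints e.g. "\ttx-checksum-ip-generic: on"; take the 2nd field.
    state=$("$ethtool_cmd" -k "$iface" | awk '$1 == "tx-checksum-ip-generic:" { print $2 }')
    case "$state" in
        on)  "$ethtool_cmd" -K "$iface" tx-checksum-ip-generic off &&
                 echo "$iface: tx-checksum-ip-generic turned off" ;;
        off) echo "$iface: tx-checksum-ip-generic already off" ;;
        *)   echo "$iface: cannot read tx-checksum-ip-generic state" >&2
             return 1 ;;
    esac
}
```

Running `flannel_csum_off` twice should print "turned off" the first time and "already off" the second time. It fails with a message if the interface does not exist yet.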

Now try curl again:

```
[root@k8s-master ~]# curl 10.102.246.104:8080
Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>
[root@k8s-master ~]#
[root@k8s-master ~]# curl 10.102.246.104:8080/hostname.html
myapp-deploy-5695bb5658-7tgfx
[root@k8s-master ~]#
[root@k8s-master ~]# curl 10.102.246.104:8080/hostname.html
myapp-deploy-5695bb5658-95zxm
[root@k8s-master ~]#
[root@k8s-master ~]# curl 10.102.246.104:8080/hostname.html
myapp-deploy-5695bb5658-xtxbp
[root@k8s-master ~]#
[root@k8s-master ~]# curl 10.102.246.104:8080/hostname.html
myapp-deploy-5695bb5658-7tgfx
```
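With the `rr` scheduler shown in the `ipvsadm` output, consecutive requests should rotate across the three Pods, and the transcript shows this. IPVS schedules per connection, and each `curl` run opens a new one. To check the distribution over more requests, the responses can be piped into a small counter. This is a sketch. `count_backends` is a hypothetical name, and it assumes each `hostname.html` response is a single Pod name on its own line, as the transcript suggests.

```sh
# count_backends: hypothetical helper. Reads Pod names (one per line, e.g. the
# bodies of repeated `curl <ClusterIP>:8080/hostname.html` calls) on stdin and
# prints "<pod> <count>" for each Pod, sorted by Pod name. Blank lines are ignored.
count_backends() {
    awk 'NF' | sort | uniq -c | awk '{ print $2, $1 }'
}
```

Usage: `for i in 1 2 3 4 5 6; do curl -s 10.102.246.104:8080/hostname.html; done | count_backends`. With three Pods and no other clients, six requests would ideally come back as two per Pod. Other traffic to the Service can shift the rotation.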

As shown above, the Service is reachable again, and successive requests are answered by the Pods in turn.

Permanently disabling transmit checksum offloading on the flannel interface

Note: do this on all machines.

Create a systemd service with the following unit file:

```
[root@k8s-node02 ~]# cat /etc/systemd/system/k8s-flannel-tx-checksum-off.service
[Unit]
Description=Turn off checksum offload on flannel.1
After=sys-devices-virtual-net-flannel.1.device

[Install]
WantedBy=sys-devices-virtual-net-flannel.1.device

[Service]
Type=oneshot
ExecStart=/sbin/ethtool -K flannel.1 tx-checksum-ip-generic off
```
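The unit has to be installed on every machine, so a small function that writes exactly this file is less error-prone than retyping it on each host, for example when run over ssh. This is a sketch. `write_flannel_unit` is a hypothetical name. Its directory parameter (default `/etc/systemd/system`) is an addition so the output can be inspected without root.

```sh
# write_flannel_unit: hypothetical helper that writes the unit file shown above.
# $1: target directory (defaults to /etc/systemd/system).
write_flannel_unit() {
    dir=${1:-/etc/systemd/system}
    # Quoted 'EOF' prevents any expansion inside the unit text.
    cat > "$dir/k8s-flannel-tx-checksum-off.service" <<'EOF'
[Unit]
Description=Turn off checksum offload on flannel.1
After=sys-devices-virtual-net-flannel.1.device

[Install]
WantedBy=sys-devices-virtual-net-flannel.1.device

[Service]
Type=oneshot
ExecStart=/sbin/ethtool -K flannel.1 tx-checksum-ip-generic off
EOF
}
```

After calling `write_flannel_unit` on a host, run the `systemctl enable` and `systemctl start` commands for the service there.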

Enable the service at boot and start it:

```
systemctl enable k8s-flannel-tx-checksum-off
systemctl start k8s-flannel-tx-checksum-off
```

Related reading

1. Analysis of slow access to Services forwarded by IPVS in k8s (Part 1)

2. Kubernetes + Flannel: UDP packets dropped for wrong checksum – Workaround



Source: utcz.com/a/49485.html