Python crawler: the images are corrupted after downloading. How do I fix this?

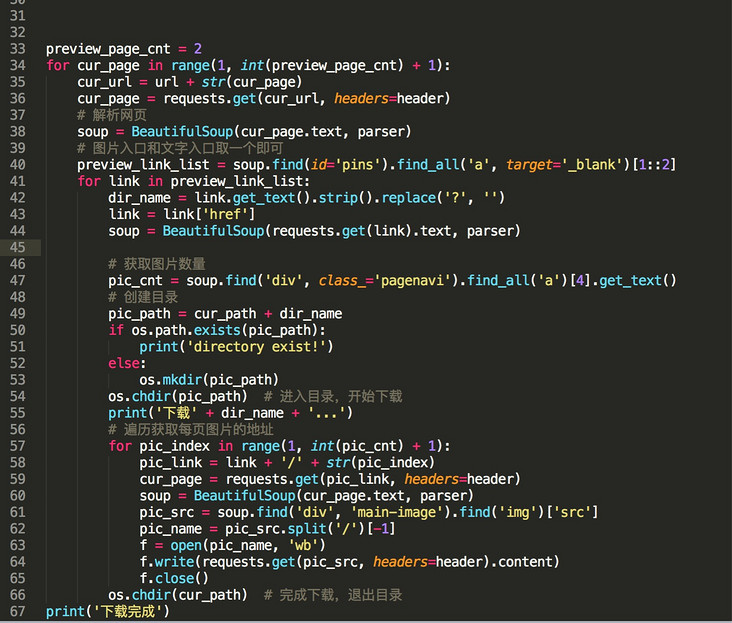

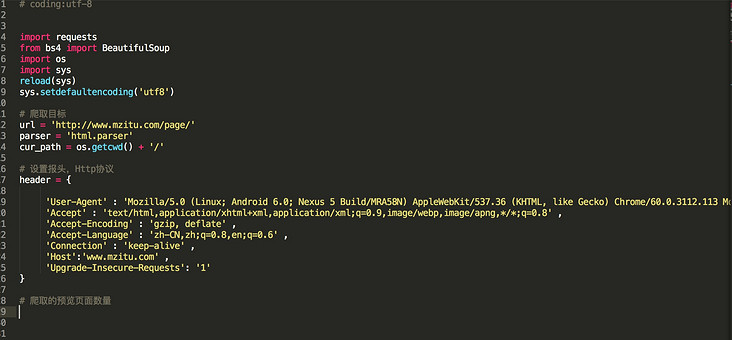

    # coding:utf-8
    import requests
    from bs4 import BeautifulSoup
    import os
    import sys

    # Python 2 only: force the default string encoding to UTF-8
    reload(sys)
    sys.setdefaultencoding('utf8')

    # Crawl target
    url = 'http://www.mzitu.com/page/'
    parser = 'html.parser'
    cur_path = os.getcwd() + '/'

    # Request headers (HTTP)
    header = {
        'User-Agent': 'Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/60.0.3112.113 Mobile Safari/537.36',
        'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8',
        'Accept-Encoding': 'gzip, deflate',
        'Accept-Language': 'zh-CN,zh;q=0.8,en;q=0.6',
        'Connection': 'keep-alive',
        'Host': 'www.mzitu.com',
        'Upgrade-Insecure-Requests': '1',
        'Referer': 'http:://http://www.mzitu.com/'
    }

    # Number of preview pages to crawl
    preview_page_cnt = 2

    for cur_page in range(1, int(preview_page_cnt) + 1):
        cur_url = url + str(cur_page)
        cur_page = requests.get(cur_url, headers=header)
        # Parse the page
        soup = BeautifulSoup(cur_page.text, parser)
        # Each post has an image link and a text link; take only one of them
        preview_link_list = soup.find(id='pins').find_all('a', target='_blank')[1::2]
        for link in preview_link_list:
            dir_name = link.get_text().strip().replace('?', '')
            link = link['href']
            soup = BeautifulSoup(requests.get(link).text, parser)
            # Get the number of images
            pic_cnt = soup.find('div', class_='pagenavi').find_all('a')[4].get_text()
            # Create the directory
            pic_path = cur_path + dir_name
            if os.path.exists(pic_path):
                print('directory exist!')
            else:
                os.mkdir(pic_path)
            os.chdir(pic_path)  # Enter the directory and start downloading
            print('Downloading ' + dir_name + '...')
            # Walk through the pages to get each image's URL
            for pic_index in range(1, int(pic_cnt) + 1):
                pic_link = link + '/' + str(pic_index)
                cur_page = requests.get(pic_link, headers=header)
                soup = BeautifulSoup(cur_page.text, parser)
                pic_src = soup.find('div', 'main-image').find('img')['src']
                pic_name = pic_src.split('/')[-1]
                f = open(pic_name, 'wb')
                f.write(requests.get(pic_src, headers=header).content)
                f.close()
            os.chdir(cur_path)  # Download finished, leave the directory

    print('Download complete')

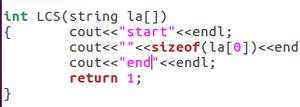

Answer:

I don't like reading screenshots.

Answer:

Try `urlretrieve`.
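
A minimal sketch of that suggestion, assuming Python 3, where `urlretrieve` lives in `urllib.request` (in the Python 2 used by the question it is `urllib.urlretrieve`). The helper name `save_with_urlretrieve` is hypothetical. Note that `urlretrieve` sends only urllib's default headers, not the custom `header` dict, so whether the site serves the image to it is a separate matter.

```python
from urllib.request import urlretrieve


def save_with_urlretrieve(pic_src, pic_name):
    """Download pic_src into the local file pic_name and return the saved path."""
    # urlretrieve copies the response body to disk and returns
    # a (filename, headers) tuple; only the filename is used here.
    saved_path, _headers = urlretrieve(pic_src, pic_name)
    return saved_path
```

In the question's loop this would stand in for the `open` / `write` / `close` lines, for example `save_with_urlretrieve(pic_src, pic_name)`.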

Answer:

    ret = requests.get(pic_src, headers=header)
    open(pic_name, 'wb').write(ret.content)

How about trying it this way?
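
Keeping the response in `ret` also makes it possible to inspect the bytes before writing them. A "corrupted" image is sometimes an error page saved under an image file name; that is an assumption about this case, not something the thread confirms. The sketch below checks the well-known JPEG, PNG, and GIF file signatures; the function name `looks_like_image` is hypothetical.

```python
def looks_like_image(data):
    """Return True if data starts with a JPEG, PNG, or GIF file signature."""
    signatures = (
        b"\xff\xd8\xff",       # JPEG
        b"\x89PNG\r\n\x1a\n",  # PNG
        b"GIF87a",             # GIF (1987 format)
        b"GIF89a",             # GIF (1989 format)
    )
    # bytes.startswith accepts a tuple and matches any of its entries
    return data.startswith(signatures)
```

Calling `looks_like_image(ret.content)` before the `write` would show whether the server returned image data at all; if it returns `False`, printing `ret.status_code` and the first bytes of `ret.content` may reveal what came back instead.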

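Answer:

    for pic_index in range(1, int(pic_cnt) + 1):
        pic_link = link + '/' + str(pic_index)
        cur_page = requests.get(pic_link, headers=header)
        soup = BeautifulSoup(cur_page.text, parser)
        pic_src = soup.find('div', 'main-image').find('img')['src']
        pic_name = pic_src.split('/')[-1]
        f = open(pic_name, 'wb')
        f.write(requests.get(pic_src, headers=header).content)  # the header is the problem
        f.close()

The header is the problem, so adjust it yourself. Note that the same `header` dict, with `Host` hard-coded to `www.mzitu.com` and a malformed `Referer` value (`http:://http://www.mzitu.com/`), is also sent with the image request.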
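
One possible way to adjust the header, as a sketch only: build a separate dict for the image request that drops the hard-coded `Host` (so the HTTP library can derive it from the image URL) and sets a well-formed `Referer`. The helper name `image_request_headers` is hypothetical, and which `Referer` the site actually expects is an assumption that the thread does not settle.

```python
def image_request_headers(base_headers, referer):
    """Return a copy of base_headers adjusted for a request to another host."""
    headers = dict(base_headers)  # copy, so the page headers stay untouched
    # Remove the fixed Host so the client fills it in from the request URL
    headers.pop('Host', None)
    # Replace the Referer with the caller-supplied, well-formed URL
    headers['Referer'] = referer
    return headers
```

In the loop above this could be used as `requests.get(pic_src, headers=image_request_headers(header, pic_link))`, where passing `pic_link` as the referer is a guess.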

Source: utcz.com/a/162531.html